The GitHub Action: Governed PRs in 5 Minutes

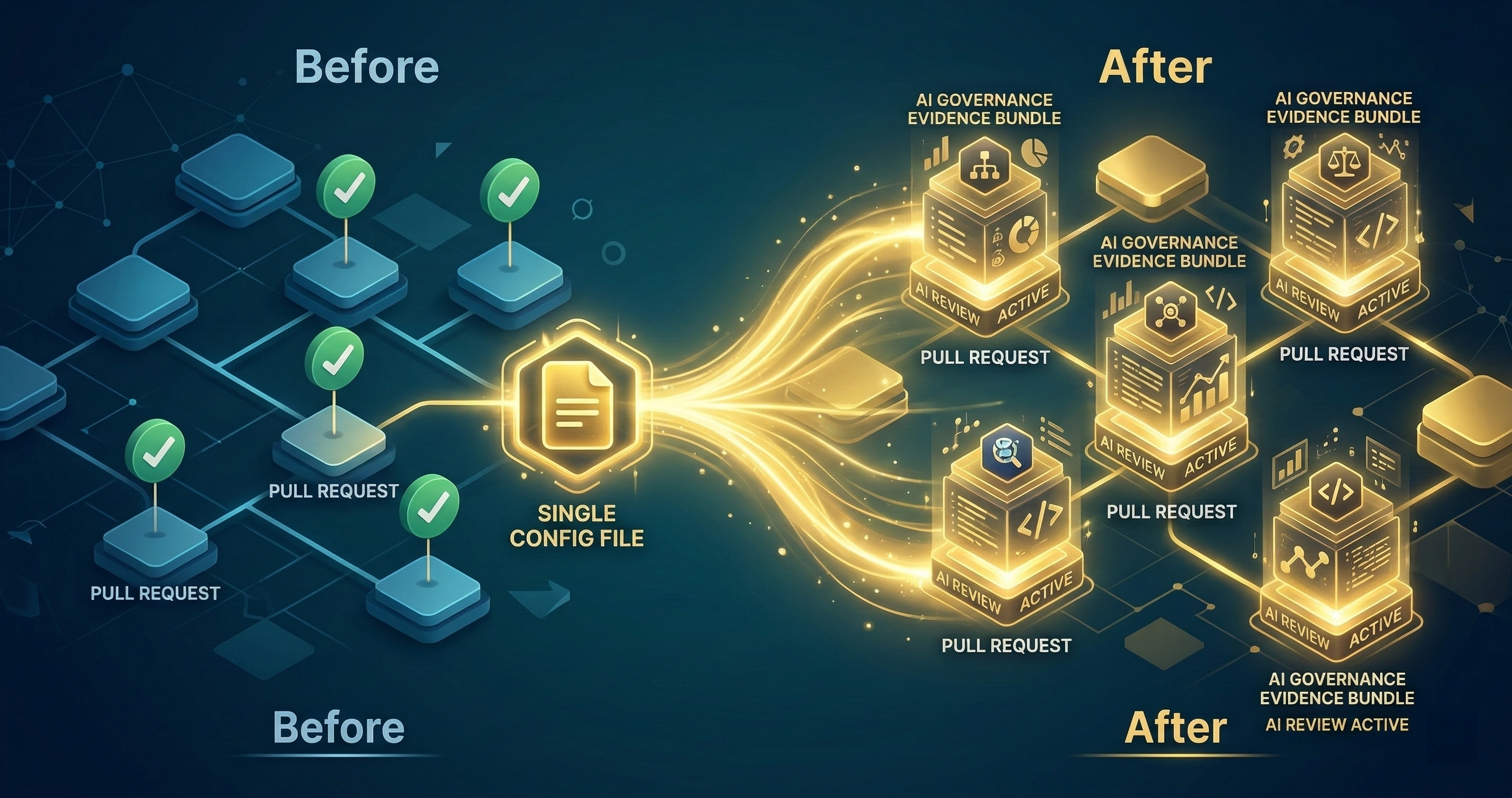

Five minutes. One YAML file. Every PR in your repository gets risk-tiered, evidence-sealed, and audit-ready. No infrastructure to deploy. No vendor to onboard.

I spent months building the governance architecture. Risk tiers, AI councils, evidence bundles, hash chains. All of it was useless until someone could install it without reading a dissertation. The GitHub Action is the answer to “how do I try this right now?”

One YAML File

Add this to .github/workflows/codeguard.yml:

name: CodeGuard Review

on:
  pull_request:
    types: [opened, synchronize]

jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0
      - uses: DNYoussef/codeguard-action@v1
        with:
          risk_threshold: L2
          auto_approve: L1
          models: claude-sonnet,gpt-4o
        env:
          ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}
          OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}

That’s it. Push this file. Open a PR. Governance starts.

What Happens on Every PR

The moment a PR opens or updates, the Action executes a four-step process.

Step 1: Diff Extraction

The Action pulls the complete diff between your PR branch and the base branch. But it doesn’t stop at file-level changes. It parses the diff into change units — individual functions, imports, configuration values, and infrastructure definitions.

A 200-line PR that touches three files might produce eight change units: two function modifications, one new function, three import changes, one config value update, and one infrastructure definition change. Each change unit gets evaluated independently.

Why this granularity matters: a PR that adds a benign utility function AND modifies an authentication check shouldn’t be treated uniformly. The utility function is L0. The auth change is L3. Treating the whole PR as L3 creates review fatigue. Treating it as L0 misses the critical change. Change-unit granularity gets the classification right.

Step 2: Risk Tier Assignment

Each change unit gets classified into one of five risk tiers:

L0 - Trivial. Formatting, comments, whitespace, documentation. No functional impact. Zero risk of introducing bugs or security issues.

L1 - Low. Test additions, logging changes, minor refactoring that doesn’t alter behavior. Low risk. Evidence is generated but no review gate.

L2 - Medium. New features, bug fixes, dependency updates. Moderate risk. Worth reviewing but not blocking.

L3 - High. Authentication, authorization, cryptography, PII handling. Changes that affect security boundaries. Requires multi-model review.

L4 - Critical. Payment processing, financial calculations, infrastructure security, deployment configuration. Changes where errors have outsized consequences. Requires council review and human approval.

The classification runs against a policy file. The default policy covers the patterns that cause the most damage: auth middleware, token handling, encryption, database migration, payment routes, IAM policies, secrets management. You can customize the policy to add patterns specific to your codebase.
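To make the pattern-matching idea concrete, here is a minimal sketch of per-change-unit classification. Everything in it is an assumption for illustration: the `POLICY` patterns, the `ChangeUnit` shape, and the "default to L2" rule are hypothetical and are not the Action's shipped policy or internals.

```python
import re
from dataclasses import dataclass

TIER_ORDER = ["L0", "L1", "L2", "L3", "L4"]

# Hypothetical policy: tier -> regex patterns checked against path and changed text.
# Illustrative only; these are not the Action's default patterns.
POLICY = {
    "L4": [r"payment", r"iam[_-]?policy", r"deploy"],
    "L3": [r"auth", r"token", r"encrypt", r"secret"],
    "L1": [r"(^|/)tests?/", r"\blog(ger|ging)?\b"],
    "L0": [r"\.md$"],
}


@dataclass
class ChangeUnit:
    path: str  # file the unit lives in
    kind: str  # e.g. "function", "import", "config"
    text: str  # the changed lines


def classify(unit: ChangeUnit) -> str:
    """Return the highest tier with a matching pattern; unmatched units default to L2."""
    haystack = f"{unit.path}\n{unit.text}".lower()
    for tier in reversed(TIER_ORDER):  # check L4 first so the riskiest match wins
        for pattern in POLICY.get(tier, []):
            if re.search(pattern, haystack, re.MULTILINE):
                return tier
    return "L2"


# A mixed PR: each unit gets its own tier instead of one tier for the whole PR.
pr_units = [
    ChangeUnit("README.md", "doc", "Fix typo in install steps"),
    ChangeUnit("src/middleware/auth.py", "function",
               "if not verify_token(request): raise Forbidden"),
]
for unit in pr_units:
    print(unit.path, classify(unit))
```

Checking the highest tier first is a deliberate choice in this sketch: a unit that touches both a test helper and a token check should land in the stricter tier.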

Step 3: Council Review

Multiple AI models review the change units independently. This is the key differentiator from single-model AI review tools. Independent reviewers catch different classes of issues.

Claude excels at logic errors and architectural concerns. GPT catches API misuse and common vulnerability patterns. Gemini flags dependency issues and compatibility problems. When three models all approve, confidence is high. When they disagree, the disagreement itself is valuable information.

The council votes on each finding. The consensus type depends on the risk tier:

- L0-L1: No council needed. Auto-approved with evidence.

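- L2: MAJORITY consensus. More than half of the council must agree (two out of three in a three-model council, both models in a two-model council).

- L3: SUPERMAJORITY consensus. Two out of three models must agree with high confidence.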

- L4: UNANIMOUS consensus required.
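The tier-to-consensus mapping above can be sketched as a small decision function. This is a sketch under stated assumptions, not the Action's code: the `Vote` shape, the 0.8 cutoff for "high confidence", and the two-thirds rule generalized beyond three models are all hypothetical.

```python
from dataclasses import dataclass
from math import ceil

HIGH_CONFIDENCE = 0.8  # assumed cutoff; the article does not define "high confidence"


@dataclass
class Vote:
    model: str
    approve: bool
    confidence: float  # 0.0-1.0, assumed to be reported by each reviewer


def council_decision(tier: str, votes: list[Vote]) -> str:
    """Apply the consensus rule for a tier to a list of independent votes."""
    if tier in ("L0", "L1"):
        return "AUTO_APPROVED"  # no council needed
    n = len(votes)
    approvals = [v for v in votes if v.approve]
    if tier == "L2":  # MAJORITY: more than half approve
        ok = len(approvals) > n / 2
    elif tier == "L3":  # SUPERMAJORITY: two thirds approve with high confidence
        confident = [v for v in approvals if v.confidence >= HIGH_CONFIDENCE]
        ok = n > 0 and len(confident) >= ceil(2 * n / 3)
    else:  # L4, UNANIMOUS: every model approves
        ok = n > 0 and len(approvals) == n
    return "CONSENSUS_REACHED" if ok else "NO_CONSENSUS"


votes = [
    Vote("claude-sonnet", True, 0.9),
    Vote("gpt-4o", True, 0.6),
    Vote("gemini-pro", False, 0.7),
]
for tier in ("L1", "L2", "L3", "L4"):
    print(tier, council_decision(tier, votes))
```

The same three votes pass at L2 and fail at L3 and L4, which is the point of tying the consensus type to the risk tier. For L4, reaching consensus is only half the gate; human approval still follows.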

Step 4: Evidence Bundle

Every review produces an evidence bundle, regardless of the outcome. The bundle contains:

- The original diff (complete, not summarized)

- Each model’s independent review with findings

- The risk tier classification with rationale

- The council vote and consensus calculation

- Timestamps and model versions

- A SHA-256 hash chain linking this evidence to the repository’s history

The bundle is uploaded as a GitHub Actions artifact. It persists for 90 days by default (configurable). Anyone with repository access can download and verify it.
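If the hash chain is new to you, here is a minimal sketch of the idea using Python's standard `hashlib`. The bundle fields, the all-zero `GENESIS` value, and chaining bundle-to-bundle are assumptions for illustration; how the Action actually links evidence to repository history is not specified here.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the first bundle in a chain


def seal(bundle: dict, prev_hash: str) -> str:
    """SHA-256 over the previous hash plus the canonical JSON of this bundle."""
    canonical = json.dumps(bundle, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


def build_chain(bundles: list[dict]) -> list[str]:
    """Return one hash per bundle, each depending on every bundle before it."""
    hashes, prev = [], GENESIS
    for bundle in bundles:
        prev = seal(bundle, prev)
        hashes.append(prev)
    return hashes


def verify_chain(bundles: list[dict], hashes: list[str]) -> bool:
    """Recompute the chain and compare it with the recorded hashes."""
    return len(bundles) == len(hashes) and build_chain(bundles) == hashes


# Hypothetical bundles; real ones would carry the diff, reviews, and votes.
bundles = [{"pr": 1, "tier": "L2"}, {"pr": 2, "tier": "L3"}]
hashes = build_chain(bundles)
print(verify_chain(bundles, hashes))   # chain matches the recorded hashes
bundles[0]["tier"] = "L0"              # tamper with an earlier bundle
print(verify_chain(bundles, hashes))   # recomputed chain no longer matches
```

Because each hash folds in the previous one, editing an old bundle invalidates every hash after it. That is what makes the record tamper-evident rather than merely stored.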

Configuration Options

Risk Tier Thresholds

with:
  risk_threshold: L2

The risk_threshold determines which risk level triggers a blocking review. Set it to L2 and anything L2 or above gets reviewed. Set it to L3 and L2 changes flow through with evidence but without blocking.

Most teams start at L2. After a month of seeing the evidence quality and false positive rate, they either raise it to L3 (if false positives are too frequent) or keep it at L2 (if the signal is good).

Auto-Approve Level

with:
  auto_approve: L1

Changes at or below this level get auto-approved with evidence. The evidence still gets generated and attached. The PR isn’t blocked. This is for the trivial changes that don’t warrant anyone’s attention but should still have an audit trail.
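How the two settings interact is easier to see in code. A minimal sketch, assuming the outcome names (`blocking_review`, `auto_approve`, `evidence_only`) and the rule that the blocking threshold wins if the two settings overlap; neither is taken from the Action's source.

```python
TIERS = ["L0", "L1", "L2", "L3", "L4"]


def gate(tier: str, risk_threshold: str = "L2", auto_approve: str = "L1") -> str:
    """Map a change's tier to an outcome given the two workflow inputs."""
    rank = TIERS.index
    if rank(tier) >= rank(risk_threshold):
        return "blocking_review"  # at or above the threshold: review gate
    if rank(tier) <= rank(auto_approve):
        return "auto_approve"     # at or below the auto-approve level
    return "evidence_only"        # in between: evidence, no gate, no approval


for tier in TIERS:
    print(tier, gate(tier), gate(tier, risk_threshold="L3"))
```

With the defaults there is no gap between the two settings. Raise `risk_threshold` to L3 and L2 changes fall into the middle band: evidence generated, nothing blocked.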

Model Selection

with:
  models: claude-sonnet,gpt-4o,gemini-pro

Choose which models participate in the council. More models means more diverse review coverage but slower execution and higher API costs. Two models is the minimum for meaningful consensus. Three is the sweet spot. Four or more is for high-security environments.

Notification Channels

with:
  notify_slack: ${{ secrets.SLACK_WEBHOOK }}
  notify_channel: "#code-review"

When a PR triggers a review gate, notify your team. The notification includes the risk tier, the findings summary, and a link to the evidence bundle. The reviewer who needs to approve has the context before they open the PR.

Custom Policy File

with:
  policy_file: .github/codeguard-policy.yml

Override the default risk classification with your own patterns. Add your internal service names, custom security annotations, or domain-specific risk patterns.

What the Evidence Looks Like

After a PR is reviewed, the Action posts a comment on the PR with a summary:

## CodeGuard Review

Risk Tier: L2 (Medium)

Council: 2/2 APPROVE (UNANIMOUS)

Evidence: [Download Bundle](link-to-artifact)

### Findings

- [INFO] New dependency added: lodash@4.17.21 (no known vulnerabilities)

- [INFO] Function `calculateDiscount` modified: logic change verified consistent with test cases

- [WARN] Error handling in `processPayment` catches generic Exception - consider specific error types

### Risk Classification

- src/utils/discount.ts: L1 (utility function modification)

- src/api/payment.ts: L2 (payment-adjacent code, no direct financial calculation)

- package.json: L1 (dependency addition, clean audit)

The PR comment is the summary. The evidence bundle is the full record. The comment helps the developer. The bundle helps the auditor.

What This Costs

API costs depend on your PR volume and model selection. A typical PR with a 200-line diff costs roughly:

- Claude Sonnet review: ~$0.02-0.05

- GPT-4o review: ~$0.03-0.06

- Two-model council: ~$0.05-0.11 per PR

A team of 10 developers averaging 5 PRs per day per developer opens about 1,100 PRs over a 22-working-day month, which comes to roughly $55-120/month on AI review. That’s in the range of a single hour of a senior engineer’s time doing manual code review.
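The monthly figure is simple multiplication, so it is worth plugging in your own numbers. A small sketch using the per-PR range quoted above; the 22 working days per month is my assumption, not a figure from the Action.

```python
def monthly_cost(developers: int, prs_per_dev_per_day: float,
                 cost_per_pr: tuple[float, float], working_days: int = 22):
    """Return (PR count, low estimate, high estimate) for one month of reviews."""
    low, high = cost_per_pr
    prs = developers * prs_per_dev_per_day * working_days
    return prs, prs * low, prs * high


# Two-model council at $0.05-0.11 per PR, 10 developers, 5 PRs each per day.
prs, low, high = monthly_cost(10, 5, (0.05, 0.11))
print(f"{prs} PRs/month: ${low:.2f}-${high:.2f}")
```

At 22 working days that is 1,100 PRs and about $55 to $121 a month. Spend scales linearly, so halving the PR rate or dropping to one model cuts the bill proportionally.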

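The GitHub Actions compute time is minimal, since most of the execution is spent waiting for API responses. On the GitHub Free plan, private repositories get 2,000 Actions minutes a month and public repositories are not metered on standard runners, so the Action fits within the allocation for most team sizes.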

Webhook Adapter Integration

The GitHub Action is the entry point. For teams that want governance data flowing into other systems, the webhook adapter connects the evidence pipeline to your existing tools.

Configure a webhook URL and every evidence bundle gets POSTed to your endpoint:

with:
  webhook_url: ${{ secrets.GOVERNANCE_WEBHOOK }}
  webhook_events: review_complete,risk_escalation

Events you can subscribe to:

- review_complete: Every completed review, regardless of outcome

- risk_escalation: When a PR exceeds a risk threshold

- council_disagreement: When council models disagree

- evidence_sealed: When a bundle is finalized

The webhook payload includes the full evidence bundle. Pipe it into your SIEM, your GRC platform, your compliance dashboard, or a Slack channel. The evidence flows where your existing workflows expect it.
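On the receiving side, the first thing your endpoint does is decide where each event goes. A minimal routing sketch follows. The payload schema is not documented here, so the `event` field name and the destination labels are assumptions; check a real payload before relying on them.

```python
import json

# Destinations are hypothetical labels for systems you already run.
ROUTES = {
    "review_complete": "compliance-dashboard",
    "risk_escalation": "security-channel",
    "council_disagreement": "security-channel",
    "evidence_sealed": "archive",
}


def route_webhook(body: bytes) -> str:
    """Parse a POSTed JSON body and pick a destination for it."""
    payload = json.loads(body)
    event = payload.get("event")  # assumed field name for the event type
    return ROUTES.get(event, "ignored")


sample = json.dumps({"event": "risk_escalation", "risk_tier": "L3"}).encode()
print(route_webhook(sample))
```

Unknown events fall through to `ignored` instead of raising, so a new event type added later does not break the receiver.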

Five Minutes to Governed

I keep coming back to the five-minute claim because it matters. The biggest barrier to adopting governance isn’t technical complexity or cost. It’s friction. If governance takes a week to set up, teams defer it. If it takes a sprint to configure, it gets deprioritized. If it requires infrastructure, it goes into the backlog.

Five minutes. One YAML file. Two API keys you already have. Push, and governance starts on the next PR.

The evidence quality improves over time as you tune the policy file and adjust the risk thresholds. But the baseline — risk-tiered, AI-reviewed, evidence-sealed PRs — starts on day one.

Every PR that flows through without governance is a gap in your audit trail. Every PR that flows through with the Action produces evidence that compounds. In six months, you have thousands of evidence bundles forming a complete governance record.

Start with one repository. The one that keeps you up at night. Five minutes from now, every PR in that repo produces audit-grade evidence.

Ready to try it? The Action is open source. If you want help configuring risk tiers for your specific codebase, book a call and I’ll walk you through the policy file.