CodeGuard: The First Guard Lane

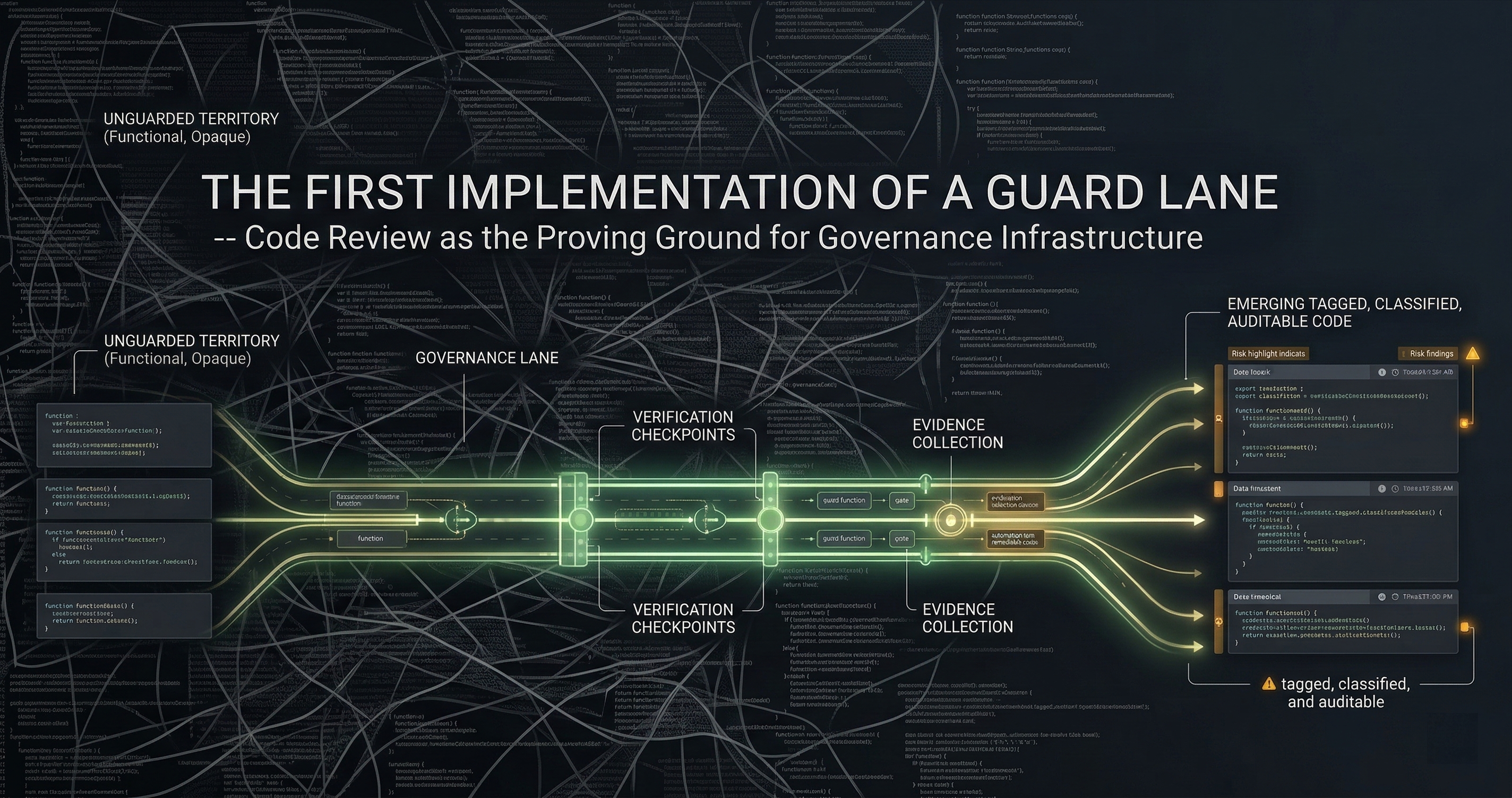

From risk tiers and AI councils to a working GitHub Action that produces audit-grade evidence as a side effect of code review.

I had risk tiers. I had the council. What I didn’t have was something a developer could install in 10 minutes and forget about. So I built a GitHub Action. Drop it in, and every PR gets triaged, reviewed by multiple AI models, and sealed into a tamper-evident evidence bundle. That’s when I realized: the review tool was producing audit evidence as a side effect.

The Gap Between Theory and Installation

In earlier posts I wrote about how vibe coding broke code review and why PR approval is not evidence. Both posts described real problems. Neither shipped a fix.

The risk tier model was solid on paper. L0 through L4, from trivial formatting changes up to critical auth and payment logic. The multi-model council worked — three AI reviewers catching what one human couldn’t. But all of it lived in scripts and notebooks on my machine. Nobody else could use it.

The hard part was never the algorithm. It was the packaging.

What CodeGuard Actually Does

codeguard-action is a GitHub Action. You add it to any repo’s workflow file. When a PR opens, this is what happens:

Step 1: Diff extraction. The Action pulls the full diff and parses it into change units. Not just “which files changed” — which functions, which imports, which config values.

Step 2: Risk tier assignment. Each change unit gets classified. Auth middleware changes? L3 or L4. CSS tweaks? L0. Payment logic? L4, always. The classification runs against a policy file you can customize, but the defaults cover the patterns that matter: authentication, authorization, cryptography, PII handling, financial calculations, infrastructure config.

Step 3: Council review. Multiple AI models review the diff independently. This is the principle from the council architecture — diverse reviewers catch different classes of bugs. Claude spots logic errors. GPT catches API misuse. Gemini flags dependency issues. The council votes, and disagreements get flagged.

Step 4: Evidence bundle sealed. Every step — the raw diff, the risk classification rationale, each council member’s review, the final vote, the approval or escalation decision — gets captured into a single evidence bundle. SHA-256 hash chain. Tamper-evident.

Step 5: Routing. L0-L1 changes auto-approve. L2 gets a comment with the risk analysis. L3-L4 block the merge and require human sign-off. The human reviewer sees exactly what triggered escalation and why.

The whole thing runs in under 90 seconds for a typical PR.
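
Steps 1, 2, 3, and 5 can be sketched in a few dozen lines of Python. This is an illustration of the flow described above, not CodeGuard's implementation: the policy patterns, the `ChangeUnit` shape, the function names, the default tier for unmatched paths, and the rule that the riskiest change unit decides the routing are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from fnmatch import fnmatchcase

# Hypothetical policy: (glob pattern, tier) pairs, where 0 means L0 and 4 means L4.
# Illustrative only; these are not CodeGuard's shipped defaults.
POLICY = [
    ("*auth*", 4),
    ("*payment*", 4),
    ("*crypto*", 3),
    ("*.tf", 3),
    ("*.py", 2),
    ("*.css", 0),
]

HUNK_HEADER = re.compile(r"^@@ .* @@ ?(.*)$")


@dataclass
class ChangeUnit:
    path: str     # file the hunk belongs to
    context: str  # hunk context, usually the enclosing function signature


def extract_units(diff_text: str) -> list[ChangeUnit]:
    """Step 1: split a unified diff into one change unit per hunk."""
    units, path = [], None
    for line in diff_text.splitlines():
        if line.startswith("+++ b/"):
            path = line[len("+++ b/"):]
        elif path is not None and (match := HUNK_HEADER.match(line)):
            units.append(ChangeUnit(path, match.group(1).strip()))
    return units


def classify(unit: ChangeUnit) -> int:
    """Step 2: highest tier among matching patterns; unmatched paths get L1."""
    tiers = [tier for pattern, tier in POLICY if fnmatchcase(unit.path, pattern)]
    return max(tiers, default=1)


def council_vote(votes: dict[str, str]) -> tuple[str, bool]:
    """Step 3: majority verdict, plus a flag set when reviewers disagree."""
    tally: dict[str, int] = {}
    for verdict in votes.values():
        tally[verdict] = tally.get(verdict, 0) + 1
    return max(tally, key=tally.get), len(tally) > 1


def route(units: list[ChangeUnit]) -> str:
    """Step 5: the riskiest change unit decides how the whole PR is routed."""
    tier = max((classify(unit) for unit in units), default=0)
    if tier <= 1:
        return "auto-approve"
    if tier == 2:
        return "comment"
    return "block-for-human-sign-off"
```

With this toy policy, a PR that touches both `styles/site.css` and `src/auth/middleware.py` routes to human sign-off, because the auth hunk is L4 even though the CSS hunk is L0. A real policy file would need more than path globs, since the article's change units also cover imports and config values.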

The Hash Chain

This is the part that makes auditors pay attention.

Every event in the review pipeline gets a content hash. The diff content, the policy evaluation result, each council vote, the final decision — each one is hashed using SHA-256. Then those hashes are chained: each event’s hash includes the previous event’s hash as input. The functions in guardspine-kernel that do this — computeContentHash, buildHashChain, computeRootHash — are deliberately simple. Under 50 lines total.

The hashing uses RFC 8785 canonical JSON (the JSON Canonicalization Scheme, JCS). This matters because JSON objects are unordered, so two serializers can emit the same data with keys in a different order. If you hash {"a":1,"b":2} and someone else hashes {"b":2,"a":1}, you get different hashes for identical data. RFC 8785 defines a deterministic serialization so the same logical content always produces the same hash. No ambiguity. No “it depends on your JSON library.”
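
The key-ordering problem is easy to reproduce. The sketch below uses only the Python standard library and approximates canonical JSON with sorted keys and no insignificant whitespace. That is enough for simple data like this example, but it is not a full RFC 8785 implementation: the RFC also prescribes ECMAScript-style number formatting and a specific key sort order that this helper does not implement. The helper names are made up for the example.

```python
import hashlib
import json


def canonical_json(value) -> bytes:
    # Approximation of RFC 8785 for simple data: sorted keys, compact
    # separators, UTF-8 output. Not a complete JCS implementation.
    text = json.dumps(value, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return text.encode("utf-8")


def content_hash(value) -> str:
    return hashlib.sha256(canonical_json(value)).hexdigest()


first = json.loads('{"a":1,"b":2}')
second = json.loads('{"b":2,"a":1}')

# Naive serialization preserves each document's key order, so the bytes differ.
naive_first = hashlib.sha256(json.dumps(first).encode("utf-8")).hexdigest()
naive_second = hashlib.sha256(json.dumps(second).encode("utf-8")).hexdigest()
print(naive_first == naive_second)                   # False
print(content_hash(first) == content_hash(second))   # True
```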

The root hash of the chain is the fingerprint of the entire review. Change one byte of the diff, one word of a council review, one bit of the approval decision — the root hash changes. You can’t tamper with the middle of the chain without breaking everything downstream.
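
Here is a minimal Python sketch of that chaining, with function names that mirror the three guardspine-kernel functions. It is not the kernel's code. The all-zero genesis value, the `previous:content` link format, and the choice of the last link as the root hash are assumptions made for the example, and the JSON canonicalization is a simplified stand-in for RFC 8785.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the first link


def compute_content_hash(content) -> str:
    # Simplified canonical form: sorted keys, compact separators, UTF-8.
    canonical = json.dumps(content, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_hash_chain(events: list) -> list:
    """Each link hashes the previous link's hash together with its own content hash."""
    chain, previous = [], GENESIS
    for index, event in enumerate(events):
        content_hash = compute_content_hash(event)
        chain_hash = hashlib.sha256(f"{previous}:{content_hash}".encode("utf-8")).hexdigest()
        chain.append({
            "index": index,
            "content_hash": content_hash,
            "previous_hash": previous,
            "chain_hash": chain_hash,
        })
        previous = chain_hash
    return chain


def compute_root_hash(chain: list) -> str:
    # Assumption: the root is the final link, which depends on every earlier one.
    return chain[-1]["chain_hash"] if chain else GENESIS


events = [
    {"type": "diff", "sha": "abc123"},
    {"type": "council_vote", "model": "reviewer-1", "verdict": "approve"},
    {"type": "decision", "result": "auto-approve"},
]
original = build_hash_chain(events)

# Flip one council vote in the middle of the chain.
tampered_events = [dict(event) for event in events]
tampered_events[1]["verdict"] = "reject"
tampered = build_hash_chain(tampered_events)

print(compute_root_hash(original) == compute_root_hash(tampered))  # False
```

The first link is identical in both chains, but every link from the modified event onward differs, including the final decision whose own content never changed. That is what "breaking everything downstream" means in practice.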

The Moment It Clicked

I was running CodeGuard on its own repository. Dogfooding. A PR came in that refactored some error handling — routine stuff, maybe L1. The Action ran, council reviewed, auto-approved, bundle generated.

Then I opened the bundle.

It contained the exact diff at the moment of review. The policy rules that fired. Three independent AI assessments with specific line-number citations. The vote tally. The auto-approval rationale. A hash chain proving none of it was modified after the fact.

I had built a review tool. But the artifact it produced was audit evidence. Not as a separate step. Not as an afterthought. The evidence was a natural byproduct of the review process itself.

This is the insight that shaped everything after: governance that produces evidence as a side effect of useful work is governance that actually gets adopted. Nobody installs a tool to generate compliance artifacts. People install tools that make their PR queue manageable. If that tool also produces cryptographically verifiable evidence? That’s the trick.

Offline Verification

Here is the principle I will not compromise on: anyone can verify a bundle without trusting my system.

pip install guardspine-verify

guardspine-verify bundle.zip

The second command checks every hash in the chain, verifies the root hash, and confirms that the bundle structure matches the guardspine-spec Evidence Bundle Specification v0.2.0. It runs entirely offline. No API calls. No license check. No phone-home.

If I disappear tomorrow, the bundles are still verifiable. If you stop paying for GuardSpine, the evidence you already generated is still valid. If your auditor wants to verify independently without installing anything I published, they can reimplement the verification in 30 lines of Python, because the spec is public.
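
To give a sense of how small an independent verifier can be, here is a sketch using only the Python standard library. It does not follow the real Evidence Bundle Specification: the `manifest.json` file name, the `entries`, `content_hash`, `chain_hash`, and `root_hash` fields, the genesis value, and the link format are all hypothetical stand-ins. A real reimplementation would take those details from the public spec. `build_bundle` exists only so the example has something to verify.

```python
import hashlib
import io
import json
import zipfile

GENESIS = "0" * 64  # hypothetical starting value for the chain


def _sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _canon(value) -> bytes:
    # Simplified stand-in for RFC 8785 canonical JSON.
    return json.dumps(value, sort_keys=True, separators=(",", ":"), ensure_ascii=False).encode("utf-8")


def verify_bundle(source) -> tuple[bool, str]:
    """Recompute every hash in a HYPOTHETICAL bundle layout; source is a path or file object."""
    with zipfile.ZipFile(source) as bundle:
        manifest = json.loads(bundle.read("manifest.json"))
    previous = GENESIS
    for index, entry in enumerate(manifest["entries"]):
        if _sha256(_canon(entry["content"])) != entry["content_hash"]:
            return False, f"content hash mismatch at entry {index}"
        expected = _sha256(f"{previous}:{entry['content_hash']}".encode("utf-8"))
        if expected != entry["chain_hash"]:
            return False, f"chain broken at entry {index}"
        previous = expected
    if previous != manifest["root_hash"]:
        return False, "root hash mismatch"
    return True, "ok"


def build_bundle(contents: list) -> bytes:
    """Demo helper: seal contents into the hypothetical layout checked above."""
    entries, previous = [], GENESIS
    for content in contents:
        content_hash = _sha256(_canon(content))
        previous = _sha256(f"{previous}:{content_hash}".encode("utf-8"))
        entries.append({"content": content, "content_hash": content_hash, "chain_hash": previous})
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as bundle:
        bundle.writestr("manifest.json", json.dumps({"entries": entries, "root_hash": previous}))
    return buffer.getvalue()


sealed = build_bundle([
    {"type": "diff", "sha": "abc123"},
    {"type": "decision", "result": "auto-approve"},
])
print(verify_bundle(io.BytesIO(sealed)))  # (True, 'ok')
```

Nothing in the verifier touches the network or depends on a GuardSpine package. It needs only SHA-256, JSON, and a zip reader, which every mainstream language ships.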

This is the opposite of vendor lock-in. The evidence belongs to you because the math belongs to everyone.

Why a GitHub Action First

I could have built a SaaS dashboard. I could have built a VS Code extension. I could have built an API-first platform with a React frontend and a pricing page.

I built a GitHub Action because that’s where the work happens.

Developers don’t context-switch to a governance dashboard. They open PRs. If the governance layer lives inside the PR workflow, it runs every time without anyone remembering to invoke it. If it lives anywhere else, adoption drops to zero within two sprints.

The Action also solves the procurement problem. A GitHub Action doesn’t require a new vendor approval. It doesn’t need SSO integration. It doesn’t need a security review of a new SaaS dependency. It runs in your existing GitHub Actions runner, with your existing secrets, inside your existing CI/CD pipeline. An engineering manager can install it on a Friday afternoon and have evidence bundles generating by Monday morning.

737 tests pass on every commit. The Action handles edge cases — binary files, empty diffs, massive PRs that need chunking, repos with custom branch protection rules. I didn’t ship it until it was boring. The best infrastructure is the kind you forget is running.

What This Proves

CodeGuard is one guard lane. The first one. It answers a specific question: “Can you prove that this code change was reviewed, risk-classified, and approved through a documented process?”

The answer is now yes, with cryptographic proof, for any repo that installs a single workflow file.

But code review is one decision point in a much larger surface. Infrastructure changes, model deployments, data pipeline modifications, access control updates — they all have the same evidence gap. The same pattern applies: intercept the decision, classify the risk, run the council, seal the evidence, route to the right approver.

The guard lane architecture means each of those surfaces gets its own lane with its own policies, but shares the same evidence format, the same hash chain, the same verification tooling. One guardspine-verify command checks any bundle from any lane.

That’s the design. CodeGuard was the proof that it works.

The Numbers

Where codeguard-action stands today:

- 737 tests across the test suite

- L0-L4 risk tiers with customizable policy rules

- Sub-90-second review cycle for typical PRs

- Zero vendor dependencies for evidence verification

- One workflow file to install

The evidence bundles have already surfaced in conversations with compliance teams who previously answered auditor questions with screenshots of green checkmarks. The difference between “someone clicked approve” and “here is a cryptographically sealed record of what was reviewed, by whom, with what risk classification” is the difference between a finding and a clean audit.

Install CodeGuard on any repo in under 10 minutes. codeguard-action on GitHub: https://github.com/DNYoussef/codeguard-action

Want to see how evidence bundles work on your actual codebase? Book a free 30-minute walkthrough.