Anti-Hollowing: Why AI Governance Must Preserve Human Judgment

Your team shipped 3x more code this quarter thanks to AI. Can they still evaluate whether the code is correct? If you’re not measuring that, you’re not governing — you’re coasting.

I watch this pattern play out in every team that adopts AI coding tools without governance. Velocity goes up. Confidence goes up. The ability to evaluate what they’re shipping goes down. Slowly. Invisibly. Until something breaks and nobody on the team can diagnose why.

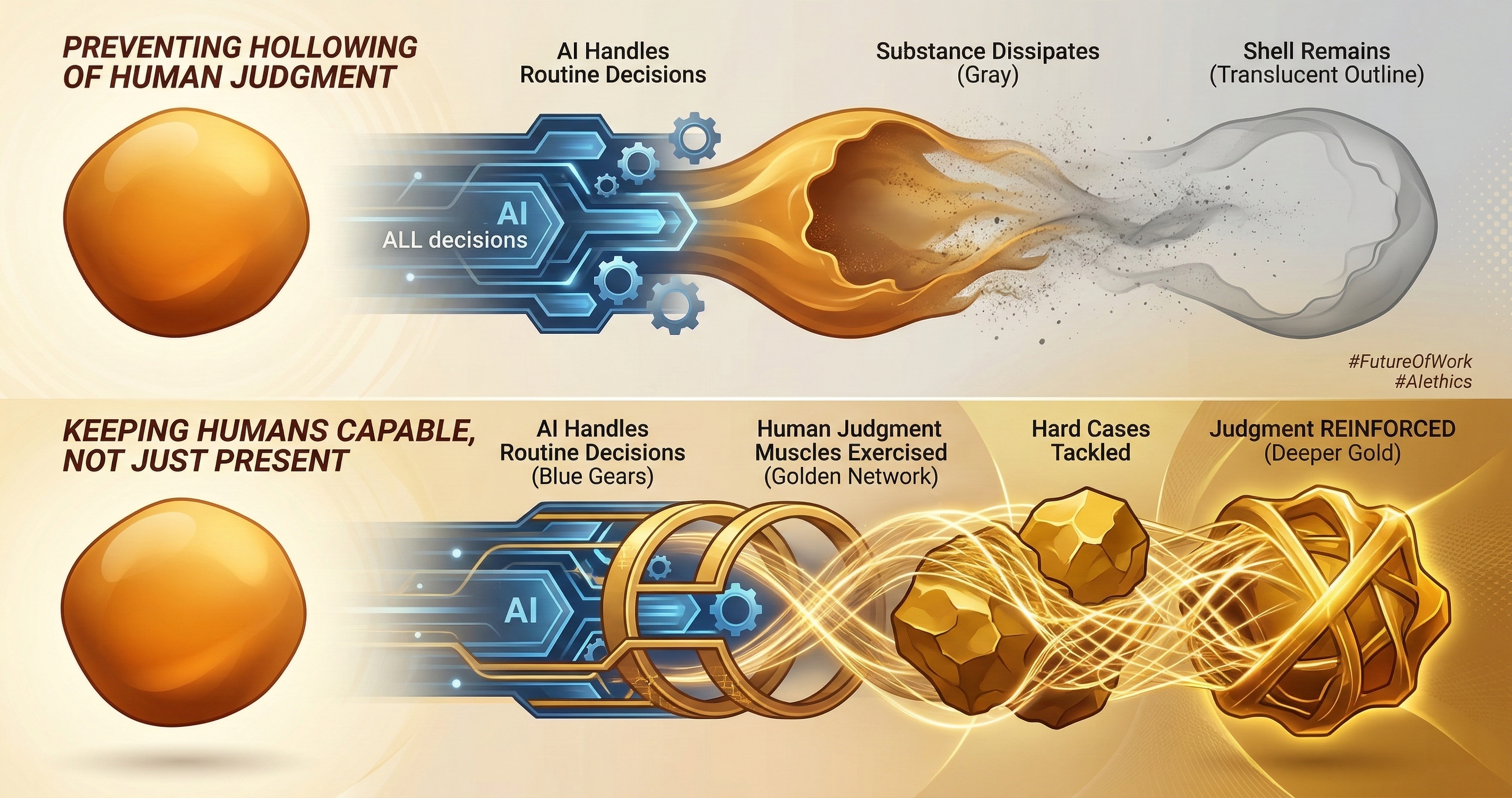

That’s hollowing.

What Hollowing Looks Like

A junior developer joins the team. They use AI for everything. Code generation, debugging, test writing, documentation. Their output is high. Their PR descriptions are clear. Their code passes review. By every metric, they’re productive.

Six months later, the AI service goes down for four hours. The developer can’t write a database query without assistance. They can’t trace a stack trace to root cause. They can’t evaluate whether a proposed fix is correct because they never built the mental model of how the system works.

This isn’t the developer’s fault. It’s a system design failure. The organization optimized for output and forgot to invest in judgment.

Hollowing isn’t new. The same pattern is commonly described for calculators and mental arithmetic, for GPS and spatial navigation, and for spell-checkers and spelling. When a tool takes over a cognitive task, the human skill for that task tends to atrophy.

With AI coding tools, the cognitive task that atrophies is the most critical one: the ability to evaluate whether code is correct.

Output Without Judgment

AI creates output. It does not create judgment. These are different things.

Output is the code, the document, the analysis. Judgment is the ability to evaluate whether the output is right, relevant, and safe. A model can generate a database migration that runs without errors but introduces a subtle data integrity issue. The output is functional. The judgment to catch the integrity issue requires understanding the data model, the business rules, and the downstream consumers.

When a developer writes code manually, they build judgment as a side effect. They encounter errors, trace them, fix them, and internalize the patterns. The struggling is the learning. Remove the struggling and you remove the learning.

AI removes the struggling. That’s the value proposition — you get output without the pain of producing it. But the pain was producing something else too: the judgment to evaluate output.

The governance question is: how do you get AI-speed output while preserving human-speed judgment?

The 10-15% Reinvestment Rule

I propose a simple rule: reinvest 10-15% of the time AI saves into judgment-building activities. If AI saves your team 20 hours per week, spend 2-3 of those hours on activities that build and maintain the ability to evaluate AI output.

This isn’t a suggestion. It’s a governance requirement. Like allocating budget for security or maintenance, allocating time for judgment preservation is a cost of operating AI-assisted systems safely.
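To make the arithmetic concrete, here is a minimal Python sketch of the rule. The function name `reinvestment_hours` and the rounding are illustrative assumptions; only the 10-15% band comes from the rule itself.

```python
def reinvestment_hours(hours_saved_per_week, low=0.10, high=0.15):
    """Return (min, max) weekly hours to reinvest in judgment-building.

    low/high encode the 10-15% band; the function name is hypothetical.
    """
    if hours_saved_per_week < 0:
        raise ValueError("hours_saved_per_week must be non-negative")
    return (round(hours_saved_per_week * low, 2),
            round(hours_saved_per_week * high, 2))


if __name__ == "__main__":
    min_hours, max_hours = reinvestment_hours(20)
    # For 20 saved hours this prints: Reinvest 2-3 hours per week
    print(f"Reinvest {min_hours:g}-{max_hours:g} hours per week")
```

The hard part is not the multiplication but an honest estimate of hours saved. If that input is inflated or guessed, the budget is too.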

Four categories of reinvestment:

Training Judgment

Structured exercises where developers evaluate AI output without implementing it. The AI generates a solution. The developer’s job is to find what’s wrong with it. Not to fix it. Not to ship it. To evaluate it.

This inverts the normal workflow. Instead of “AI generates, human ships,” it’s “AI generates, human evaluates.” The evaluation builds the mental models that atrophy when you only ship.

Concrete exercise: take a real PR from your codebase. Have the AI generate an alternative implementation. Have the developer evaluate both and explain which is better and why. The explanation is the judgment. If the developer can’t articulate why one is better, they can’t evaluate AI output reliably.

Cross-Training Redundancy

No single person should be the only one who understands a system. AI amplifies the single-point-of-failure risk because it lets individuals work on systems they don’t fully understand.

Cross-training means rotating developers through different systems. Not permanently. Periodically. One sprint every quarter in a different part of the codebase. The developer builds enough understanding to evaluate AI output for that system.

Without cross-training, you end up with a bus factor of 1 on critical systems, where the “understanding” is really just knowing which prompts produce working code. That’s not understanding. That’s prompt memorization.
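One way to see where rotations are most needed is a simple skills matrix. The sketch below is a hypothetical example in Python: the system names, people, and the `understaffed_systems` helper are all made up for illustration, and the threshold of three people per system is an assumption borrowed from the Cross-System Competency target later in this piece.

```python
CRITICAL_TARGET = 3  # assumed target: people able to diagnose and fix a system unaided


def understaffed_systems(skills, target=CRITICAL_TARGET):
    """Map each system with fewer than `target` capable people to its headcount.

    `skills` maps a system name to the set of people who can independently
    diagnose and fix issues there. How you verify that is up to you.
    """
    return {system: len(people)
            for system, people in skills.items()
            if len(people) < target}


if __name__ == "__main__":
    # Hypothetical team data, for illustration only.
    skills = {
        "auth": {"dana"},
        "billing": {"dana", "lee", "sam"},
        "search": {"lee", "sam"},
    }
    print(understaffed_systems(skills))  # {'auth': 1, 'search': 2}
```

Systems that show up in this output are the first candidates for the next rotation.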

Recovery Capability

Can your team function if AI tools are unavailable for a week? Not “can they be productive” but “can they ship critical fixes and maintain system uptime?”

Recovery capability means maintaining the baseline skills to operate without AI. Run exercises, quarterly or at least annually, in which the team works without AI tools for a defined period. Not because AI tools are bad, but because dependency on any single tool is a risk.

Fire drills test your ability to respond to fires. AI-off drills test your ability to function when AI is unavailable. Both are uncomfortable. Both reveal gaps you’d rather not have discovered during a real emergency.

Sustainability Metrics

Measure judgment, not just output. Track metrics that indicate whether your team’s ability to evaluate code is improving or declining.

Review accuracy. When developers review AI-generated code, how often do they catch bugs that the AI introduced? If the catch rate is declining, judgment is eroding.

Debug time. When production issues occur, how long does it take to diagnose the root cause? If debug time is increasing despite AI assistance, diagnostic skills are atrophying.

Explanation quality. Can developers explain their system’s architecture and design decisions? If explanations are becoming shallower (“the AI suggested it and it works”), understanding is hollowing.

Manual capability. When AI tools are unavailable, how much does productivity drop? Some drop is expected. A 90% drop indicates dangerous dependency.
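Whether a metric is “improving or declining” can be checked mechanically once you record it each quarter. A minimal sketch in Python, assuming a higher-is-better metric such as review catch rate; the function name `judgment_trend` and the 0.05 noise band are assumptions, not established thresholds.

```python
def judgment_trend(history, tolerance=0.05):
    """Classify a metric series (oldest first) as improving, steady, or declining.

    Compares the latest value to the first one; `tolerance` is an assumed
    noise band. Intended for metrics where higher is better.
    """
    if len(history) < 2:
        raise ValueError("need at least two measurements")
    change = history[-1] - history[0]
    if change > tolerance:
        return "improving"
    if change < -tolerance:
        return "declining"
    return "holding steady"


if __name__ == "__main__":
    # Hypothetical quarterly catch rates, oldest first.
    print(judgment_trend([0.72, 0.70, 0.61, 0.55]))  # declining
```

For lower-is-better metrics such as debug time, negate the values before passing them in. Comparing only the first and last points is deliberately crude; with more than a handful of measurements, a fitted slope would be less sensitive to one bad quarter.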

GuardSpine Counter-KPIs

Traditional KPIs measure output: lines of code, PRs merged, features shipped, velocity points. These metrics all go up with AI adoption. They all look good in quarterly reviews. They all miss hollowing.

Counter-KPIs measure the things that output metrics don’t capture:

Judgment Ratio. The percentage of AI-generated code that a human can explain in detail. Target: 80%+. If developers are shipping code they can’t explain, the output is ungoverned regardless of how many reviews it passed.

Recovery Score. Performance during AI-off drills as a percentage of normal performance. Target: 40%+ (some drop is expected and acceptable). Between 30% and 40% is below target and worth watching. Below 30% indicates critical dependency.

Catch Rate. The percentage of intentionally seeded bugs in AI output that reviewers find. Run periodic exercises where known bugs are introduced. Measure whether the review process catches them. Target: 70%+.

Cross-System Competency. The number of team members who can independently diagnose and fix issues in each critical system. Target: 3+ per system. If only one person can debug the auth system, you have a bus factor problem.

Reinvestment Utilization. The percentage of AI-saved time actually spent on judgment-building activities. Target: 10-15%. If the team saves 20 hours and spends all 20 on more output, the hollowing accelerates.

These counter-KPIs go into the evidence bundle. When a governance audit asks “how do you ensure your team can evaluate AI output?” the counter-KPI trend is the answer.
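The targets above are simple enough to check automatically each quarter. The following Python sketch is one possible encoding, not a GuardSpine API: the `CounterKpis` class, its field names, and the status labels are hypothetical, and treating 10% as the pass mark for reinvestment is an assumption based on the rule being a floor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CounterKpis:
    judgment_ratio: float             # share of AI-generated code a human can explain (0-1)
    recovery_score: float             # AI-off drill performance / normal performance (0-1)
    catch_rate: float                 # seeded bugs found / seeded bugs planted (0-1)
    min_cross_system_competency: int  # fewest capable people on any critical system
    reinvestment_utilization: float   # share of AI-saved time spent on judgment-building (0-1)


def evaluate(k):
    """Compare each counter-KPI to the targets listed in the text."""
    def check(ok):
        return "ok" if ok else "below target"

    # Recovery has two thresholds: below 30% is critical, 40%+ meets target.
    if k.recovery_score < 0.30:
        recovery = "critical"
    else:
        recovery = check(k.recovery_score >= 0.40)

    return {
        "judgment_ratio": check(k.judgment_ratio >= 0.80),
        "recovery_score": recovery,
        "catch_rate": check(k.catch_rate >= 0.70),
        "cross_system_competency": check(k.min_cross_system_competency >= 3),
        "reinvestment_utilization": check(k.reinvestment_utilization >= 0.10),
    }


if __name__ == "__main__":
    # Hypothetical quarter: strong explanations, weak recovery, no reinvestment.
    quarter = CounterKpis(0.85, 0.25, 0.70, 1, 0.0)
    for name, status in evaluate(quarter).items():
        print(f"{name}: {status}")
```

A single quarter’s status matters less than the trend, so in practice you would store each result and compare across quarters.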

“AI Proposes, Humans Dispose”

The phrase captures the correct relationship. AI generates options. Humans evaluate and decide. The deciding is the governing.

When this relationship inverts — when humans rubber-stamp AI output because they can’t evaluate it — you have a governance failure. Not because the AI is wrong (it’s right most of the time), but because the ability to detect when it’s wrong has atrophied.

Governance isn’t about preventing AI from making mistakes. AI will make mistakes. Governance is about ensuring that humans can detect those mistakes before they cause damage.

The detection capability is the judgment. If you’re not investing in judgment, you’re not governing. You’re hoping that the AI is right. Hope is not a governance strategy.

The Compounding Risk

Hollowing compounds. Each month of ungoverned AI usage erodes judgment slightly. The erosion is invisible because output metrics keep improving. By the time someone notices, the judgment gap is significant. The trajectory below is illustrative, not measured data, but the shape is what matters.

Year one: AI handles 30% of code. Developers still understand most of what they ship. Review accuracy is high. Manual capability is 70% of baseline.

Year two: AI handles 60% of code. Developers understand the patterns but not the details. Review accuracy drops to 50%. Manual capability is 40% of baseline.

Year three: AI handles 80% of code. Developers can prompt effectively but can’t evaluate output independently. Review accuracy drops to 25%. Manual capability is 15% of baseline. The team is dependent in a way that looks productive but is structurally fragile.

This is the compounding. Each year of under-investment in judgment makes the next year’s gap harder to close. The developers who could have mentored the team have also lost skills. The institutional knowledge is not merely deprecated. It is gone, erased through disuse.

The 10-15% reinvestment rule prevents this compounding. It’s not a perfect solution. It’s a floor. The minimum investment required to maintain the ability to evaluate what you ship.

Implementing Anti-Hollowing

Start with measurement. Run a judgment baseline assessment. Have each developer evaluate a set of AI-generated code changes (with known issues seeded). Measure their catch rate. This is your starting point.
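Scoring the baseline can be as simple as set arithmetic. A minimal Python sketch, assuming each seeded issue has an ID and each developer submits the IDs they flagged; the function names, issue IDs, and developer names are hypothetical.

```python
def catch_rate(seeded, flagged):
    """Fraction of seeded issues a reviewer found; unrelated flags are ignored."""
    seeded = set(seeded)
    if not seeded:
        raise ValueError("seed at least one known issue")
    return len(seeded & set(flagged)) / len(seeded)


def baseline(seeded, reviews):
    """Return (per-developer catch rates, team average)."""
    per_dev = {dev: catch_rate(seeded, flagged) for dev, flagged in reviews.items()}
    team = sum(per_dev.values()) / len(per_dev) if per_dev else 0.0
    return per_dev, team


if __name__ == "__main__":
    # Hypothetical exercise: four seeded issues, two reviewers.
    seeded = {"S1", "S2", "S3", "S4"}
    reviews = {
        "ana": {"S1", "S2", "S3"},
        "raj": {"S2", "X9"},  # X9 is a flag that matches no seeded issue
    }
    per_dev, team = baseline(seeded, reviews)
    print(per_dev, team)
```

This sketch ignores flags that match no seeded issue. Some of those will be false positives and some will be real bugs nobody planted, so they are worth reviewing by hand rather than scoring.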

Establish the counter-KPIs. Add them to your quarterly review alongside the output metrics. When a manager reports “velocity up 3x,” the counter-KPIs provide the other half of the story: “judgment holding steady” or “judgment declining.”

Allocate the reinvestment time. Put it on the calendar. Not as optional learning time that gets consumed by feature work. As a recurring, non-negotiable block sized by the 10-15% rule. For a team saving 20 hours per week, that means two hours per week, minimum.

Build the exercises. AI output evaluation sessions. Cross-training rotations. Quarterly AI-off drills. These aren’t busywork. They’re the maintenance cost of operating AI-assisted systems responsibly.

Record the evidence. Every reinvestment activity produces evidence that goes into the governance record. When an auditor asks “how do you ensure your team can evaluate AI output?” the evidence is there. Not a policy document. Not a training slide. Measured results from structured exercises.
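What a piece of evidence looks like will depend on your governance tooling. As a hedged illustration only, here is a Python sketch that writes one exercise result as a JSON line suitable for an append-only log. The `EvidenceRecord` fields and the sample values are assumptions, not a prescribed schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class EvidenceRecord:
    date: str          # ISO date of the exercise
    activity: str      # e.g. "seeded-bug review" or "ai-off drill"
    participants: int  # number of developers who took part
    metric: str        # which counter-KPI the exercise measured
    value: float       # measured result, not a self-assessment


def to_json_line(record):
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    record = EvidenceRecord("2025-01-15", "seeded-bug review", 6, "catch_rate", 0.62)
    print(to_json_line(record))
```

One line per exercise keeps the record easy to append to and easy to hand to an auditor, and the same data feeds the quarterly counter-KPI trend.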

The Hard Truth

Your team is probably already hollowing. If you adopted AI coding tools more than six months ago without anti-hollowing measures, the erosion has started. The developers who joined after AI adoption may never have built the judgment skills that senior developers take for granted.

This isn’t a reason to stop using AI. The productivity gains are real. The competitive advantage is real. But the gains are only sustainable if you invest in the ability to evaluate what you’re producing.

AI makes code cheap. It doesn’t make judgment cheap. Judgment still comes from experience, from struggling with problems, from building mental models through repeated exposure. The 10-15% reinvestment ensures that this judgment-building continues even as AI handles more of the output.

Your competitors will ship faster with AI. Some of them will hollow out their teams in the process. When the first major incident hits — and it will — the teams with judgment will recover. The teams without it will founder.

Anti-hollowing isn’t a nice-to-have. It’s the difference between using AI as a tool and being used by it.

Worried about hollowing on your team? Book a call and I’ll walk you through the judgment baseline assessment. Thirty minutes to know where you stand.