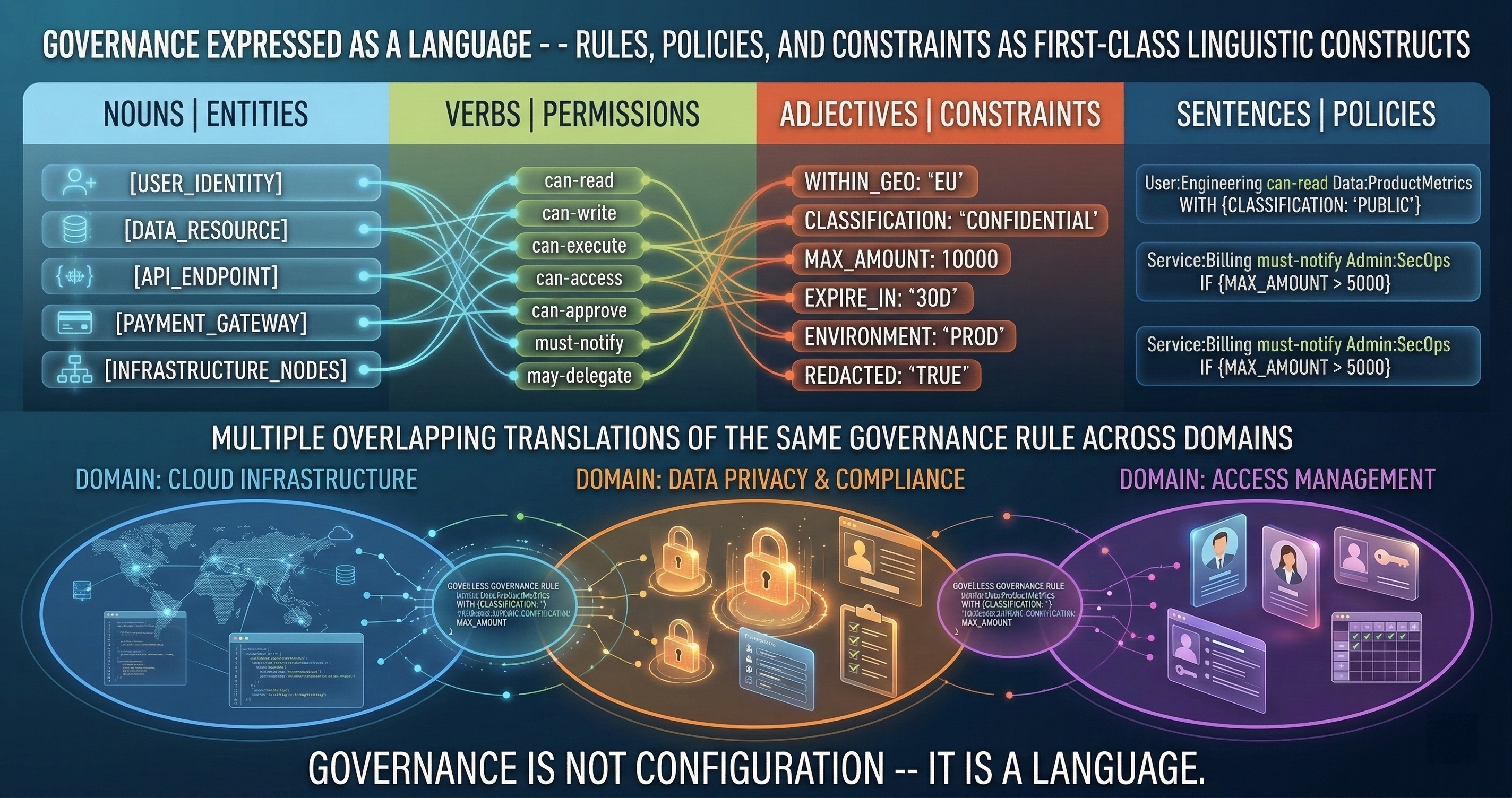

Nomotic Mode: Governance as a Language

Chris Hood spent years writing the philosophy. I spent years building the infrastructure. In April 2026, the book launches. Here's how the theory becomes code.

Most governance systems start with rules and work backward toward theory. We went the other direction. The theory came first — a formal framework for how authority, delegation, and accountability should work in AI-augmented systems. The infrastructure implements that theory. Not approximately. Precisely.

The Theory-Infrastructure Bridge

Chris Hood’s work asks a fundamental question: when an AI system acts on behalf of a human, what governance structure ensures the action is authorized, bounded, and accountable?

This isn’t a new question for human organizations. Every corporation, military unit, and government agency has answered it with hierarchies, delegation frameworks, and accountability structures. But those answers assume the agent following orders is human — capable of judgment, subject to social pressure, and slow enough for oversight.

AI agents are none of those things. They execute instantly, lack judgment (they have pattern matching, which is different), and don’t respond to social pressure. The governance structures that work for human agents fail when the agent is a model running in a context window.

Nomotic theory provides the formal framework for governing non-human agents. GuardSpine provides the runtime that enforces it.

What “Nomotic” Means

The term comes from the Greek “nomos” — law, custom, convention. Nomotic governance is governance that operates through formal rules rather than informal norms.

In human organizations, informal norms do most of the work. “Don’t ship on Friday” isn’t written in any policy document, but most teams follow it. “Check with Sarah before changing the auth middleware” is tribal knowledge, not a rule.

AI agents can’t follow informal norms. They don’t know who Sarah is. They don’t know that Friday deployments are risky. They follow whatever is in the system prompt, and even that degrades as the context window fills.

Nomotic governance encodes informal norms as formal rules. Not as suggestions in a prompt. As structural constraints that the runtime enforces.

Nomotic Mode v1: The YAML Specification

Nomotic Mode is a YAML-based encoding of governance semantics. It defines four categories of governance rules.

Authority Boundaries

Who is authorized to do what, and under which conditions:

```yaml
nomotic:
  authority:
    - principal: "senior-engineer"
      scope:
        - action: "approve_merge"
          conditions:
            risk_tier: ["L0", "L1", "L2"]
        - action: "approve_merge"
          conditions:
            risk_tier: ["L3", "L4"]
            requires: "second_approver"
    - principal: "ai-reviewer"
      scope:
        - action: "review"
          conditions:
            risk_tier: ["L0", "L1", "L2", "L3", "L4"]
        - action: "approve_merge"
          conditions:
            risk_tier: ["L0", "L1"]
            requires: "council_consensus"
```

The AI reviewer can review anything but can only approve L0-L1 changes, and only when the council reaches consensus. A senior engineer can approve up to L2 alone but needs a second approver for L3-L4. These aren’t guidelines. They’re runtime constraints.
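To make "runtime constraints" concrete, here is a minimal Python sketch of how an evaluator might check the `authority` rules above. It assumes the YAML has already been parsed into plain dicts (for example with a YAML library) and that an earlier step reports which preconditions, such as `second_approver` or `council_consensus`, are satisfied. `is_authorized` and the `satisfied` parameter are hypothetical names, not GuardSpine's API.

```python
# Hypothetical parsed form of the `authority` block above.
AUTHORITY = [
    {"principal": "senior-engineer", "scope": [
        {"action": "approve_merge", "conditions": {"risk_tier": ["L0", "L1", "L2"]}},
        {"action": "approve_merge", "conditions": {"risk_tier": ["L3", "L4"],
                                                   "requires": "second_approver"}},
    ]},
    {"principal": "ai-reviewer", "scope": [
        {"action": "review", "conditions": {"risk_tier": ["L0", "L1", "L2", "L3", "L4"]}},
        {"action": "approve_merge", "conditions": {"risk_tier": ["L0", "L1"],
                                                   "requires": "council_consensus"}},
    ]},
]


def is_authorized(principal: str, action: str, risk_tier: str,
                  satisfied: frozenset = frozenset()) -> bool:
    """True only if some scope entry grants `action` at `risk_tier` and its
    `requires` precondition (if any) is in `satisfied`. Default is deny."""
    for grant in AUTHORITY:
        if grant["principal"] != principal:
            continue
        for entry in grant["scope"]:
            cond = entry["conditions"]
            if entry["action"] != action or risk_tier not in cond["risk_tier"]:
                continue
            required = cond.get("requires")
            if required is None or required in satisfied:
                return True
    return False  # nothing explicitly granted this action at this tier
```

Default-deny is the key design choice: an AI reviewer asking to approve an L3 change finds no matching grant and is refused, even if the council has reached consensus.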

Conditional Rules

Rules that apply based on context:

```yaml
nomotic:
  conditionals:
    - when:
        file_pattern: "src/auth/**"
        change_type: "modify"
      then:
        risk_tier_floor: "L3"
        require_council: true
        notify: ["security-team"]
    - when:
        time_of_day: "after_17:00"
        day_of_week: ["friday"]
      then:
        block_merge: true
        message: "No merges after 5 PM on Friday. Queued for Monday review."
```

The first rule says: any modification to auth code is automatically L3 or higher, requires council review, and notifies the security team. The second rule encodes the “no Friday deploys” norm as a structural constraint.

These conditionals run at merge time. The AI doesn’t need to remember them. The runtime checks them. If the AI tries to approve a Friday evening merge, the conditional blocks it. The AI’s opinion doesn’t matter. The rule does.
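A sketch of merge-time evaluation for the two conditionals above, under stated assumptions: `after_17:00` is read as "at or after 17:00" in the runtime's local time, every key in a `when` clause must match, and when several rules fire the strictest outcome wins. The function names and result shape are illustrative only. Note that `fnmatchcase` lets `*` match `/`, so `src/auth/**` covers nested paths here; a production runtime might prefer gitignore-style glob semantics.

```python
from datetime import datetime
from fnmatch import fnmatchcase

# Hypothetical parsed form of the `conditionals` block above.
CONDITIONALS = [
    {"when": {"file_pattern": "src/auth/**", "change_type": "modify"},
     "then": {"risk_tier_floor": "L3", "require_council": True,
              "notify": ["security-team"]}},
    {"when": {"time_of_day": "after_17:00", "day_of_week": ["friday"]},
     "then": {"block_merge": True,
              "message": "No merges after 5 PM on Friday. Queued for Monday review."}},
]
TIERS = ["L0", "L1", "L2", "L3", "L4"]
DAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]


def matches(when: dict, change: dict, now: datetime) -> bool:
    """Every key present in `when` must hold (logical AND)."""
    if "file_pattern" in when and not any(
            fnmatchcase(path, when["file_pattern"]) for path in change["files"]):
        return False
    if "change_type" in when and change["change_type"] != when["change_type"]:
        return False
    if "time_of_day" in when:
        # Assumption: "after_HH:MM" means at or after HH:MM, local time.
        hh, mm = map(int, when["time_of_day"].split("_", 1)[1].split(":"))
        if (now.hour, now.minute) < (hh, mm):
            return False
    # weekday() avoids locale-dependent day names from strftime.
    if "day_of_week" in when and DAYS[now.weekday()] not in when["day_of_week"]:
        return False
    return True


def evaluate(change: dict, base_tier: str, now: datetime) -> dict:
    """Apply every matching conditional; the strictest outcome wins."""
    result = {"risk_tier": base_tier, "require_council": False,
              "notify": [], "blocked": False, "messages": []}
    for rule in CONDITIONALS:
        if not matches(rule["when"], change, now):
            continue
        then = rule["then"]
        floor = then.get("risk_tier_floor")
        if floor and TIERS.index(floor) > TIERS.index(result["risk_tier"]):
            result["risk_tier"] = floor  # a floor can raise the tier, never lower it
        result["require_council"] = result["require_council"] or then.get("require_council", False)
        result["notify"].extend(then.get("notify", []))
        if then.get("block_merge"):
            result["blocked"] = True
            result["messages"].append(then.get("message", "Merge blocked."))
    return result
```

For a change to `src/auth/middleware.ts` evaluated on Friday, 27 March 2026 at 18:00, both rules fire: the tier is raised to L3, council review is required, the security team is notified, and the merge is blocked.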

Stop-the-Line Rules

Absolute boundaries that cannot be overridden, or that only one named role can override:

```yaml
nomotic:
  stop_the_line:
    - condition: "secrets_detected"
      action: "block"
      message: "Hardcoded secrets detected. Remove before merge."
      override: false
    - condition: "risk_tier_l4_no_human"
      action: "block"
      message: "L4 changes require human approval."
      override: false
    - condition: "council_quorum_not_met"
      action: "block"
      message: "Council quorum not met. Cannot proceed with review."
      override: "security-lead"
```

Stop-the-line rules are the strictest rules in the system. Secrets in code? Blocked. No human approval on an L4 change? Blocked. The first two rules have no “well, but this one time…” escape hatch: `override: false` means nobody can override them. The third is only slightly softer: `override: "security-lead"` means exactly one role can override it, and the override is recorded in the evidence bundle.

This is where nomotic governance differs most from policy documents. A policy says “don’t commit secrets.” A stop-the-line rule prevents it structurally. The difference between “should” and “cannot.”
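The `override` field carries most of the meaning in stop-the-line rules. This sketch assumes upstream checks (secret scanning, tier classification, quorum counting) have already produced the set of triggered condition names, and that the caller passes the role requesting an override, if any. `check_stop_the_line` and `Decision` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical parsed form of the `stop_the_line` block above (every action is "block").
STOP_THE_LINE = [
    {"condition": "secrets_detected", "action": "block",
     "message": "Hardcoded secrets detected. Remove before merge.", "override": False},
    {"condition": "risk_tier_l4_no_human", "action": "block",
     "message": "L4 changes require human approval.", "override": False},
    {"condition": "council_quorum_not_met", "action": "block",
     "message": "Council quorum not met. Cannot proceed with review.",
     "override": "security-lead"},
]


@dataclass
class Decision:
    blocked: bool = False
    messages: List[str] = field(default_factory=list)
    overrides: List[dict] = field(default_factory=list)  # destined for the evidence bundle


def check_stop_the_line(triggered: Set[str], override_by: Optional[str] = None) -> Decision:
    """Block on every triggered rule unless its named override role is the requester."""
    decision = Decision()
    for rule in STOP_THE_LINE:
        if rule["condition"] not in triggered:
            continue
        allowed = rule["override"]
        # override: false -> never bypassable; override: "<role>" -> only that role.
        if isinstance(allowed, str) and override_by == allowed:
            decision.overrides.append({"condition": rule["condition"], "by": override_by})
            continue
        decision.blocked = True
        decision.messages.append(rule["message"])
    return decision
```

A security lead can clear `council_quorum_not_met`, and that override lands in `Decision.overrides` for the evidence bundle, but the same role cannot clear `secrets_detected`.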

Governed Adaptation

Rules about how the rules themselves can change:

```yaml
nomotic:
  adaptation:
    - rule_category: "authority_boundaries"
      change_requires:
        approval: "governance-committee"
        council_review: true
        evidence_bundle: true
        cooling_period_hours: 72
    - rule_category: "stop_the_line"
      change_requires:
        approval: "cto"
        second_approval: "security-lead"
        evidence_bundle: true
        cooling_period_hours: 168
```

Rules about rules. Changing an authority boundary requires governance committee approval, council review, and a 72-hour cooling period. Changing a stop-the-line rule is even harder: CTO approval, security lead second approval, and a week-long cooling period.

This prevents governance erosion — the slow process where rules get relaxed because someone found them inconvenient. The inconvenience is intentional. The friction is the point.
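Governed adaptation is itself a check the runtime can perform before a rule change takes effect. A minimal sketch, assuming the approvals collected so far arrive as a set of role names and the cooling period is counted from the moment the change was proposed; `unmet_requirements` is a hypothetical helper. Returning the list of unmet requirements, rather than a bare boolean, tells the proposer exactly what is still missing.

```python
from datetime import datetime, timedelta
from typing import List, Set

# Hypothetical parsed form of the `adaptation` block above, keyed by rule_category.
ADAPTATION = {
    "authority_boundaries": {"approval": "governance-committee", "council_review": True,
                             "evidence_bundle": True, "cooling_period_hours": 72},
    "stop_the_line": {"approval": "cto", "second_approval": "security-lead",
                      "evidence_bundle": True, "cooling_period_hours": 168},
}


def unmet_requirements(category: str, approvals: Set[str], council_reviewed: bool,
                       has_evidence_bundle: bool, proposed_at: datetime,
                       now: datetime) -> List[str]:
    """Return what still blocks a proposed rule change; an empty list means it may apply."""
    req = ADAPTATION.get(category)
    if req is None:
        return [f"no adaptation policy for {category!r}"]  # fail closed
    unmet = []
    for key in ("approval", "second_approval"):
        if key in req and req[key] not in approvals:
            unmet.append(f"missing {key}: {req[key]}")
    if req.get("council_review") and not council_reviewed:
        unmet.append("council review required")
    if req.get("evidence_bundle") and not has_evidence_bundle:
        unmet.append("evidence bundle required")
    ready_at = proposed_at + timedelta(hours=req["cooling_period_hours"])
    if now < ready_at:
        unmet.append(f"cooling period until {ready_at.isoformat()}")
    return unmet
```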

The Nomotic Interrupt

When a governance boundary is violated, the system fires a nomotic interrupt. This is distinct from a normal error. An error means something went wrong. A nomotic interrupt means something went right — the governance system caught a boundary violation.

The interrupt includes:

```yaml
interrupt:
  type: "authority_violation"
  principal: "ai-reviewer"
  attempted_action: "approve_merge"
  context:
    risk_tier: "L3"
    file: "src/auth/middleware.ts"
  rule_violated: "authority.ai-reviewer.approve_merge.L3"
  timestamp: "2026-03-24T14:30:00Z"
  resolution_options:
    - "escalate_to_senior_engineer"
    - "request_council_review"
    - "block_and_notify"
```

The interrupt tells you exactly what happened, which rule was violated, and what the available resolutions are. It doesn’t silently swallow the violation. It doesn’t log it and move on. It stops the process and demands resolution.

In an n8n workflow, nomotic interrupts route to handler nodes. In a CI/CD pipeline, they block the merge and notify the appropriate authority. In a standalone review, they produce a BLOCKED evidence bundle with the violation details.
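One way to model the interrupt in code is as a dedicated exception that carries the fields from the example above and forces the caller to pick a resolution. This is a sketch, not GuardSpine's implementation: `NomoticInterrupt`, `run_governed`, and the handlers are hypothetical, `type` is renamed `kind` to avoid shadowing Python's built-in, and the n8n and CI/CD routing is reduced to returning a string.

```python
from datetime import datetime, timezone
from typing import Callable, Dict, List


class NomoticInterrupt(Exception):
    """A governance boundary was hit. Kept as its own type so callers can handle it
    separately from ordinary errors instead of logging it and moving on."""

    def __init__(self, kind: str, principal: str, attempted_action: str, context: dict,
                 rule_violated: str, resolution_options: List[str]):
        super().__init__(f"{kind}: {principal} attempted {attempted_action} ({rule_violated})")
        self.kind = kind
        self.principal = principal
        self.attempted_action = attempted_action
        self.context = context
        self.rule_violated = rule_violated
        self.resolution_options = resolution_options
        self.timestamp = datetime.now(timezone.utc).isoformat()


# Hypothetical handlers, one per resolution option in the example interrupt.
HANDLERS: Dict[str, Callable[[NomoticInterrupt], str]] = {
    "escalate_to_senior_engineer": lambda i: f"escalated {i.rule_violated} to senior-engineer",
    "request_council_review": lambda i: f"council review requested for {i.context['file']}",
    "block_and_notify": lambda i: f"BLOCKED: {i.rule_violated}",
}


def run_governed(step: Callable[[], str],
                 choose: Callable[[NomoticInterrupt], str]) -> str:
    """Run `step`; on an interrupt, stop and route it to a resolution handler."""
    try:
        return step()
    except NomoticInterrupt as interrupt:
        option = choose(interrupt)
        if option not in interrupt.resolution_options or option not in HANDLERS:
            option = "block_and_notify"  # fail closed on an unlisted choice
        return HANDLERS[option](interrupt)


def ai_approves_auth_change() -> str:
    raise NomoticInterrupt(
        "authority_violation", "ai-reviewer", "approve_merge",
        {"risk_tier": "L3", "file": "src/auth/middleware.ts"},
        "authority.ai-reviewer.approve_merge.L3",
        ["escalate_to_senior_engineer", "request_council_review", "block_and_notify"])
```

An unlisted choice falls back to `block_and_notify`, so a confused handler fails closed rather than letting the action through.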

Why Philosophy Matters

Engineers often dismiss philosophical frameworks as impractical. I thought the same thing before working with Chris. Then I tried building governance without a formal framework and discovered that I was re-inventing concepts that philosophy had already named and defined.

Authority delegation. I built a permission system. Chris pointed out I was implementing a delegation chain of the kind political philosophy has been formalizing for centuries. The formal model handles edge cases my ad hoc permissions missed.

Accountability gaps. I built audit trails. Chris pointed out that an audit trail without a formal accountability structure is just a log file. Who is accountable when an AI acts on a human’s behalf? The formal model defines the accountability chain explicitly.

Governance decay. I noticed that teams relax rules over time. Chris pointed out that this is a well-studied phenomenon in institutional governance. The formal model includes governed adaptation specifically to prevent uncontrolled decay.

The book — launching in April 2026 — develops these concepts in full. The infrastructure — GuardSpine with Nomotic Mode — implements them.

Theory to Infrastructure Mapping

| Nomotic Concept | GuardSpine Implementation |
|---|---|
| Authority Boundary | Risk tier + role-based approval gates |
| Delegation Chain | AI reviewer -> council -> human approver evidence trail |
| Conditional Authority | YAML conditionals evaluated at runtime |
| Stop-the-Line | Hard blocks with no override (or named-role override) |
| Governed Adaptation | Meta-rules with cooling periods and multi-approval |
| Nomotic Interrupt | Workflow interrupt with resolution routing |
| Accountability Record | Evidence bundle with hash chain |

Every philosophical concept maps to a concrete implementation. The philosophy isn’t decoration. It’s the specification.
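The table pairs the accountability record with an evidence bundle protected by a hash chain. The sketch below shows the basic idea, assuming SHA-256 over canonical JSON, with each entry committing to the previous entry's hash. The entry layout and function names are illustrative, not GuardSpine's actual bundle format; the sample records walk the AI reviewer -> council -> human approver delegation chain from the table.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) so equal records hash equally.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(chain: List[dict], record: dict) -> None:
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(chain: List[dict]) -> bool:
    """Recompute every link; editing any record without rewriting every
    later hash makes verification fail."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


# Illustrative delegation trail: AI reviewer -> council -> human approver.
bundle: List[dict] = []
append(bundle, {"actor": "ai-reviewer", "action": "review", "risk_tier": "L1"})
append(bundle, {"actor": "council", "action": "consensus", "result": "approve"})
append(bundle, {"actor": "senior-engineer", "action": "approve_merge"})
```

Because each hash covers the previous one, quietly rewriting the council's decision after the fact is detectable by anyone who re-runs `verify`.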

What This Means for Your Organization

If you’re building AI systems that make decisions, modify artifacts, or act on behalf of humans, you have governance requirements whether you’ve written them down or not.

Nomotic Mode gives you a language to write them down precisely. Not as a policy document that lives on a wiki. As a YAML file that the runtime enforces.

The gap between “what should happen” and “what does happen” closes when governance is structural instead of aspirational. Policy documents describe intentions. Nomotic Mode encodes them as constraints.

When Chris’s book launches in April, the philosophy will be publicly available. The infrastructure is available now. Together, they’re a complete answer to the question: how do you govern AI systems that act on your behalf?

Not with guidelines. Not with best practices. With formal rules that the system enforces regardless of whether the AI remembers them.

Want to encode your governance requirements in Nomotic Mode? Book a call and bring your current governance policies. I’ll show you how they translate to YAML constraints that the runtime actually enforces.