Offline Verification: Why Auditors Don't Need to Trust Us

Here is a test for your governance tool: disconnect from the internet, hand the output to a third-party auditor who has never heard of your vendor, and ask them to verify the evidence is intact. If they cannot, you do not have governance — you have vendor trust.

I have sat through SOC 2 audits where the auditor asked for evidence of our change control process. We showed them our ticketing system, our PR approvals, our deployment logs. The auditor nodded and moved on.

But what did the auditor actually verify? That we had a system. That the system contained entries. That the entries looked plausible. The auditor did not independently verify that the entries were authentic, unmodified, or complete. The auditor trusted that our systems told the truth.

That is the standard practice. And it is fragile.

The Trust Problem

Every governance tool I evaluated before building GuardSpine had the same architecture: you send your data to their API, their server processes it, their server returns a result, and you trust the result.

Think about what “trust the result” means in this context:

- You trust that their API received the correct data.

- You trust that their processing logic is correct.

- You trust that their storage has not been modified.

- You trust that their API returns the authentic result.

- You trust that nobody at the vendor modified the records.

- You trust that the vendor will continue to exist when you need to retrieve the evidence.

Each of these is a point of failure. Not a theoretical point of failure — a practical one. Vendors get acquired. APIs get deprecated. Databases get migrated with bugs. Employees make mistakes. Companies go bankrupt.

And here is the fundamental contradiction: if you need a governance tool because you cannot trust that AI-assisted changes are correct without verification, why would you trust that the governance tool’s output is correct without verification?

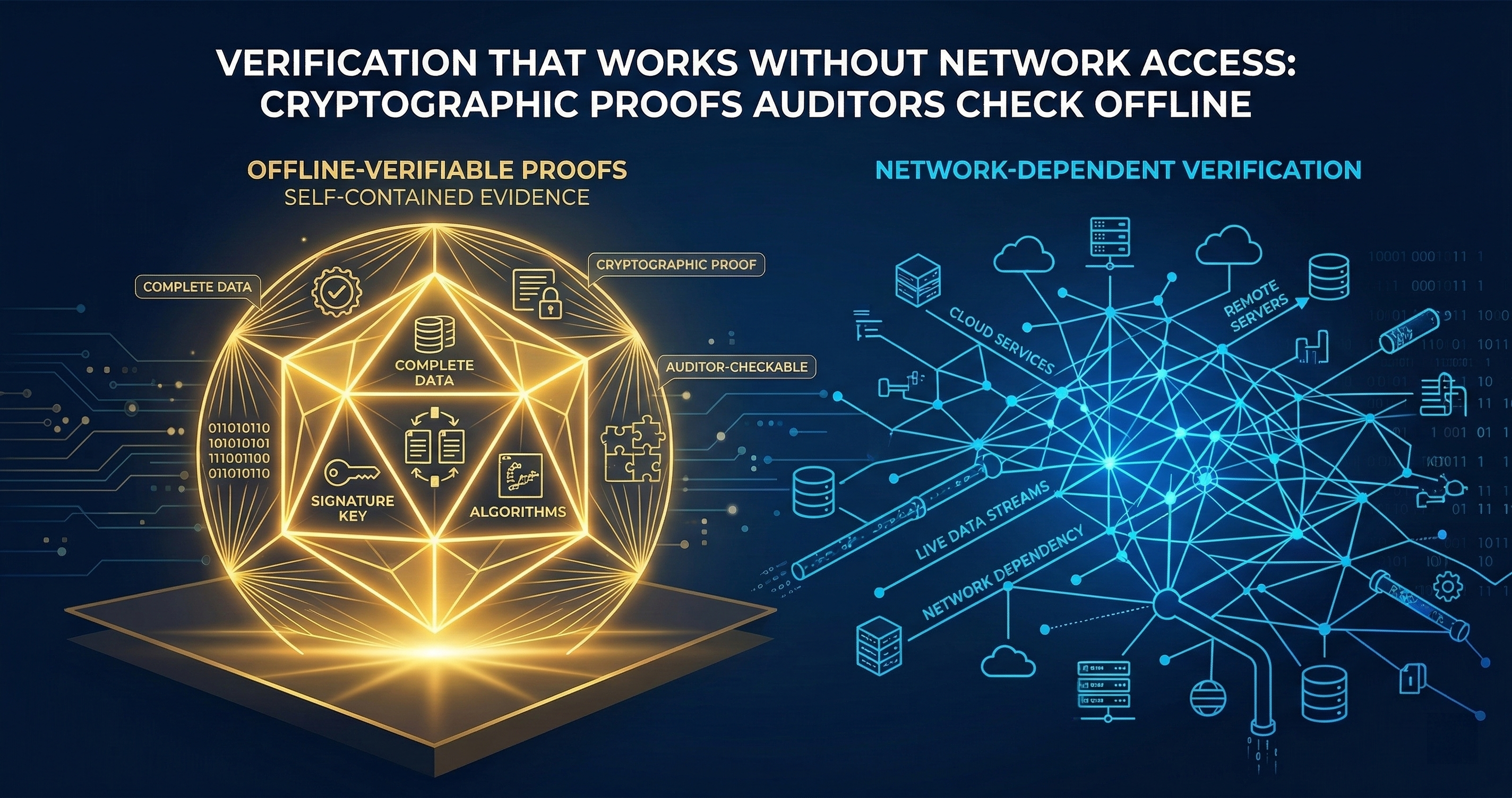

The Offline Verification Design

GuardSpine resolves this contradiction by making verification independent of GuardSpine.

An evidence bundle is a self-contained JSON file. It contains all the evidence (diffs, policy evaluations, review decisions), the cryptographic proof of integrity (hash chain, root hash), and the authenticity proof (signatures with embedded public keys).

To verify a bundle, you need:

- A JSON parser.

- A SHA-256 implementation.

- A signature verification library for the algorithm used (Ed25519, RSA-SHA256, ECDSA-P256, or HMAC-SHA256).

- Knowledge of RFC 8785 canonical JSON serialization.

You do not need:

- A network connection.

- A GuardSpine account.

- A GuardSpine API key.

- A GuardSpine license.

- The GuardSpine software installed.

- Contact information for anyone at GuardSpine.

The bundle carries everything an independent verifier needs. That is not an accident. That is the core design constraint.

The Five-Step Verification Algorithm

I covered this in the evidence bundles post, but it bears repeating in the context of why it matters for auditors.

Step 1: Verify content hashes. For each evidence item, take the content field, serialize it with RFC 8785, compute SHA-256, and compare to the stored content_hash. This proves the evidence content has not been modified since the bundle was created.

If this step fails, someone changed the evidence after the bundle was sealed. The auditor knows exactly which item was tampered with.
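A minimal sketch of step 1 in Python, using only the standard library. Two things here are assumptions, not the GuardSpine specification: the stored content_hash is taken to be a lowercase hex digest, and `canonicalize` only approximates RFC 8785 with sorted keys and compact separators. Full RFC 8785 also prescribes number formatting and key ordering by UTF-16 code units, so an independent verifier should use a conforming implementation.

```python
import hashlib
import json


def canonicalize(value) -> bytes:
    # Approximation of RFC 8785: sorted keys, no insignificant whitespace,
    # UTF-8 output. NOT fully conformant (number formatting and UTF-16
    # key ordering are not handled).
    text = json.dumps(value, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return text.encode("utf-8")


def content_hash(content) -> str:
    # SHA-256 over the canonical bytes, as a lowercase hex string (assumed format).
    return hashlib.sha256(canonicalize(content)).hexdigest()


def find_tampered_items(items: list) -> list:
    # Return the indexes of items whose recomputed hash differs from the stored one.
    return [
        index
        for index, item in enumerate(items)
        if content_hash(item["content"]) != item["content_hash"]
    ]
```

An empty list means every item still matches its stored hash. A non-empty list names the exact items that changed, which is what lets the auditor point at the tampered evidence.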

Step 2: Verify the hash chain. Starting from the genesis sentinel (index 0, all-zero content hash, chain hash = “genesis”), recompute each chain hash as SHA-256(previous_chain_hash + current_content_hash). Compare to the stored chain hashes.

If this step fails, items were inserted, deleted, or reordered after sealing. The auditor knows exactly where the chain breaks.
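Step 2 can be sketched the same way. The assumptions are loud ones: I treat "+" as string concatenation of hex digests encoded as UTF-8, and I model the chain as a list of entries with hypothetical keys content_hash and chain_hash, where entry 0 is the genesis sentinel. The real bundle layout may differ.

```python
import hashlib
from typing import Optional

GENESIS_CHAIN_HASH = "genesis"


def chain_step(previous_chain_hash: str, content_hash: str) -> str:
    # SHA-256(previous_chain_hash + current_content_hash), with "+" assumed
    # to mean string concatenation before UTF-8 encoding.
    data = (previous_chain_hash + content_hash).encode("utf-8")
    return hashlib.sha256(data).hexdigest()


def first_chain_break(entries: list) -> Optional[int]:
    # Return the index of the first entry that fails the check, or None if
    # the whole chain recomputes correctly.
    if not entries:
        return 0
    sentinel = entries[0]
    if sentinel["chain_hash"] != GENESIS_CHAIN_HASH or set(sentinel["content_hash"]) != {"0"}:
        return 0
    previous = GENESIS_CHAIN_HASH
    for index, entry in enumerate(entries[1:], start=1):
        expected = chain_step(previous, entry["content_hash"])
        if entry["chain_hash"] != expected:
            return index
        previous = expected
    return None
```

Because each chain hash depends on the one before it, swapping, deleting, or inserting an entry changes every later value, and the function reports the first index where the stored and recomputed values disagree.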

Step 3: Verify the root hash. Compute SHA-256(final_chain_hash + item_count) and compare to the stored root_hash.

If this step fails, either the chain was modified (caught in step 2) or the item count was changed (someone tried to add or remove items and recompute the chain). The count check catches truncation attacks.
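A sketch of step 3, again under assumptions I cannot confirm from this post alone: the item count is appended as a decimal string, and the result is a lowercase hex digest. Whether the count includes the genesis sentinel is left to the caller.

```python
import hashlib


def compute_root_hash(final_chain_hash: str, item_count: int) -> str:
    # SHA-256(final_chain_hash + item_count), with the count rendered as a
    # decimal string (assumed encoding).
    data = f"{final_chain_hash}{item_count}".encode("utf-8")
    return hashlib.sha256(data).hexdigest()


def root_hash_matches(final_chain_hash: str, item_count: int, stored_root_hash: str) -> bool:
    # True only if the recomputed root equals the stored one.
    return compute_root_hash(final_chain_hash, item_count) == stored_root_hash
```

Binding the count into the root means a bundle that silently drops trailing items cannot reuse the original root hash.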

Step 4: Verify signatures. For each signature in signatures[], verify it against the root hash using the specified algorithm and the embedded public key.

If this step fails, either the root hash was modified after signing (caught in step 3) or the signature was forged (which requires the private key).
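Here is a sketch of step 4 for the HMAC-SHA256 case only, because that is the one listed algorithm the Python standard library can check by itself. Ed25519, RSA-SHA256, and ECDSA-P256 need a third-party cryptography library. The field names algorithm and value, the base64 encoding of the tag, and signing over the UTF-8 bytes of the root hash string are all illustrative assumptions. HMAC uses a shared secret rather than a public key, so the key is passed in by the caller here.

```python
import base64
import hashlib
import hmac


def verify_hmac_signature(signature: dict, root_hash: str, shared_key: bytes) -> bool:
    # Check one signature entry against the root hash (HMAC-SHA256 only).
    if signature.get("algorithm") != "HMAC-SHA256":
        raise ValueError("this sketch only handles HMAC-SHA256")
    expected = hmac.new(shared_key, root_hash.encode("utf-8"), hashlib.sha256).digest()
    try:
        presented = base64.b64decode(signature["value"], validate=True)
    except ValueError:
        # Malformed base64 is treated as a failed verification.
        return False
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(expected, presented)
```

A full verifier would dispatch on the algorithm field and require every entry in signatures[] to pass.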

Step 5: Return the result. Pass or fail. No ambiguity. No “partially valid.” No “valid with warnings.” The math works or it does not.

No License Keys

This one is simple but important. There is no license check in the verification path. No phone-home. No telemetry. No usage tracking.

Our guardspine-verify CLI does not check whether you have a license, whether your license is current, whether you are an authorized user, or whether you have exceeded a verification quota. It reads a JSON file, does math, and prints the result.

If we went out of business tomorrow, every evidence bundle ever created would still be verifiable. The CLI is open source (Apache 2.0). The algorithm is documented. The test vectors are public.

I have seen vendors whose “compliance evidence” becomes inaccessible when the contract lapses. That is not evidence. That is a hostage situation.

No API Calls

The verification process makes zero network requests. No DNS lookups. No HTTP calls. No certificate revocation checks against our servers. No timestamp validation against an NTP server we control.

Why does this matter? Because network-dependent verification has a threat model problem: the network is a potential attack surface. If verification requires calling our API, someone who compromises our API can make tampered bundles verify as valid. If verification is purely local computation, the only attack surface is the verifier’s own machine and the cryptographic primitives.

It also matters practically. Air-gapped environments exist. Classified networks exist. Regulated environments that prohibit outbound connections from audit workstations exist. If your governance tool requires internet connectivity to verify evidence, it cannot be used in these environments.

No Telemetry

The guardspine-verify CLI does not report what bundles you verified, when you verified them, how many you verified, or whether they passed or failed. We do not know. We cannot know. The code does not contain telemetry of any kind.

Audit activities should not be observable by the entity being audited. If we could see which bundles auditors are verifying, we could theoretically infer which changes are under scrutiny and prepare accordingly. The absence of telemetry is a security property, not just a privacy feature.

What an Auditor Actually Does

Here is the workflow for a third-party auditor who has never heard of GuardSpine:

Day 1: The organization hands the auditor a USB drive containing evidence bundle JSON files and a copy of the guardspine-verify binary (or the source code, if the auditor prefers to compile it themselves).

Day 1, hour 2: The auditor reads the verification algorithm documentation. It is five steps, each described in one paragraph. The auditor understands it.

Day 1, hour 3: The auditor runs guardspine-verify on a sample bundle. It passes. The auditor inspects the output, cross-references the content hashes manually for one item, confirms the math checks out.

Day 1, hour 4: The auditor writes a script to batch-verify all bundles on the USB drive. Every bundle either passes or fails. The auditor investigates any failures.

Day 2: The auditor examines the content of the bundles. Each bundle contains the actual evidence: what changed (diffs), what policies evaluated (policy-eval items), who reviewed (approval items), what they said (rationale items). The auditor can read every decision that was made and why.

Day 3: The auditor writes their report. They can state with confidence: “We independently verified the integrity of N evidence bundles covering the audit period. Each bundle’s hash chain, root hash, and signatures were validated using [algorithm]. The evidence within each bundle documents the change, risk classification, policy evaluation, and review decision for each governed change.”

Compare this to the typical audit workflow: “We logged into the vendor’s dashboard and observed that their system contained records of N changes. We relied on the vendor’s representations regarding the integrity and completeness of these records.”

The difference is the difference between evidence and testimony.
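The batch-verification script the auditor writes on day 1 does not need to be elaborate. A minimal sketch, assuming the bundles are .json files under one directory and that the auditor supplies a verify_bundle callable (hypothetical; it could be their own implementation of the five steps):

```python
import json
from pathlib import Path
from typing import Callable


def batch_verify(directory, verify_bundle: Callable[[dict], bool]) -> dict:
    # Map each bundle path under `directory` to a pass/fail result.
    results = {}
    for path in sorted(Path(directory).rglob("*.json")):
        try:
            bundle = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            # Unreadable or malformed files count as failures to investigate.
            results[str(path)] = False
            continue
        results[str(path)] = bool(verify_bundle(bundle))
    return results
```

Anything mapped to False goes on the list of bundles to investigate by hand.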

The Trust Hierarchy

Let me be precise about what you are trusting when you verify a GuardSpine evidence bundle:

You trust SHA-256. Specifically, you trust that SHA-256 is collision-resistant and second-preimage-resistant: nobody can find two different inputs that produce the same hash, and nobody can craft a different input that matches the hash of an existing evidence item. These are well-studied properties of a well-studied algorithm. If SHA-256 is broken, you have bigger problems than governance.

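You trust the signature algorithm. Ed25519, RSA-SHA256, and ECDSA-P256 are standard, audited, widely deployed algorithms. You are already trusting them for TLS, SSH, and code signing. HMAC-SHA256 is the exception: it is a keyed MAC rather than a public-key signature, so a verifier also needs the shared secret, and anyone holding that secret can produce valid tags.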

You trust the signer’s private key was not compromised. This is the real trust boundary. If the private key used to sign the bundle is stolen, an attacker can create forged bundles. Key management matters. HSMs help. Key rotation helps. But this is a well-understood problem with well-understood mitigations.

You trust RFC 8785 canonical JSON. A specification with a test suite, independent implementations, and deterministic behavior. Low risk.

Notice what is not in this list: GuardSpine. You do not have to trust our servers, our employees, or our business continuity. You do not even have to trust our software, if you use your own verifier.

The trust is in the math. The math does not have opinions, outages, or quarterly earnings targets.

Building Trust Gradually

You do not have to adopt offline verification all at once. Here is a progression:

Week 1: Install the GitHub Action. Let it run. Read the evidence bundles it produces. Get comfortable with the format.

Week 2: Run guardspine-verify on a few bundles manually. Confirm that the output matches what you expect.

Week 3: Write a script that pulls evidence bundles from your CI artifacts and batch-verifies them. Set up an alert if any bundle fails verification.

Week 4: Share the verification documentation with your security team. Ask them to verify a bundle independently, without using our tools.

Month 2: Include evidence bundles in your next audit. Let the auditor verify them offline.

By month 2, you have a governance trail that does not depend on us. If you decide to switch to a different governance tool, your historical evidence still verifies. If we raise our prices, your audit trail still works. If we cease to exist, your compliance posture is unchanged.

That is what “vendor-independent governance” actually means. Not “our vendor says they are independent.” Independent by construction. Verifiable by anyone. Trustworthy because the math is trustworthy.

The Real Question

The real question is not “should I use offline verification?” The real question is “why would I accept governance evidence I cannot independently verify?”

If your current governance tool produces output that only their dashboard can interpret, ask them why. If the answer involves “proprietary algorithms” or “our secure cloud infrastructure,” ask what happens when the contract expires. If the answer is “you lose access to your compliance evidence,” you now know the real product you are buying: not governance, but continued access to your own audit trail.

Evidence that you can only access through the vendor is not your evidence. It is their evidence, about you, that they let you see. There is a difference.

Book a call if you want to see offline verification in action with your own data. Bring your auditor if you want — they will understand the algorithm in 30 minutes.