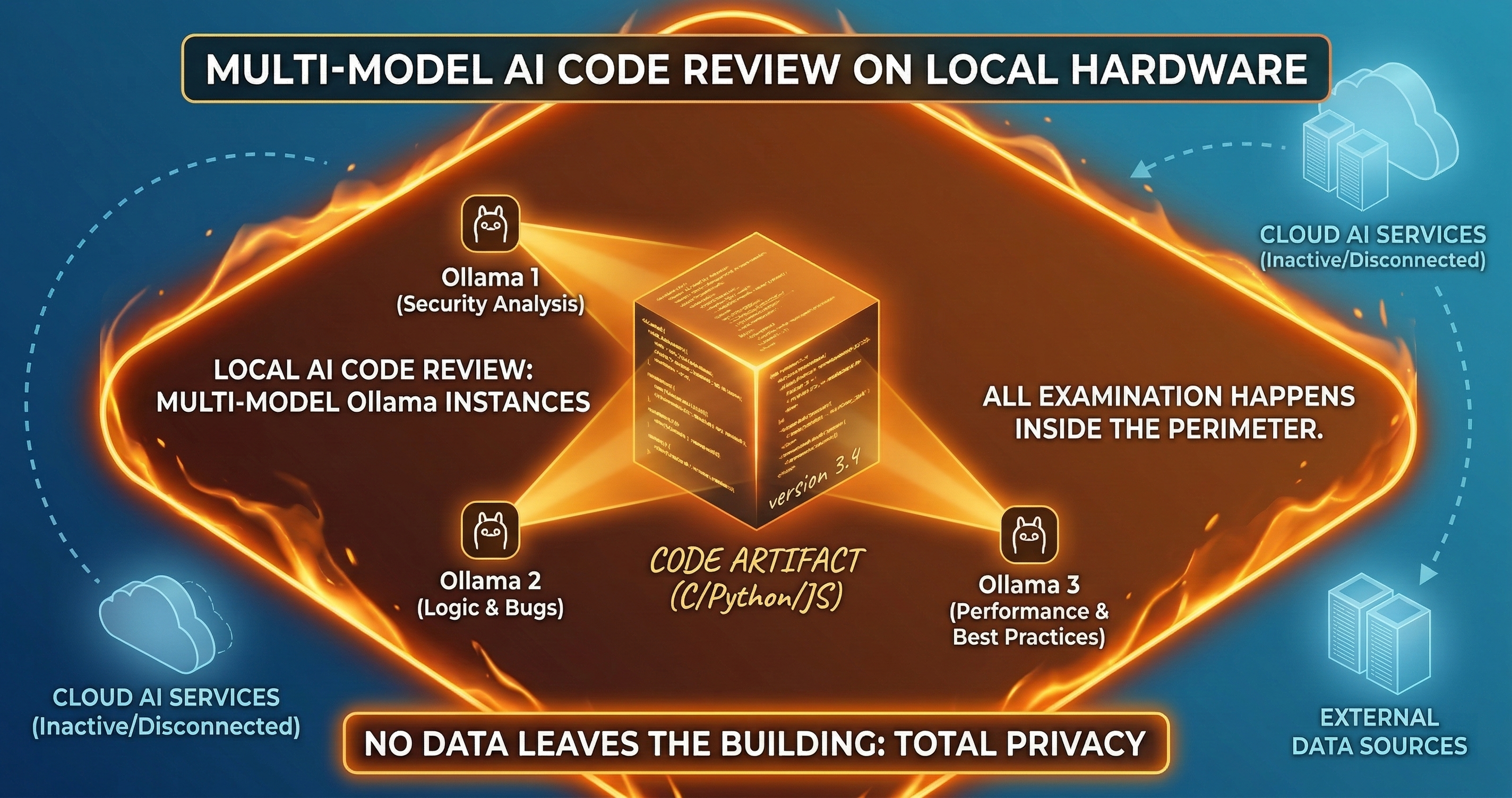

The Local Council: AI Code Review Without Sending Code Externally

Your CISO says no code can leave the network. Your VP Engineering wants AI code review. These aren't contradictory -- they just require a different architecture.

Most AI code review tools send your diffs to an external API. Claude, GPT, Gemini — they all run on someone else’s infrastructure. For many teams, that’s fine. For defense contractors, healthcare systems, financial institutions, and anyone with strict data residency requirements, that’s a non-starter.

The Local Council can run entirely on your hardware.

The Data Residency Problem

Data residency isn’t just a preference. It’s a legal requirement for specific industries and jurisdictions.

ITAR-regulated defense contractors cannot send controlled technical data to servers outside their approved environment. HIPAA-covered entities must ensure PHI doesn’t leave their compliant infrastructure without a BAA. European organizations under GDPR face restrictions on data transfers outside the EU. Financial institutions under SOX and GLBA have data handling requirements that their compliance teams interpret conservatively.

When your compliance team says “no external APIs,” they’re not being difficult. They’re reading the regulation correctly. The question isn’t whether they’re right. The question is whether you can get AI code review without violating the constraint.

How the Local Council Works

The Local Council is a multi-model review system that runs on-premise. Multiple LLMs review the same diff independently, vote on findings, and reach consensus — all within your network boundary.

Step 1: Model Selection. You configure which models participate. Ollama is the primary local provider, supporting models like CodeLlama, DeepSeek Coder, Mistral, and Llama variants. Any model that runs on your hardware qualifies. The council requires at least two models for meaningful consensus — three is better.
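A minimal sketch of the Step 1 check, assuming a hypothetical `CouncilMember` shape (the article doesn't publish its configuration format). It rejects a council with fewer than two models and warns about two-model councils and duplicate members. The model tags are ordinary Ollama tags used only for illustration.

```typescript
// Hypothetical shape for one council member; not the product's actual config format.
interface CouncilMember {
  model: string; // e.g. an Ollama model tag such as "codellama:13b"
  provider: "ollama" | "anthropic" | "openai" | "openrouter" | "mcp" | "hook";
}

// Throws for councils too small to vote meaningfully; returns warnings for
// configurations that are allowed but weak.
function validateCouncil(members: CouncilMember[]): string[] {
  if (members.length < 2) {
    throw new Error(`council needs at least 2 models, got ${members.length}`);
  }
  const warnings: string[] = [];
  if (members.length < 3) {
    warnings.push("a two-model council cannot break a 1-1 split; three is better");
  }
  // The same model listed twice adds a vote but not an independent opinion.
  const unique = new Set(members.map((m) => `${m.provider}/${m.model}`));
  if (unique.size !== members.length) {
    warnings.push("duplicate members detected");
  }
  return warnings;
}

// Two distinct local models: valid, with the two-model warning.
const warnings = validateCouncil([
  { model: "codellama:13b", provider: "ollama" },
  { model: "deepseek-coder:6.7b", provider: "ollama" },
]);
```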

Step 2: Independent Review. Each model receives the diff and reviews it independently. No model sees another model’s output during review. This matters because independent reviews catch different classes of issues. A model trained heavily on security data tends to catch vulnerabilities. A general-purpose code model tends to catch logic errors. A model fine-tuned on your codebase can catch convention violations.
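The isolation in Step 2 can be sketched as follows. This is an illustration under assumptions, not the product's code: `Reviewer` and `reviewIndependently` are hypothetical names, and a real reviewer would wrap a model call (for Ollama, a POST to its local `/api/generate` endpoint). Each reviewer's only input is the diff, and one failing model doesn't discard the others' votes.

```typescript
type Decision = "approve" | "request_changes" | "comment";

interface Vote {
  model: string;
  decision: Decision;
}

// Hypothetical reviewer abstraction: the only input is the diff.
// A real implementation would call a model here and parse its reply.
interface Reviewer {
  model: string;
  review: (diff: string) => Promise<Vote>;
}

// Starts every review at once. No reviewer is given another's output, so
// each verdict is formed independently. A model that throws is recorded
// as failed instead of aborting the whole council.
async function reviewIndependently(
  diff: string,
  reviewers: Reviewer[],
): Promise<{ votes: Vote[]; failed: string[] }> {
  const outcomes = await Promise.all(
    reviewers.map(async (r) => {
      try {
        return { model: r.model, vote: await r.review(diff) };
      } catch {
        return { model: r.model, vote: null };
      }
    }),
  );
  const votes: Vote[] = [];
  const failed: string[] = [];
  for (const o of outcomes) {
    if (o.vote) votes.push(o.vote);
    else failed.push(o.model);
  }
  return { votes, failed };
}
```

Reporting `failed` separately matters for quorum: a model that errors out is not a vote and should not be counted as one.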

Step 3: Voting. Each model produces a ReviewVote:

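```typescript
// Assumed shape: the article describes findings only as
// "specific findings with line references".
interface Finding {
  file: string;
  line: number;
  message: string;
}

// One model's independent verdict on a diff.
interface ReviewVote {
  model: string;       // model identifier, e.g. an Ollama model tag
  provider: string;    // where the model runs, e.g. "ollama"
  decision: "approve" | "request_changes" | "comment";
  confidence: number;  // the model's confidence in its decision
  findings: Finding[]; // specific issues, each with a line reference
  timestamp: string;   // when the vote was cast
}
```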

The vote includes the model’s decision, its confidence level, and specific findings with line references. Every vote is recorded in the evidence bundle regardless of the outcome.
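Models return text, not typed objects, so something has to turn a reply into a `ReviewVote`. A minimal sketch, assuming the model is prompted to answer in JSON, that confidence uses a 0 to 1 scale, and that a finding has a hypothetical `file`/`line`/`message` shape; none of these details come from the article. Malformed replies are rejected rather than counted.

```typescript
// Hypothetical Finding shape; the article only says findings carry line references.
interface Finding {
  file: string;
  line: number;
  message: string;
}

interface ReviewVote {
  model: string;
  provider: string;
  decision: "approve" | "request_changes" | "comment";
  confidence: number;
  findings: Finding[];
  timestamp: string;
}

const DECISIONS: readonly string[] = ["approve", "request_changes", "comment"];

// Converts a model's raw JSON reply into a ReviewVote. Anything malformed
// throws, so a garbled answer can never be counted as an approval.
function parseVote(raw: string, model: string, provider: string): ReviewVote {
  const parsed: unknown = JSON.parse(raw);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error(`${model}: reply is not a JSON object`);
  }
  const data = parsed as Record<string, unknown>;
  const decision = data["decision"];
  if (typeof decision !== "string" || !DECISIONS.includes(decision)) {
    throw new Error(`${model}: unknown decision ${String(decision)}`);
  }
  // Assumption: confidence is on a 0..1 scale.
  const confidence = data["confidence"];
  if (typeof confidence !== "number" || confidence < 0 || confidence > 1) {
    throw new Error(`${model}: confidence must be a number between 0 and 1`);
  }
  const findingsField = data["findings"];
  const rawFindings = Array.isArray(findingsField) ? findingsField : [];
  const findings: Finding[] = rawFindings.map((f) => {
    if (typeof f?.file !== "string" || !Number.isInteger(f?.line) || typeof f?.message !== "string") {
      throw new Error(`${model}: finding lacks a file/line reference`);
    }
    return { file: f.file, line: f.line, message: f.message };
  });
  return {
    model,
    provider,
    decision: decision as ReviewVote["decision"],
    confidence,
    findings,
    timestamp: new Date().toISOString(),
  };
}
```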

Step 4: Consensus. The votes are aggregated according to your configured consensus type.

Consensus Types

Four consensus types let you calibrate the council’s strictness based on your risk tolerance.

UNANIMOUS. Every model must agree. If one model requests changes, the PR gets flagged. This is the strictest mode. Use it for L3-L4 risk tier changes: authentication, authorization, cryptography, payment logic. The cost is occasional false positives. The benefit is that any single model's objection is enough to stop the change.

SUPERMAJORITY. Two-thirds of models must agree. A three-model council needs two agreements. A six-model council needs four. This filters out single-model false positives while maintaining strong coverage. Good default for most teams.

MAJORITY. More than half must agree. Simpler threshold, faster decisions. Use it when you want AI review as a signal but not a gate. Teams that are transitioning from no-review to reviewed often start here.

PLURALITY. The most common vote wins, even without a majority. In a four-model council, two approvals beat one request for changes and one comment, even though approval holds only half the votes. This is the loosest mode. I only recommend it for L0-L1 changes where you want AI commentary without blocking.
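The four rules reduce to a little arithmetic. A sketch with hypothetical function names, assuming votes are counted per decision, a tie for first place yields `no_consensus`, and the caller treats anything other than `approve` as a flag. The supermajority threshold is `Math.ceil(2n / 3)`, which gives the two-of-three and four-of-six figures above.

```typescript
type Decision = "approve" | "request_changes" | "comment";
type ConsensusType = "UNANIMOUS" | "SUPERMAJORITY" | "MAJORITY" | "PLURALITY";
type Outcome = Decision | "no_consensus";

// Minimum number of agreeing votes among n voters for the threshold modes.
function requiredVotes(type: Exclude<ConsensusType, "PLURALITY">, n: number): number {
  switch (type) {
    case "UNANIMOUS":
      return n; // every model
    case "SUPERMAJORITY":
      return Math.ceil((2 * n) / 3); // at least two-thirds: 3 -> 2, 6 -> 4
    case "MAJORITY":
      return Math.floor(n / 2) + 1; // strictly more than half
  }
}

// The decision with the most votes, or null when first place is tied.
function leader(votes: Decision[]): { decision: Decision; count: number } | null {
  const counts = new Map<Decision, number>();
  for (const v of votes) counts.set(v, (counts.get(v) ?? 0) + 1);
  let best: { decision: Decision; count: number } | null = null;
  let tied = false;
  for (const [decision, count] of counts) {
    if (best === null || count > best.count) {
      best = { decision, count };
      tied = false;
    } else if (count === best.count) {
      tied = true;
    }
  }
  return tied ? null : best;
}

function consensus(type: ConsensusType, votes: Decision[]): Outcome {
  const top = leader(votes);
  if (top === null) return "no_consensus";
  // PLURALITY: the leader wins even without a majority.
  if (type === "PLURALITY") return top.decision;
  // Threshold modes: the leader must also clear the required count.
  return top.count >= requiredVotes(type, votes.length) ? top.decision : "no_consensus";
}
```

The sketch can return `comment` as the winning decision; how that maps onto `CouncilResult.consensus`, which has no comment value, isn't specified, so it is passed through unchanged.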

Provider Support

The Local Council isn’t limited to Ollama. It supports multiple providers with different deployment characteristics.

Ollama (Primary Local). Runs on your hardware. Supports quantized models that fit on consumer GPUs. A quantized 7B-parameter model runs on a single GPU with 8GB of VRAM. A 34B model needs more, but the quality jump is significant. No data leaves your machine.

Anthropic, OpenAI, OpenRouter. Cloud providers for teams that can use external APIs for some code. You might run the Local Council with Ollama for your core product and add Claude for your open-source projects. The council architecture lets you mix local and cloud providers in the same system.
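If you mix local and cloud providers, it's worth enforcing the boundary in code rather than by convention. A hypothetical guard, assuming each repository carries a `restricted` flag and that Ollama, MCP, and hook members run inside your network (an MCP server could front an external model, so check yours):

```typescript
type Provider = "ollama" | "mcp" | "hook" | "anthropic" | "openai" | "openrouter";

interface Member {
  model: string;
  provider: Provider;
}

// Providers whose APIs live outside your network. Assumption: Ollama, hooks,
// and your MCP servers run inside it.
const EXTERNAL: ReadonlySet<Provider> = new Set<Provider>(["anthropic", "openai", "openrouter"]);

// Hypothetical guard: refuse to review a restricted repository with a council
// that would send its diff to an external API.
function assertResidency(repo: { name: string; restricted: boolean }, members: Member[]): void {
  if (!repo.restricted) return;
  const external = members.filter((m) => EXTERNAL.has(m.provider));
  if (external.length > 0) {
    const names = external.map((m) => `${m.provider}/${m.model}`).join(", ");
    throw new Error(`${repo.name} is restricted; external providers not allowed: ${names}`);
  }
}
```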

MCP (Model Context Protocol). Any model accessible via MCP can participate. If your organization runs a private model behind an MCP server, the council can use it. This is how large enterprises with custom fine-tuned models plug into the system.

Hooks. Custom review hooks let you add non-AI reviewers to the council. A static analysis tool can cast a vote. A linter can cast a vote. Your existing tooling participates in the same consensus process as the AI models.
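A hook only needs to produce the same vote shape as a model. A sketch with a hypothetical `LintIssue` type and `lintVote` adapter; the mapping (errors request changes, warnings only comment, clean output approves) and the fixed confidence of 1 are assumptions, not documented behavior.

```typescript
// Hypothetical linter output; adapt to whatever your tool emits.
interface LintIssue {
  file: string;
  line: number;
  message: string;
  severity: "error" | "warning";
}

interface ReviewVote {
  model: string;
  provider: string;
  decision: "approve" | "request_changes" | "comment";
  confidence: number;
  findings: { file: string; line: number; message: string }[];
  timestamp: string;
}

// Wraps a deterministic tool's results as a council vote.
function lintVote(tool: string, issues: LintIssue[], now: Date = new Date()): ReviewVote {
  const decision = issues.some((i) => i.severity === "error")
    ? "request_changes" // any error blocks
    : issues.length > 0
      ? "comment" // warnings are reported without blocking
      : "approve";
  return {
    model: tool,
    provider: "hook",
    decision,
    confidence: 1, // assumption: a deterministic tool is fully confident in its own output
    findings: issues.map(({ file, line, message }) => ({ file, line, message })),
    timestamp: now.toISOString(),
  };
}
```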

Quorum Validation

A council with two models where one is offline isn’t a council. It’s a single reviewer with extra steps. Quorum validation prevents this.

Before any review begins, the system verifies that enough models are available to meet the configured consensus threshold. If you require SUPERMAJORITY with three models and only one responds to the health check, the review doesn’t proceed. It fails with an explicit error: “quorum not met, 1 of 3 required models available.”
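A sketch of the pre-flight check, with hypothetical names. The set of healthy models would come from health probes; for Ollama, `GET /api/tags` lists the models available locally. `minimumModels` plays the role of `minimum_models` in the quorum configuration, and the error text matches the message quoted above.

```typescript
// Mirrors the quorum fields that end up in the CouncilResult.
interface QuorumStatus {
  quorum_met: boolean;
  models_available: number;
  models_required: number;
}

// Counts configured models that passed their health check.
function checkQuorum(configured: string[], healthy: ReadonlySet<string>, minimumModels: number): QuorumStatus {
  const available = configured.filter((m) => healthy.has(m)).length;
  return { quorum_met: available >= minimumModels, models_available: available, models_required: minimumModels };
}

// Refuses to start a review without quorum, with an explicit error.
function assertQuorum(configured: string[], healthy: ReadonlySet<string>, minimumModels: number): QuorumStatus {
  const status = checkQuorum(configured, healthy, minimumModels);
  if (!status.quorum_met) {
    throw new Error(
      `quorum not met, ${status.models_available} of ${status.models_required} required models available`,
    );
  }
  return status;
}
```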

This prevents the silent degradation that happens when infrastructure fails quietly. You don’t discover three months later that your “multi-model review” was actually a single model rubber-stamping everything because the other two were down.

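```typescript
interface QuorumConfig {
  minimum_models: number; // models that must pass the health check before a review starts
  consensus_type: "UNANIMOUS" | "SUPERMAJORITY" | "MAJORITY" | "PLURALITY";
  timeout_seconds: number; // how long to wait for a model's vote
  fallback_on_timeout: "block" | "flag" | "pass"; // what to do when a model exceeds the timeout
}
```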

The fallback_on_timeout setting controls what happens when a model takes too long. “Block” stops the PR. “Flag” allows it but marks the evidence bundle as incomplete. “Pass” lets it through; use it only for L0 changes, where the cost of blocking outweighs the risk.
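One way to implement the timeout and the three fallbacks, assuming a hypothetical `withTimeout` helper built on `Promise.race` and a `TIMED_OUT` sentinel. The helper clears its timer when the vote arrives first, so a fast model doesn't leave a pending timer behind.

```typescript
type Fallback = "block" | "flag" | "pass";
type TimeoutResult = "blocked" | "allowed_incomplete" | "allowed";

// The three fallback_on_timeout modes.
function onTimeout(fallback: Fallback): TimeoutResult {
  switch (fallback) {
    case "block":
      return "blocked"; // stop the PR
    case "flag":
      return "allowed_incomplete"; // let it through, mark the evidence bundle incomplete
    case "pass":
      return "allowed"; // let it through
  }
}

const TIMED_OUT = Symbol("timed out");

// Resolves with the model's result, or TIMED_OUT if it takes longer than ms.
async function withTimeout<T>(work: Promise<T>, ms: number): Promise<T | typeof TIMED_OUT> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const deadline = new Promise<typeof TIMED_OUT>((resolve) => {
    timer = setTimeout(() => resolve(TIMED_OUT), ms);
  });
  try {
    return await Promise.race([work, deadline]);
  } finally {
    clearTimeout(timer); // no-op if the deadline already fired
  }
}
```

A caller would race each model's review against `timeout_seconds * 1000` and, on `TIMED_OUT`, apply `onTimeout(config.fallback_on_timeout)`.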

The Evidence Bundle

Every council review produces an evidence bundle. The bundle contains:

- The original diff

- Each model’s independent ReviewVote

- The consensus calculation with the threshold used

- The final CouncilResult

- Timestamps and model versions for reproducibility

- A hash chain linking this evidence to previous reviews

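```typescript
// ReviewVote is the per-model vote type defined earlier.
interface CouncilResult {
  consensus: "approve" | "request_changes" | "no_consensus";
  consensus_type: string;    // which threshold was applied, e.g. "SUPERMAJORITY"
  votes: ReviewVote[];       // every model's vote, regardless of outcome
  quorum_met: boolean;
  models_available: number;  // models that passed the health check
  models_required: number;   // the quorum the configuration demanded
  evidence_hash: string;     // link in the hash chain of previous reviews
  timestamp: string;
}
```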

The CouncilResult is the audit artifact. When a compliance auditor asks “who reviewed this change and what did they find?” — here’s the answer. Not “Dave approved it on a Friday afternoon.” Instead: three models reviewed it independently, two approved with high confidence, one flagged a potential issue that was addressed in a follow-up commit, and the evidence is tamper-evident.
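The hash chain is what makes the bundle tamper-evident. A minimal sketch using SHA-256 from Node's `crypto` module; `appendEvidence` and `verifyChain` are hypothetical names, and it assumes each bundle is serialized the same way every time (here, `JSON.stringify` on an object built with a fixed key order).

```typescript
import { createHash } from "node:crypto";

interface EvidenceEntry {
  payload: string; // serialized evidence bundle
  prevHash: string; // hash of the previous entry
  hash: string; // SHA-256 over prevHash followed by payload
}

const GENESIS = "0".repeat(64); // stand-in "previous hash" for the first entry

// prevHash is fixed-length hex, so prevHash + payload is unambiguous.
function entryHash(prevHash: string, payload: string): string {
  return createHash("sha256").update(prevHash).update(payload).digest("hex");
}

// Returns a new chain with the bundle appended.
function appendEvidence(chain: EvidenceEntry[], bundle: object): EvidenceEntry[] {
  const last = chain[chain.length - 1];
  const prevHash = last ? last.hash : GENESIS;
  const payload = JSON.stringify(bundle);
  return [...chain, { payload, prevHash, hash: entryHash(prevHash, payload) }];
}

// An edited payload fails its own hash check; recomputing that hash
// breaks the next entry's prevHash link.
function verifyChain(chain: EvidenceEntry[]): boolean {
  let prev = GENESIS;
  for (const entry of chain) {
    if (entry.prevHash !== prev || entry.hash !== entryHash(prev, entry.payload)) {
      return false;
    }
    prev = entry.hash;
  }
  return true;
}
```

A chain on its own is tamper-evident, not tamper-proof: someone able to rewrite every entry can recompute every hash, so recording the latest hash somewhere separate (a CI log, for instance) makes a silent rewrite harder.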

Deployment Architecture

The Local Council runs as a sidecar process or a dedicated service within your network. It doesn’t need internet access. It doesn’t phone home. It doesn’t collect telemetry.

Single Machine. Install Ollama, pull two or three models, configure the council. Reviews run on the same machine as your CI/CD. Good for small teams and evaluation.

Dedicated Server. A GPU server running Ollama with larger models. CI/CD jobs call the council service over your internal network. Better performance, better model quality, and the GPU cost is shared across all repositories.
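Whichever topology you pick, the CI job ultimately turns a `CouncilResult` into pass or fail. A hypothetical gate, assuming the exit-code policy shown: a missed quorum gets its own code so an infrastructure failure isn't mistaken for a review verdict, and whether `no_consensus` blocks is a per-repository choice.

```typescript
// Only the CouncilResult fields the gate needs.
interface CouncilResult {
  consensus: "approve" | "request_changes" | "no_consensus";
  quorum_met: boolean;
  models_available: number;
  models_required: number;
}

// Hypothetical exit-code policy for a CI step:
// 0 = merge may proceed, 1 = review blocks the PR, 2 = council could not run.
function ciExitCode(result: CouncilResult, blockOnNoConsensus: boolean): number {
  if (!result.quorum_met) return 2;
  if (result.consensus === "request_changes") return 1;
  if (result.consensus === "no_consensus" && blockOnNoConsensus) return 1;
  return 0;
}
```

In a Node-based CI step you could assign the returned value to `process.exitCode`.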

Kubernetes. The council runs as a service in your cluster. Models are pre-loaded on GPU nodes. Horizontal scaling handles review load during peak PR times. This is how enterprises with hundreds of developers run it.

In all three cases, no code leaves your infrastructure. The review happens where the code lives.

What You Give Up

I won’t pretend local models match cloud frontier models on every task. They don’t. GPT-4 and Claude catch more subtle logic errors than a 7B parameter local model. The gap narrows with larger local models (34B, 70B), but it doesn’t disappear.

What you gain is data residency compliance, full control over the review pipeline, no per-API-call costs, and predictable infrastructure that doesn’t depend on someone else’s uptime.

The council architecture compensates for individual model quality with quantity and diversity. Three mediocre reviewers who disagree on a finding are still more useful than one excellent reviewer you’re not allowed to use.

Making the Business Case

Your CISO doesn’t care about model quality comparisons. They care about data residency compliance. Your VP Engineering doesn’t care about compliance frameworks. They care about catching bugs before production.

The Local Council gives both of them what they want without either compromising. The CISO gets an architecture diagram showing zero external data flows. The VP Engineering gets multi-model AI code review with evidence bundles.

The common objection is cost. GPU hardware isn’t free. But compare it to the cost of a data breach in a regulated industry: IBM’s 2023 Cost of a Data Breach report put the global average at $4.45M and the healthcare average at $10.93M. Compare it to the cost of manual code review at scale. A single GPU server running three Ollama models costs less per year than one additional senior engineer doing full-time code review.

The compliance evidence is a bonus. Most teams adopting the Local Council came for the data residency. They stayed for the evidence bundles.

Running in a regulated environment where code can’t leave your network? Book a call and I’ll walk you through the Local Council setup for your infrastructure.