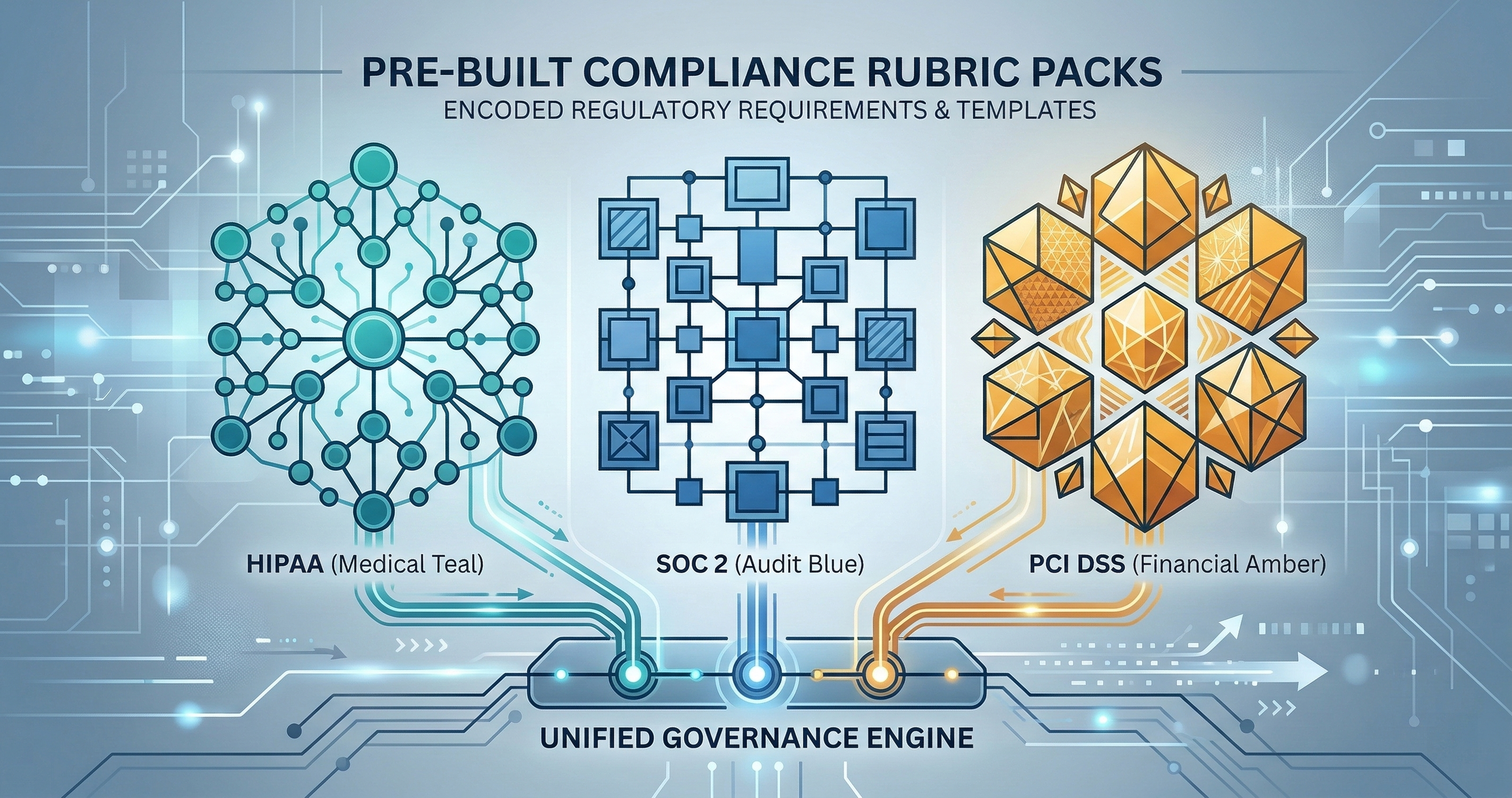

HIPAA/SOC2/PCI-DSS: Compliance Rubric Packs

Your HIPAA compliance program generates evidence from manual processes. Someone reviews a change. Someone writes a note. Someone files the note in a folder that an auditor will open six months later and squint at.

What happens when 60% of the changes are AI-assisted and nobody manually reviewed them? The rubric pack generates the evidence automatically.

The Compliance Evidence Problem

Every regulated industry has the same pattern. A framework exists (HIPAA, SOC2, PCI-DSS). The framework defines requirements. Requirements become checklists. Checklists become someone’s job. That someone fills out forms after the fact, reconstructing what happened from memory and git logs.

And now add the EU AI Act to that list. Most of its obligations start applying on August 2, 2026, and procurement teams are already adding AI governance requirements to RFPs. If your compliance evidence for AI-assisted changes is “someone reviewed it, probably,” that answer has an expiration date.

This worked when humans made every change. You could interview the person who made the change. You could ask why they chose that approach. The change carried context because a human carried it.

AI-assisted changes don’t carry context. The model doesn’t remember why it chose that approach. The developer who prompted it may not fully understand the output. The gap between “change happened” and “evidence exists” widens with every AI-assisted commit.

Manual evidence collection doesn’t scale to AI-speed development. You need evidence generation that runs at the same speed as the changes.

What a Rubric Pack Is

A rubric pack is a YAML file that encodes compliance requirements as machine-evaluable rules. Not a checklist. Not a guideline document. A set of conditions that can be evaluated against a diff, a file, or an artifact automatically.

Here’s the structure:

    rubric_pack:
      framework: HIPAA
      version: "1.0.0"
      rules:
        - id: hipaa-phi-001
          name: PHI Field Access Control
          description: Any code accessing PHI fields must include access control checks
          severity: critical
          match:
            patterns:
              - "patient_name"
              - "date_of_birth"
              - "ssn"
              - "medical_record"
          require:
            - access_control_check
            - audit_log_entry
          evidence_fields:
            - reviewer_model
            - access_control_method
            - audit_log_destination

The rubric says: if a change touches a PHI field, require access control and audit logging. If those aren’t present, the change fails the rubric. If they are, the evidence bundle records exactly which controls were applied, which model reviewed it, and where the audit log goes.

No human had to fill out a form. The evidence was generated by the evaluation.
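A minimal sketch of that evaluation in Python, assuming the YAML has already been parsed into a `Rule` object. The `Rule` class, the `evaluate` function, the regex signals (`require_role(`, `audit_log(`), and the `NOT_APPLICABLE` result are illustrative assumptions, not the rubric system’s actual API. A real evaluator would analyze the code path rather than search for call names.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    id: str
    patterns: list[str]
    # Hypothetical: requirement name -> regex whose presence signals the control.
    require: dict[str, str]


def evaluate(rule: Rule, code: str) -> dict:
    """Evaluate one rule against one snippet of changed code."""
    # A rule applies only if the change touches one of its match patterns.
    matched = [p for p in rule.patterns if re.search(rf"\b{re.escape(p)}\b", code)]
    if not matched:
        return {"rule_id": rule.id, "result": "NOT_APPLICABLE", "matched": [], "missing": []}
    # Every required control must leave a detectable signal in the code.
    missing = [name for name, signal in rule.require.items() if not re.search(signal, code)]
    result = "FAIL" if missing else "PASS"
    return {"rule_id": rule.id, "result": result, "matched": matched, "missing": missing}


HIPAA_PHI_001 = Rule(
    id="hipaa-phi-001",
    patterns=["patient_name", "date_of_birth", "ssn", "medical_record"],
    require={
        "access_control_check": r"require_role\(",
        "audit_log_entry": r"audit_log\(",
    },
)

guarded = """
def get_patient(user, pid):
    require_role(user, "clinician")
    audit_log(user, "read", pid)
    return db.fetch(pid).patient_name
"""
unguarded = "def get_patient(pid):\n    return db.fetch(pid).ssn\n"

print(evaluate(HIPAA_PHI_001, guarded)["result"])
print(evaluate(HIPAA_PHI_001, unguarded)["missing"])
```

The `missing` list is what turns a failure into an explanation: it names the controls the rubric expected and did not find.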

Three Framework Packs

HIPAA: Protected Health Information

HIPAA compliance cares about five things when code changes: PHI handling, access controls, audit trails, encryption at rest and in transit, and breach notification mechanisms.

The HIPAA rubric pack maps these to concrete code patterns:

PHI Handling Rules. Any function that reads, writes, or transmits fields classified as PHI must have corresponding access control. The rubric matches on field names, variable names, and database column references. It doesn’t just check if the word “patient” appears — it evaluates whether the code path includes authorization checks before the data is accessed.

Access Control Rules. Role-based access control must exist on every PHI endpoint. The rubric checks for middleware, decorators, or guard functions on routes that handle PHI. A raw database query against a patient table without an RBAC check fails immediately.

Audit Trail Rules. Every PHI access must generate an audit log entry. The rubric looks for logging calls co-located with data access. It checks that the log includes who accessed the data, when, and for what purpose. HIPAA requires compliance documentation to be retained for six years, and audit logs are commonly kept for the same period. The rubric ensures the log format supports that retention.

Encryption Rules. PHI at rest must be encrypted. PHI in transit must use TLS. The rubric checks database connection strings for encryption parameters and API endpoints for HTTPS enforcement.
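As a rough illustration of the in-transit half of the encryption rules, here is a sketch that inspects a database URL and an API endpoint. It assumes PostgreSQL-style connection URLs, where the libpq `sslmode` parameter controls TLS. The function names are hypothetical, and encryption at rest is not covered here because it usually lives in infrastructure configuration rather than a connection string.

```python
from urllib.parse import parse_qs, urlparse

# libpq sslmode values that actually require an encrypted connection.
# "disable", "allow", and "prefer" can all fall back to plaintext.
ENCRYPTED_SSLMODES = {"require", "verify-ca", "verify-full"}


def db_url_enforces_tls(url: str) -> bool:
    """True if a PostgreSQL-style URL sets an sslmode that mandates TLS."""
    query = parse_qs(urlparse(url).query)
    mode = query.get("sslmode", [""])[-1]
    return mode in ENCRYPTED_SSLMODES


def endpoint_uses_https(url: str) -> bool:
    """True if an API endpoint URL uses the https scheme."""
    return urlparse(url).scheme == "https"


print(db_url_enforces_tls("postgresql://app@db.internal/phi?sslmode=verify-full"))
print(db_url_enforces_tls("postgresql://app@db.internal/phi"))
```

A missing `sslmode` is treated as a failure on purpose: the rubric should demand an explicit setting instead of trusting a driver default.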

SOC2: Trust Service Criteria

SOC2 operates on five trust service criteria: security, availability, processing integrity, confidentiality, and privacy. The rubric pack maps each criterion to code-level evidence.

Security (CC6). Authentication mechanisms must exist. The rubric checks for multi-factor auth configuration, session management, and credential storage patterns. Hardcoded credentials fail immediately. Secrets loaded from environment variables pass.

Availability (A1). Health check endpoints must exist. The rubric checks for /health or /readiness routes. It verifies that error handling includes retry logic or circuit breakers. A service that crashes on transient database errors fails the availability rubric.

Processing Integrity (PI1). Input validation must exist on all external-facing endpoints. The rubric checks for schema validation, type checking, and sanitization. Raw user input passed directly to a database query is an instant fail.

Confidentiality (C1). Data classification must be present. The rubric checks that sensitive fields are marked, that logging excludes sensitive data, and that API responses don’t leak internal identifiers.
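The Security criterion’s “hardcoded credentials fail, environment variables pass” check can be approximated with a line-level heuristic. This is a sketch under stated assumptions: the regex, the list of credential-looking names, and `find_hardcoded_secrets` are all hypothetical, and a production scanner would need entropy checks and an allowlist to keep false positives down.

```python
import re

# Hypothetical heuristic: a credential-looking name assigned a non-empty string literal.
HARDCODED_SECRET = re.compile(
    r"""(?ix)
    \b\w*(password|passwd|secret|api_key|token)\w*   # credential-looking identifier
    \s*=\s*                                          # plain assignment
    (["'])[^"']+\2                                   # non-empty quoted literal
    """
)


def find_hardcoded_secrets(source: str) -> list[int]:
    """Return 1-based line numbers that look like hardcoded credentials."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if HARDCODED_SECRET.search(line)
    ]


sample = (
    "import os\n"
    'DB_PASSWORD = "hunter2"\n'
    'API_KEY = os.environ["API_KEY"]\n'
)
print(find_hardcoded_secrets(sample))
```

The literal on line 2 is flagged. The environment lookup on line 3 is not, because the right-hand side is not a quoted string.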

PCI-DSS: Payment Card Industry

PCI-DSS is prescriptive. The rubric pack reflects that specificity.

Cardholder Data (Requirement 3). The rubric matches on patterns that indicate credit card numbers, CVVs, and expiration dates. Any code that stores card numbers must render them unreadable through tokenization, truncation, or strong encryption. CVVs are stricter: PCI-DSS prohibits storing them after authorization at all, encrypted or not. Storing raw card numbers in any form (database, log file, cache) is an automatic critical failure.
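One common way to match “patterns that indicate credit card numbers” is a digit-run regex filtered by the Luhn checksum, which discards most order IDs and timestamps. The sketch below assumes that approach; the source does not say which detection method the rubric uses, and `find_card_numbers` is a hypothetical name.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CANDIDATE_PAN = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, check mod 10."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit strings in text that look like card numbers and pass Luhn."""
    hits = []
    for match in CANDIDATE_PAN.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits


# 4111 1111 1111 1111 is a widely used test card number.
print(find_card_numbers('log.info("charged 4111 1111 1111 1111")'))
```

A hit inside a logging call, as in the usage line, is exactly the “log file” case that Requirement 3 treats as a critical failure.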

Network Segmentation (Requirement 1). Code that configures network rules must enforce segmentation between cardholder data environments and everything else. The rubric checks firewall rule definitions, security group configurations, and network policy manifests for proper isolation.

Access Management (Requirement 7). The principle of least privilege must be enforced. The rubric checks IAM policy definitions for overly broad permissions. A policy that grants * access to a resource containing cardholder data fails.

Monitoring (Requirement 10). Every access to cardholder data must be logged. The rubric checks for audit logging on all payment-related endpoints. Log entries must include timestamp, user identity, action performed, and resource accessed.
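The Requirement 7 wildcard check is easy to picture against an AWS-style IAM policy document. This sketch assumes that JSON shape (`Statement`, `Effect`, `Action`, `Resource`); the function name is hypothetical, and treating service-wide actions such as `s3:*` as overly broad is a judgment call you may want to tune.

```python
def overly_broad_statements(policy: dict) -> list[dict]:
    """Return Allow statements that use a wildcard action or a bare '*' resource."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]
    flagged = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        resources = statement.get("Resource", [])
        # Action and Resource may each be a string or a list of strings.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        if wildcard_action or "*" in resources:
            flagged.append(statement)
    return flagged


policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::cardholder-data/*",
        },
        {"Effect": "Allow", "Action": "*", "Resource": "*"},
    ],
}
print(len(overly_broad_statements(policy)))
```

Only the second statement is flagged. The first is scoped to one action on one bucket, which is what least privilege looks like in a policy file.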

How Evidence Gets Generated

When a developer opens a PR that touches code in a regulated area, the flow is:

- The diff is extracted and parsed into change units.

- The rubric pack evaluates each change unit against applicable rules.

- For each rule, the evaluation produces a PASS, FAIL, or NEEDS_REVIEW result.

- Pass results generate evidence entries automatically.

- Fail results block the merge and explain why.

- NEEDS_REVIEW results get escalated to a human reviewer with context about what the rubric expected.
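The flow above can be sketched as a small merge gate. Everything here is illustrative: `run_gate`, the `Check` signature, and the toy `phi_check` are assumptions, and the source does not say whether a pending NEEDS_REVIEW also holds the merge, so this sketch blocks only on FAIL.

```python
from enum import Enum
from typing import Callable


class Result(str, Enum):
    PASS = "PASS"
    FAIL = "FAIL"
    NEEDS_REVIEW = "NEEDS_REVIEW"


# Hypothetical signature: a rule check takes a change unit's code and returns a Result.
Check = Callable[[str], Result]


def run_gate(change_units: dict[str, str], checks: dict[str, Check]) -> dict:
    """Evaluate every change unit against every rule and sort the outcomes."""
    evidence, blockers, escalations = [], [], []
    for unit, code in change_units.items():
        for rule_id, check in checks.items():
            result = check(code)
            record = {"rule_id": rule_id, "change_unit": unit, "result": result.value}
            if result is Result.PASS:
                evidence.append(record)      # becomes an evidence entry
            elif result is Result.FAIL:
                blockers.append(record)      # blocks the merge
            else:
                escalations.append(record)   # goes to a human reviewer
    return {
        "merge_blocked": bool(blockers),
        "evidence": evidence,
        "blockers": blockers,
        "escalations": escalations,
    }


def phi_check(code: str) -> Result:
    """Toy stand-in for a real rule: PHI access needs a recognizable guard."""
    if "ssn" not in code or "require_role(" in code:
        return Result.PASS
    if "authorize" in code:  # unfamiliar guard: let a human decide
        return Result.NEEDS_REVIEW
    return Result.FAIL


units = {
    "a.py:f": "require_role(u); return p.ssn",
    "b.py:g": "return p.ssn",
    "c.py:h": "authorize(u); return p.ssn",
}
outcome = run_gate(units, {"hipaa-phi-001": phi_check})
print(outcome["merge_blocked"], len(outcome["escalations"]))
```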

The evidence entry looks like this:

    {
      "rule_id": "hipaa-phi-001",
      "result": "PASS",
      "timestamp": "2026-03-24T14:30:00Z",
      "change_unit": "src/api/patient.ts:getPatientRecord",
      "evidence": {
        "access_control_method": "rbac_middleware",
        "audit_log_destination": "cloudwatch/phi-access-logs",
        "reviewer_model": "claude-sonnet-4-6",
        "review_confidence": 0.94
      },
      "hash": "sha256:a1b2c3..."
    }

That entry gets sealed into the evidence bundle with a hash chain. An auditor six months later can verify that the evidence wasn’t tampered with. They can see exactly which rule was evaluated, what the AI reviewer found, and why the change was approved.
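A hash chain is what makes that tamper check possible: each entry’s hash covers its own content plus the previous entry’s hash, so editing any entry breaks every link after it. The sketch below shows one way to build and verify such a chain. The `prev_hash` field, the all-zero genesis value, and the canonical-JSON serialization are assumptions; the source does not describe the actual sealing format.

```python
import hashlib
import json

GENESIS = "sha256:" + "0" * 64  # assumed starting value for the first link


def seal(entry: dict, prev_hash: str) -> dict:
    """Return a copy of entry with prev_hash and a hash over content + prev_hash."""
    body = {k: v for k, v in entry.items() if k not in ("hash", "prev_hash")}
    # Canonical serialization so the same content always hashes the same way.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")) + prev_hash
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return {**body, "prev_hash": prev_hash, "hash": f"sha256:{digest}"}


def seal_chain(entries: list[dict]) -> list[dict]:
    """Seal entries in order, linking each one to its predecessor."""
    chain, prev = [], GENESIS
    for entry in entries:
        sealed = seal(entry, prev)
        chain.append(sealed)
        prev = sealed["hash"]
    return chain


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry makes this False."""
    prev = GENESIS
    for sealed in chain:
        if sealed.get("prev_hash") != prev or seal(sealed, prev)["hash"] != sealed["hash"]:
            return False
        prev = sealed["hash"]
    return True


chain = seal_chain([
    {"rule_id": "hipaa-phi-001", "result": "PASS"},
    {"rule_id": "custom-gxp-001", "result": "PASS"},
])
tampered = [dict(chain[0], result="FAIL"), chain[1]]
print(verify_chain(chain), verify_chain(tampered))
```

Verification needs nothing but the bundle itself, which is why an auditor can run it without access to the system that produced the evidence.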

Custom Rubric Creation

The three framework packs cover the common cases. But every organization has rules specific to its business. Custom rubrics extend the pack format.

You define your rules in the same YAML structure. Match patterns identify the code areas you care about. Requirements define what must be present. Evidence fields specify what gets recorded.

A biotech company might add:

    - id: custom-gxp-001
      name: GxP Data Integrity
      description: Changes to lab data processing must maintain ALCOA+ principles
      severity: critical
      match:
        paths:
          - "src/lab/**"
          - "src/data-processing/**"
      require:
        - data_integrity_check
        - attribution_metadata
      evidence_fields:
        - data_source
        - processing_step
        - attribution_user

A fintech company might add rules about transaction amount limits, currency conversion accuracy, or reconciliation checks. The framework is the same. The specifics are yours.
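Path-based matching like the `match.paths` entries in the GxP rule can be sketched with the standard library. This assumes simple shell-style globbing where `*` also crosses directory separators, which is how `fnmatch` behaves; the rubric system’s real glob semantics may differ, and `rule_applies` is a hypothetical helper.

```python
from fnmatch import fnmatchcase

GXP_PATHS = ["src/lab/**", "src/data-processing/**"]


def rule_applies(changed_path: str, path_globs: list[str]) -> bool:
    """True if the changed file falls under any of the rule's path globs."""
    # fnmatchcase is case-sensitive on every platform, so results are reproducible.
    return any(fnmatchcase(changed_path, glob) for glob in path_globs)


print(rule_applies("src/lab/assay/parse.py", GXP_PATHS))
print(rule_applies("src/api/patient.ts", GXP_PATHS))
```

Scoping by path keeps a custom rule from firing on every PR. Only changes under the lab and data-processing trees pay the cost of the GxP checks.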

Export Formats

Evidence that lives only in your CI/CD system isn’t evidence an auditor can use. The rubric system exports in three formats.

JSON. Machine-readable, suitable for importing into GRC platforms, SIEM systems, or your own compliance dashboard. Every evidence entry includes its hash chain reference for verification.

SARIF. The Static Analysis Results Interchange Format is an OASIS standard for static analysis tool output. Many security tools and code hosts, GitHub code scanning among them, already read SARIF, so the rubric evidence can slot into your existing workflow without another dashboard to check.
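For a sense of what the SARIF export involves, here is a sketch that maps evidence entries onto a minimal SARIF 2.1.0 log. The `kind` and `level` properties and their values come from the SARIF specification. The `to_sarif` function, the `rubric-evaluator` tool name, and the mapping from rubric results are assumptions.

```python
import json

# Rubric result -> SARIF result.kind (assumed mapping).
KIND = {"PASS": "pass", "FAIL": "fail", "NEEDS_REVIEW": "review"}


def to_sarif(entries: list[dict], tool_name: str = "rubric-evaluator") -> dict:
    """Convert evidence entries into a minimal SARIF 2.1.0 log object."""
    results = []
    for entry in entries:
        # change_unit looks like "path/to/file.ts:symbol"; keep only the path.
        path = entry["change_unit"].split(":", 1)[0]
        kind = KIND[entry["result"]]
        results.append({
            "ruleId": entry["rule_id"],
            "kind": kind,
            # SARIF only allows a non-"none" level when kind is "fail".
            "level": "error" if kind == "fail" else "none",
            "message": {"text": f"{entry['rule_id']}: {entry['result']} for {entry['change_unit']}"},
            "locations": [{"physicalLocation": {"artifactLocation": {"uri": path}}}],
        })
    return {
        "version": "2.1.0",
        "runs": [{"tool": {"driver": {"name": tool_name}}, "results": results}],
    }


sarif = to_sarif([{
    "rule_id": "hipaa-phi-001",
    "result": "PASS",
    "change_unit": "src/api/patient.ts:getPatientRecord",
}])
print(json.dumps(sarif, indent=2))
```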

ZIP. A portable evidence bundle containing the raw evidence, the rubric that generated it, the diff that was evaluated, and the hash chain for verification. Hand this to an auditor. They can verify it offline without access to your systems.
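A portable bundle along those lines takes only a few lines with the standard library. The file names inside the archive and the `build_bundle` function are hypothetical, and this sketch assumes the hash chain travels inside the evidence entries rather than as a separate file.

```python
import io
import json
import zipfile


def build_bundle(evidence: list[dict], rubric_yaml: str, diff_text: str) -> bytes:
    """Pack evidence, the rubric that produced it, and the evaluated diff into a ZIP."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as bundle:
        bundle.writestr("evidence.json", json.dumps(evidence, indent=2, sort_keys=True))
        bundle.writestr("rubric.yaml", rubric_yaml)
        bundle.writestr("change.diff", diff_text)
    return buffer.getvalue()


evidence = [{"rule_id": "hipaa-phi-001", "result": "PASS"}]
data = build_bundle(evidence, "rubric_pack:\n  framework: HIPAA\n", "--- a/x\n+++ b/x\n")

# An auditor can open the archive offline and read the entries back.
with zipfile.ZipFile(io.BytesIO(data)) as bundle:
    print(sorted(bundle.namelist()))
```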

What This Changes

The old model: change happens, someone fills out a form, form goes in a folder, auditor reviews the folder. Latency: weeks to months. Accuracy: whatever the form-filler remembered.

The new model: change happens, rubric evaluates the change, evidence is generated, evidence is sealed and exported. Latency: seconds. Accuracy: whatever the code actually does, verified by multiple AI reviewers.

The rubric pack doesn’t replace your compliance team. It replaces the manual evidence generation that your compliance team spends 60% of their time on. They stop filling out forms and start reviewing the edge cases that rubrics flag as NEEDS_REVIEW.

Your auditor gets better evidence. Your compliance team gets more interesting work. Your development velocity doesn’t drop every time audit season arrives.

The evidence exists because the system generated it. Not because someone remembered to document it.

Building in a regulated industry and wondering how rubric packs map to your specific framework? Book a call and bring your compliance requirements. I’ll show you how the rubric system handles them.