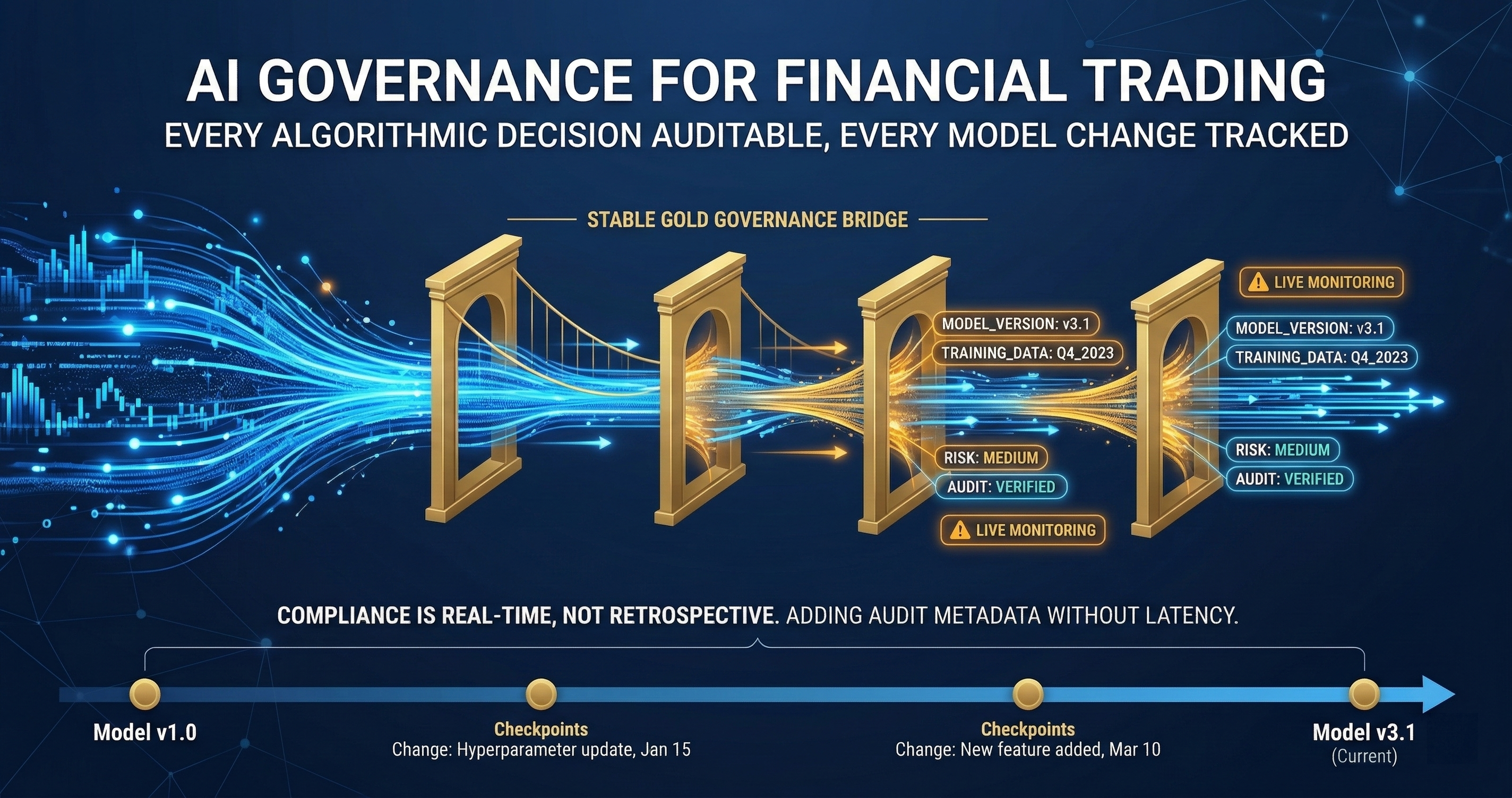

GuardSpine for Finance: Governing AI in Trading and Compliance

Every trading algorithm change needs a paper trail. Every compliance report needs an audit log. Every financial model spreadsheet needs diff tracking. Most firms do this manually. That doesn’t scale.

I built a trading system with 13 capital gates, circuit breakers, and a -2% daily loss kill switch. The system works. But the governance around the system — tracking who changed what, when, and why — was duct tape. Git commits and Slack threads. The kind of evidence that makes a regulator frown.

Financial services has some of the strictest governance requirements of any industry. It also has the most to gain from getting governance right.

The Regulatory Landscape

Financial institutions operate under overlapping regulatory frameworks. Each one has specific requirements for change management, audit trails, and model governance.

SR 11-7 (Federal Reserve). The supervisory guidance on model risk management. Every model used for a business decision must have documented development, validation, ongoing monitoring, and change management. When AI writes or modifies a model, every requirement in SR 11-7 still applies. The model doesn’t get a pass because a machine wrote it.

SOX Section 404. Internal controls over financial reporting. Changes to systems that produce financial reports must be documented, approved, and tested. An AI that modifies a revenue recognition calculation triggers the same change control process as a human developer.

GLBA (Gramm-Leach-Bliley). Safeguards for customer financial information. Code that handles customer data must enforce access controls, and changes to that code must be tracked.

MiFID II (EU) / Reg SCI (SEC). Requirements for algorithmic trading and critical market systems: pre-trade risk controls and kill switches under MiFID II, and systems integrity and change management for covered entities under Reg SCI.

The common requirement across all of them: when something changes, you must know what changed, who approved it, and what evidence supports the approval. GuardSpine generates that evidence automatically.

Three Attack Surfaces in Finance

Trading Algorithm Changes (CodeGuard)

A quant modifies a signal calculation. A developer changes the order routing logic. An AI suggests an optimization to the execution algorithm. Each of these changes can move money. Each needs governance.

CodeGuard classifies trading code changes by risk tier:

L0-L1: Logging changes, comment updates, test additions. Auto-approve with evidence.

L2: Configuration changes to existing strategies. Non-critical parameter adjustments. Notify the risk team, generate evidence, allow merge.

L3: New signal logic, changes to position sizing, modifications to risk limit calculations. Multi-model council review. At least two AI reviewers must agree before a human approves.

L4: Changes to order routing, execution logic, kill switch mechanisms, or margin calculations. Full council review, mandatory human approval from a senior quant or risk officer, evidence bundle sealed and archived.

The risk tier classification isn’t arbitrary. It maps to regulatory expectations. SR 11-7 distinguishes between material and immaterial model changes. Tiers L3-L4 map to material changes. Tiers L0-L2 map to immaterial changes. The evidence bundle records the classification rationale.
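
Here is a minimal sketch of what path-based tier classification could look like. The path patterns, the `TIER_RULES` table, and the `classify_change` function are hypothetical names chosen for illustration; they are not CodeGuard's actual configuration format.

```python
from fnmatch import fnmatch

# Hypothetical rules, checked from the highest tier down.
# A real repository would define its own patterns.
TIER_RULES = [
    ("L4", ["execution/*", "routing/*", "risk/kill_switch*", "margin/*"]),
    ("L3", ["signals/*", "sizing/*", "risk/limits*"]),
    ("L2", ["config/strategies/*"]),
    ("L1", ["tests/*", "logging/*"]),
]

# Materiality mapping described in the text: L3-L4 are material changes.
MATERIAL_TIERS = {"L3", "L4"}


def classify_change(changed_paths):
    """Return (tier, rationale) for the highest-risk path in a change."""
    for tier, patterns in TIER_RULES:
        for path in changed_paths:
            for pattern in patterns:
                if fnmatch(path, pattern):
                    return tier, f"{path} matches {pattern}"
    return "L0", "no risk-bearing paths matched"


def is_material(tier):
    """True when the tier counts as a material model change."""
    return tier in MATERIAL_TIERS


tier, rationale = classify_change(["tests/test_signals.py", "signals/momentum.py"])
print(tier, is_material(tier), rationale)
```

The highest tier wins: a change that touches both a test file and signal logic is classified by the signal logic. The rationale string is the kind of thing that would go into the evidence bundle.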

For algorithmic trading specifically, every code change that affects order generation or risk management must produce evidence that can be presented to regulators during an examination. The evidence bundle format (the diff, the review, the approval, and the hash chain) is designed to meet this requirement.

Compliance Reports (PDFGuard)

Quarterly filings. Annual reports. Risk disclosures. Regulatory correspondence. Financial institutions produce enormous volumes of documents that are reviewed by regulators.

When AI assists with drafting these documents, PDFGuard tracks what the AI contributed and what a human modified. A compliance officer who uses AI to draft a SAR (Suspicious Activity Report) can show exactly which sections were AI-generated, which were human-edited, and who approved the final version.

The evidence matters for liability. If a regulatory filing contains an error, the question is: who wrote it, who reviewed it, and what process was followed? PDFGuard answers all three questions with timestamped, tamper-evident evidence.

Document versioning in finance isn’t optional. It’s examined. During a regulatory exam, the examiner will ask to see the change history of specific documents. PDFGuard evidence bundles provide that history at the paragraph level, not just the file level.
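
A rough sketch of paragraph-level change detection, using Python's standard `difflib`. The function name `paragraph_changes` and the blank-line paragraph convention are my assumptions for illustration. This shows only the diff step; attributing each edit to an AI or a human would need author metadata recorded alongside it.

```python
import difflib


def paragraph_changes(old_text, new_text):
    """List paragraph-level edits between two drafts.

    Paragraphs are assumed to be separated by blank lines.
    """
    old = [p.strip() for p in old_text.split("\n\n") if p.strip()]
    new = [p.strip() for p in new_text.split("\n\n") if p.strip()]
    matcher = difflib.SequenceMatcher(a=old, b=new, autojunk=False)
    changes = []
    for op, i1, i2, j1, j2 in matcher.get_opcodes():
        if op != "equal":
            changes.append({"op": op, "old": old[i1:i2], "new": new[j1:j2]})
    return changes


draft_v1 = "Summary of activity.\n\nAmount: 9,500 USD.\n\nFiled by compliance."
draft_v2 = "Summary of activity.\n\nAmount: 9,800 USD.\n\nFiled by compliance.\n\nReviewed."
for change in paragraph_changes(draft_v1, draft_v2):
    print(change)
```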

Financial Model Spreadsheets (SheetGuard)

The finance industry runs on spreadsheets. Valuation models, risk calculations, forecasting tools, reconciliation workbooks. Many of these spreadsheets feed directly into regulated processes.

SheetGuard captures cell-level changes:

    {
      "cell": "D14",
      "old_value": "=B14*C14*0.035",
      "new_value": "=B14*C14*0.042",
      "type": "formula_modification",
      "impact": ["D15", "D16", "E14", "F22"],
      "risk_assessment": "L3 - modifies interest rate assumption feeding P&L projection"
    }

A single cell change in a risk model can cascade through dozens of dependent cells. SheetGuard traces the impact chain. The evidence bundle shows not just what changed, but what downstream calculations were affected.
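
Impact tracing is a graph walk. The sketch below assumes a dependency map from each cell to the cells that reference it directly; the `DEPENDENTS` graph is made up for illustration so that a change to D14 reaches the same four cells as the example record above.

```python
from collections import deque

# Hypothetical dependency graph: each cell maps to the cells whose
# formulas reference it directly.
DEPENDENTS = {
    "D14": ["D15", "E14"],
    "D15": ["D16"],
    "E14": ["F22"],
}


def downstream_cells(changed_cell, dependents):
    """Breadth-first walk returning every cell affected by a change."""
    seen = set()
    queue = deque([changed_cell])
    while queue:
        cell = queue.popleft()
        for dep in dependents.get(cell, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return sorted(seen)


print(downstream_cells("D14", DEPENDENTS))  # ['D15', 'D16', 'E14', 'F22']
```

The `seen` set keeps the walk from looping if the workbook contains circular references.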

For model risk management under SR 11-7, this is the change documentation. The model changed. Here’s exactly what changed. Here’s what it affects. Here’s who approved it. Here’s the evidence.

Model Risk Management Artifacts

SR 11-7 requires specific artifacts for model governance. GuardSpine evidence bundles map to these artifacts directly.

Model Development Documentation. When AI assists in building a model, CodeGuard captures every code change, every review, and every approval. The accumulated evidence bundles form the development documentation.

Model Validation Evidence. The council review process produces independent assessments from multiple AI models. These assessments, combined with human review, can serve as part of the validation evidence. The disagreements between council members are particularly valuable: they highlight areas where additional validation testing is warranted.

Ongoing Monitoring Records. Scheduled governance workflows (via n8n integration) can run periodic reviews of model code, flagging drift from validated parameters. The evidence from these periodic reviews forms the ongoing monitoring record.
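
A sketch of the drift check a scheduled review might run, assuming the validated parameters are stored as a simple name-to-value mapping. The function name `find_drift` and the parameter names are hypothetical, and the scheduling itself is out of scope here.

```python
import math


def find_drift(validated, current, rel_tol=1e-9):
    """Return parameters whose current value differs from the validated one."""
    drift = {}
    for name in sorted(set(validated) | set(current)):
        old, new = validated.get(name), current.get(name)
        both_numeric = isinstance(old, (int, float)) and isinstance(new, (int, float))
        if both_numeric:
            same = math.isclose(old, new, rel_tol=rel_tol)
        else:
            # Covers strings and parameters that were added or removed.
            same = old == new
        if not same:
            drift[name] = {"validated": old, "current": new}
    return drift


validated = {"lookback_days": 126, "rate": 0.035}
current = {"lookback_days": 126, "rate": 0.042}
print(find_drift(validated, current))
```

An empty result means the deployed parameters still match what was validated. A non-empty result is the flag, and the result itself becomes part of the monitoring record.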

Change Management Records. Every modification to a model, whether made by a human or assisted by AI, produces an evidence bundle. The hash chain links sequential changes, creating an unbroken audit trail from initial development through every subsequent modification.
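
To make the hash chain concrete, here is a minimal sketch using SHA-256 over canonical JSON. The field names (`prev_hash`, `hash`), the all-zero genesis value, and the functions `seal` and `verify_chain` are assumptions for illustration, not GuardSpine's actual bundle format.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder for "no previous bundle"


def _digest(body):
    """SHA-256 of the bundle body serialized with sorted keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def seal(bundle, prev_hash):
    """Return a sealed copy of bundle linked to the previous bundle's hash."""
    body = dict(bundle, prev_hash=prev_hash)
    return dict(body, hash=_digest(body))


def verify_chain(chain, genesis=GENESIS):
    """Check every bundle's own hash and its link to the previous bundle."""
    prev = genesis
    for sealed in chain:
        body = {k: v for k, v in sealed.items() if k != "hash"}
        if sealed.get("prev_hash") != prev or sealed.get("hash") != _digest(body):
            return False
        prev = sealed["hash"]
    return True


first = seal({"change": "initial model", "approver": "risk-officer"}, GENESIS)
second = seal({"change": "adjust lookback", "approver": "senior-quant"}, first["hash"])
print(verify_chain([first, second]))  # True

tampered = dict(first, approver="someone-else")
print(verify_chain([tampered, second]))  # False
```

Editing any field in an earlier bundle changes its hash, which breaks both that bundle's seal and the link from every bundle after it.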

The Trading System Pattern

I run a trading system built on dual momentum and antifragility principles. Thirteen capital gates scale from $200 to $10M. Circuit breakers halt trading on a 2% daily loss or a 10% drawdown.
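
The circuit breaker rule is simple enough to sketch. This version assumes the daily loss is measured from start-of-day equity and the drawdown from peak equity; the function name `should_halt` and those measurement choices are illustrative assumptions, not the production code.

```python
DAILY_LOSS_LIMIT = 0.02  # halt at a 2% loss from start-of-day equity
DRAWDOWN_LIMIT = 0.10    # halt at a 10% decline from peak equity


def should_halt(start_of_day_equity, peak_equity, current_equity):
    """Return the reason trading should halt, or None to keep trading."""
    daily_loss = (start_of_day_equity - current_equity) / start_of_day_equity
    drawdown = (peak_equity - current_equity) / peak_equity
    if daily_loss >= DAILY_LOSS_LIMIT:
        return "daily_loss"
    if drawdown >= DRAWDOWN_LIMIT:
        return "drawdown"
    return None


print(should_halt(10_000, 10_500, 9_790))  # daily_loss
print(should_halt(10_000, 11_200, 9_950))  # drawdown
print(should_halt(10_000, 10_000, 9_900))  # None
```

A few lines of logic, and every one of them can move money. That is why changes here sit at the top risk tier.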

Here’s how governance maps to this architecture:

Gate transition changes (L4). Moving capital from one gate to the next changes the risk profile. Every gate transition modification requires council review and human approval. The evidence bundle records the gate parameters, the review findings, and the approval.

Signal calculation changes (L3). The momentum signals determine what gets bought and sold. Changes to signal logic get multi-model review. The council catches edge cases that a single reviewer misses — boundary conditions in lookback periods, division-by-zero scenarios in momentum calculations, timezone handling in market data.

Circuit breaker modifications (L4). The kill switch is the last line of defense. Any change to circuit breaker logic requires unanimous council review plus approval from two humans. The evidence bundle for these changes is the most detailed in the system.

Backtesting infrastructure (L2). Changes to backtesting code affect how you evaluate strategy performance. Important, but not directly risk-bearing. Notify the risk team, generate evidence, allow merge.

Monitoring and logging (L0-L1). Changes to how the system reports its own behavior. Auto-approve with evidence. Low risk, high frequency.
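
The approval rules for the three highest-risk components can be written down as a merge check. This is a sketch: the `Requirement` structure and `may_merge` function are hypothetical, and the threshold of two agreeing AI reviewers for non-unanimous cases is an assumption carried over from the L3 tier rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    tier: str
    unanimous_council: bool
    human_approvals: int


# Components and tiers from the list above. A single human approval for
# signal changes is an assumption based on the L3 tier rule.
REQUIREMENTS = {
    "gate_transition": Requirement("L4", unanimous_council=False, human_approvals=1),
    "signal_calculation": Requirement("L3", unanimous_council=False, human_approvals=1),
    "circuit_breaker": Requirement("L4", unanimous_council=True, human_approvals=2),
}


def may_merge(component, council_votes, human_approvers):
    """Check collected approvals against the component's requirement.

    council_votes: list of booleans, one per AI reviewer.
    human_approvers: iterable of approver identifiers.
    """
    req = REQUIREMENTS[component]
    approvals = sum(council_votes)
    if req.unanimous_council:
        council_ok = len(council_votes) > 0 and approvals == len(council_votes)
    else:
        # Assumption: at least two agreeing reviewers.
        council_ok = approvals >= 2
    # A set prevents one person from counting as two approvers.
    return council_ok and len(set(human_approvers)) >= req.human_approvals


print(may_merge("circuit_breaker", [True, True, True], ["alice", "bob"]))   # True
print(may_merge("circuit_breaker", [True, True, False], ["alice", "bob"]))  # False
```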

Evidence as Examination Preparation

Regulatory examinations in finance are not hypothetical. They happen on a schedule. OCC exams, Fed exams, SEC exams, FINRA exams. When the examiner arrives, they ask for documentation.

The traditional approach: spend weeks before the exam pulling together evidence from git logs, Jira tickets, email threads, and the memories of people who may or may not still work at the firm. Assemble it into a binder. Hope it’s complete enough.

The GuardSpine approach: the evidence already exists. Every change produced an evidence bundle. Every bundle is sealed and verifiable. Export the bundles for the examination period. Hand them to the examiner. The evidence is more complete, more accurate, and more verifiable than anything assembled from memory.

Examination preparation time drops from weeks to hours. More importantly, the evidence quality improves. Examiners notice when evidence is reconstructed versus contemporaneous. GuardSpine evidence is always contemporaneous — it’s generated at the time of the change.

Cost of Getting It Wrong

Financial institutions face specific costs for governance failures:

Regulatory fines. OCC consent orders, SEC enforcement actions, FINRA fines. These range from millions to billions depending on the violation. Wells Fargo, Deutsche Bank, JPMorgan — the headlines speak for themselves.

Trading losses from ungoverned changes. Knight Capital lost $440 million in 45 minutes in 2012 due to a deployment error in trading code. Governance might not have prevented the bug, but a governed deployment process could have caught the failure: the SEC found that the new code reached only seven of eight servers and that no second technician reviewed the deployment.

Model risk events. An unvalidated model change that produces incorrect risk calculations can lead to positions that exceed risk limits. The loss comes from the position. The regulatory consequence comes from the governance failure.

Reputational damage. Clients, counterparties, and regulators all assess whether a firm has adequate controls. A governance failure that becomes public affects business relationships across the organization.

The cost of GuardSpine is a rounding error compared to any of these scenarios. The evidence bundles are insurance against governance failures that have measurable, often enormous, financial consequences.

Starting in Finance

Start with your highest-risk code: trading algorithms and risk models. Install CodeGuard on those repositories. Configure L3-L4 risk tiers for any change that affects order generation, risk calculation, or regulatory reporting.

Add SheetGuard for the financial models that feed your regulatory filings. Cell-level change tracking with evidence bundles replaces the manual process of documenting spreadsheet changes.

Add PDFGuard for compliance documents. Every AI-assisted draft gets evidence.

The first regulatory exam after deployment is when the investment pays off. Instead of weeks of evidence gathering, you export the bundles. The examiner gets better evidence. Your compliance team gets their weeks back.

Running a financial institution where AI touches trading, risk, or compliance systems? Book a call and bring your regulatory requirements. I’ll map the guard lanes to your examination preparation process.