Vision Models, Deterministic Diffs, and the Moment It All Clicked

How image diffing turned a code review tool into a governance platform. Four guard lanes, deterministic diffs, and the architecture that emerged.

CodeGuard worked. PRs were getting triaged, reviewed by AI councils, sealed into evidence bundles. Then I looked at my desk and realized: the regulatory filing my client was worried about wasn’t code. It was a PDF. The financial model wasn’t code. It was a spreadsheet. The design review wasn’t code. It was images. I needed the same system for everything.

The Desk That Changed the Architecture

I’d spent months building a code review governance system that actually produced evidence. Not “someone clicked approve” — real evidence bundles with deterministic diffs, risk assessments, and sealed audit trails. It was working. Teams were shipping faster because they had proof of review, not just a green checkmark.

But code is maybe 20% of the artifacts that matter in a regulated organization. The other 80% — regulatory filings, SOPs, financial models, clinical images, design specs — those were still governed by “Version 3 FINAL (reviewed by Sarah).docx” and a prayer.

The moment I saw a biotech client’s 510(k) submission get partially rewritten by an AI assistant with zero diff trail, I knew CodeGuard was the wrong scope. The problem was never code review. The problem was change governance for every artifact type.

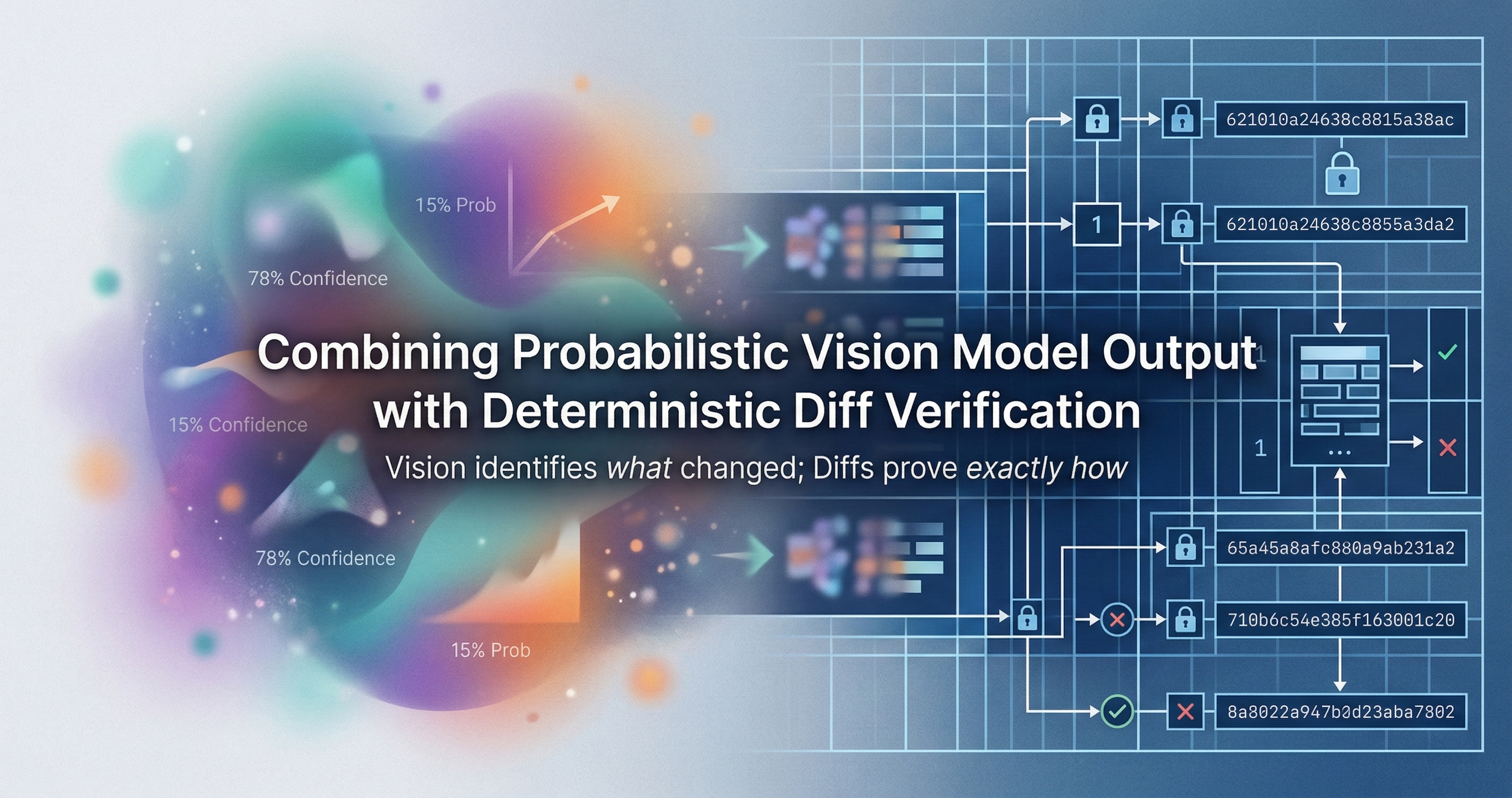

Vision Models Made It Possible. Deterministic Diffs Made It Reliable.

Here’s the thing about GPT-4V, Gemini Pro Vision, and Claude 3.5 Sonnet: they can see. Feed them two versions of a document and they’ll tell you what changed. That’s impressive. It’s also not enough.

Vision models hallucinate. They miss things. They confidently describe changes that didn’t happen. If your governance system depends on an AI model’s opinion of what changed, you don’t have governance. You have an expensive guess.

The pattern I landed on separates the jobs completely: deterministic diffs tell you what changed. AI interpretation tells you why it matters. Human approval decides whether it ships.

Deterministic diff + AI interpretation + human approval = governed change. Remove any leg of that tripod and the whole thing falls over.

PDFGuard: Page-Level Pixel Diffs

PDFs are the worst. They’re not structured data. They’re a rendering format masquerading as a document format. Two PDFs can look identical and have completely different internal representations. The same PDF can render differently from one viewer to the next when its fonts aren’t embedded.

So I went pixel-level. PDFGuard renders each page of both versions to images, computes pixel-level diffs, and highlights exactly what changed. That’s the deterministic layer: no AI involved, no interpretation, just math. Red pixels mean something changed on the rendered page. No red pixels mean nothing visible changed. Binary. Verifiable.

Then the vision model gets the diff overlay and the two source pages. Its job is semantic: “Section 4.2 regulatory language was softened: ‘must comply’ became ‘should consider complying.’” That’s the kind of change that matters enormously in a regulatory filing and is easy to miss when it’s buried in a 200-page PDF.

The human reviewer sees both: the exact pixels that changed and the AI’s read on what those changes mean. They approve or reject with full context. The evidence bundle seals it all.
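A minimal sketch of that deterministic layer, assuming each page has already been rasterized upstream into a same-sized grid of RGB tuples. The names `diff_page` and `PageDiff` are hypothetical, chosen for illustration, and are not PDFGuard's actual API.

```python
from dataclasses import dataclass

Pixel = tuple[int, int, int]   # (R, G, B), 0-255 each
Page = list[list[Pixel]]       # rows of pixels; rasterization is assumed to happen upstream


@dataclass(frozen=True)
class PageDiff:
    changed: list[tuple[int, int]]   # (x, y) of every pixel that differs

    @property
    def has_changes(self) -> bool:
        return bool(self.changed)


def diff_page(old: Page, new: Page) -> PageDiff:
    """Compare two same-sized rasterized pages pixel by pixel, with no tolerance."""
    if len(old) != len(new) or any(len(a) != len(b) for a, b in zip(old, new)):
        raise ValueError("pages must be rendered at identical dimensions")
    changed = [
        (x, y)
        for y, (row_old, row_new) in enumerate(zip(old, new))
        for x, (p_old, p_new) in enumerate(zip(row_old, row_new))
        if p_old != p_new
    ]
    return PageDiff(changed)


white, black = (255, 255, 255), (0, 0, 0)
page_v1 = [[white, white, white], [white, black, white]]
page_v2 = [[white, white, white], [white, black, black]]

result = diff_page(page_v1, page_v2)
print(result.has_changes, result.changed)   # True [(2, 1)]
```

The changed coordinates are what would be painted red in the overlay. Exact equality is the point: the same two inputs always yield the same list, which is what makes the result checkable by anyone who reruns it.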

SheetGuard: Cell-Level Formula Diffs

Spreadsheets are deceptively dangerous. A single formula change in a financial model can swing a valuation by millions. And unlike code, spreadsheets don’t have pull requests. They have “I emailed you the updated version.”

SheetGuard extracts every cell’s formula and value, then computes exact cell-level diffs. Not “this sheet changed” — “cell D47 changed from =SUM(D2:D46)*0.15 to =SUM(D2:D46)*0.12.” Deterministic. Exact. No ambiguity.

The AI layer reads those formula diffs and explains the business impact: “Tax rate assumption dropped from 15% to 12%, reducing projected liability by $2.3M across the forecast period.” That’s the interpretation a CFO needs. But it’s anchored to the exact cell change, not hallucinated from vibes.
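The cell-level diff itself is simple once extraction is done. This sketch assumes each workbook version has already been flattened into a dict of cell address to formula (or literal value) string. `CellChange` and `diff_cells` are illustrative names, not SheetGuard's real interface.

```python
from typing import NamedTuple, Optional


class CellChange(NamedTuple):
    cell: str
    old: Optional[str]   # None means the cell did not exist in the old version
    new: Optional[str]   # None means the cell was removed in the new version


def diff_cells(old: dict[str, str], new: dict[str, str]) -> list[CellChange]:
    """Return every cell whose content differs, sorted lexicographically by address."""
    changes = []
    for cell in sorted(old.keys() | new.keys()):
        before, after = old.get(cell), new.get(cell)
        if before != after:
            changes.append(CellChange(cell, before, after))
    return changes


model_v1 = {"D46": "1200", "D47": "=SUM(D2:D46)*0.15"}
model_v2 = {"D46": "1200", "D47": "=SUM(D2:D46)*0.12", "D48": "=D47*2"}

for change in diff_cells(model_v1, model_v2):
    print(f"{change.cell}: {change.old!r} -> {change.new!r}")
```

Comparing formula strings rather than computed values is a deliberate choice in this sketch: a changed rate shows up even if the displayed number happens to round to the same figure.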

I’ve seen organizations where a single wrong formula in a board deck led to a funding round priced on bad numbers. Nobody caught it because nobody diffed the spreadsheet. They compared the final charts and the charts looked “about right.”

ImageGuard: Pixel Diff + Object Detection

Images were the hardest to get right. A pixel diff of two versions of a circuit board layout produces a red blob. Useful for confirming something changed, useless for understanding what.

ImageGuard runs the pixel diff first — that’s the deterministic anchor. Then it feeds both images and the diff overlay to a vision model with object detection capabilities. The AI reports: “Component U7 moved 2.3mm north. Trace between R14 and C22 was rerouted. New via added at coordinates (34.2, 18.7).”
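One way to make the red blob easier to hand off is to reduce it to a bounding box before the vision model sees it. That step is an assumption added here for illustration, not something ImageGuard is confirmed to do, and `changed_region` plus the grid-of-tuples image type are hypothetical.

```python
from typing import Optional

Pixel = tuple[int, int, int]
Image = list[list[Pixel]]
Box = tuple[int, int, int, int]   # (left, top, right, bottom), inclusive


def changed_region(old: Image, new: Image) -> Optional[Box]:
    """Bounding box around every differing pixel, or None if the images match."""
    if len(old) != len(new) or any(len(a) != len(b) for a, b in zip(old, new)):
        raise ValueError("images must have identical dimensions")
    xs, ys = [], []
    for y, (row_old, row_new) in enumerate(zip(old, new)):
        for x, (p_old, p_new) in enumerate(zip(row_old, row_new)):
            if p_old != p_new:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    return (min(xs), min(ys), max(xs), max(ys))


bg, fg = (0, 0, 0), (255, 0, 0)
before = [[bg] * 4 for _ in range(4)]
after = [row[:] for row in before]
after[1][2] = fg   # change at x=2, y=1
after[3][1] = fg   # change at x=1, y=3

box = changed_region(before, after)
print(box)   # (1, 1, 2, 3)
```

The box stays on the deterministic side of the line. Naming what sits inside it ("Component U7 moved") is still the model's job.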

For biotech teams reviewing histology slides or medical device imagery, this matters enormously. I wrote about this in “Why Biotech Teams Need AI Governance Before AI Tools” — these teams need image governance more than they need code governance. A staining protocol change that’s invisible to the naked eye can invalidate an entire study.

The Architecture That Emerged

GitHub showed the way. Git diff for code was the existence proof. I just needed the same pattern for every artifact type.

The architecture landed on a BaseGuardLane abstract class. Every guard lane — code, PDF, spreadsheet, image — inherits from it and implements three methods: extract the artifact, compute the deterministic diff, and package the evidence bundle. The AI interpretation layer is shared. The human approval workflow is shared. The evidence sealing is shared.
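In Python, that shape could look roughly like the sketch below. Only the class name `BaseGuardLane` and the three responsibilities come from the description above. The method names, signatures, the shared `run` pipeline, and the toy `TextLane` subclass are all assumptions for illustration.

```python
from abc import ABC, abstractmethod
from itertools import zip_longest
from typing import Any


class BaseGuardLane(ABC):
    """Each lane supplies three artifact-specific steps; the pipeline is shared."""

    @abstractmethod
    def extract(self, raw: bytes) -> Any:
        """Turn raw bytes into a comparable representation."""

    @abstractmethod
    def diff(self, old: Any, new: Any) -> list[str]:
        """Compute the deterministic diff between two extracted versions."""

    @abstractmethod
    def package(self, changes: list[str]) -> dict[str, Any]:
        """Wrap the diff into an evidence bundle."""

    def run(self, old_raw: bytes, new_raw: bytes) -> dict[str, Any]:
        # Shared pipeline: extract both versions, diff them, package the result.
        changes = self.diff(self.extract(old_raw), self.extract(new_raw))
        return self.package(changes)


class TextLane(BaseGuardLane):
    """Toy lane: treats the artifact as UTF-8 lines and compares them by position."""

    def extract(self, raw: bytes) -> list[str]:
        return raw.decode("utf-8").splitlines()

    def diff(self, old: list[str], new: list[str]) -> list[str]:
        return [
            f"line {i}: {a!r} -> {b!r}"
            for i, (a, b) in enumerate(zip_longest(old, new), start=1)
            if a != b
        ]

    def package(self, changes: list[str]) -> dict[str, Any]:
        return {"lane": "text", "change_count": len(changes), "changes": changes}


bundle = TextLane().run(
    b"must comply\nsee appendix",
    b"should consider complying\nsee appendix",
)
print(bundle["changes"])
```

A new lane only has to fill in the three abstract methods. Trying to instantiate `BaseGuardLane` directly, or a subclass that skips one of them, raises `TypeError`.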

The GuardLaneType enum tells the story of where this is going:

CODE_GUARD -- shipped, production

PDF_GUARD -- shipped, production

IMAGE_GUARD -- shipped, production

SHEET_GUARD -- shipped, production

COMMS_GUARD -- beta

TICKET_GUARD -- beta

DEAL_GUARD -- beta

CONTRACT_GUARD -- beta

DEPLOY_GUARD -- beta

DATA_GUARD -- planned

EVIDENCE_GUARD -- planned

Four lanes live. Five in beta. Two more on the roadmap. Each one follows the same tripod: deterministic diff, AI interpretation, human approval.
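As a Python enum, the list above might be expressed like this. The member names are taken from the list. The string values, the `LaneStatus` enum, and the `LANE_STATUS` table are assumptions added so the counts can be checked in code.

```python
from enum import Enum


class LaneStatus(Enum):
    SHIPPED = "shipped"
    BETA = "beta"
    PLANNED = "planned"


class GuardLaneType(Enum):
    # Values are kept unique on purpose: duplicate values would become enum aliases.
    CODE_GUARD = "code_guard"
    PDF_GUARD = "pdf_guard"
    IMAGE_GUARD = "image_guard"
    SHEET_GUARD = "sheet_guard"
    COMMS_GUARD = "comms_guard"
    TICKET_GUARD = "ticket_guard"
    DEAL_GUARD = "deal_guard"
    CONTRACT_GUARD = "contract_guard"
    DEPLOY_GUARD = "deploy_guard"
    DATA_GUARD = "data_guard"
    EVIDENCE_GUARD = "evidence_guard"


# Hypothetical status table mirroring the annotations in the list above.
LANE_STATUS = {
    GuardLaneType.CODE_GUARD: LaneStatus.SHIPPED,
    GuardLaneType.PDF_GUARD: LaneStatus.SHIPPED,
    GuardLaneType.IMAGE_GUARD: LaneStatus.SHIPPED,
    GuardLaneType.SHEET_GUARD: LaneStatus.SHIPPED,
    GuardLaneType.COMMS_GUARD: LaneStatus.BETA,
    GuardLaneType.TICKET_GUARD: LaneStatus.BETA,
    GuardLaneType.DEAL_GUARD: LaneStatus.BETA,
    GuardLaneType.CONTRACT_GUARD: LaneStatus.BETA,
    GuardLaneType.DEPLOY_GUARD: LaneStatus.BETA,
    GuardLaneType.DATA_GUARD: LaneStatus.PLANNED,
    GuardLaneType.EVIDENCE_GUARD: LaneStatus.PLANNED,
}


def lanes_with(status: LaneStatus) -> list[GuardLaneType]:
    """Lanes at a given status, in definition order."""
    return [lane for lane in GuardLaneType if LANE_STATUS[lane] is status]


for status in LaneStatus:
    print(status.value, len(lanes_with(status)))
# shipped 4
# beta 5
# planned 2
```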

Wiring It Together: 11 n8n Nodes

Guard lanes are useless if they don’t plug into existing workflows. Nobody’s going to open a separate app to govern their spreadsheets. The governance has to live where the work happens.

That’s why I built 11 n8n nodes that wire guard lanes into automation workflows. CodeGuard, PDFGuard, SheetGuard, and ImageGuard each have dedicated nodes. A unified GuardGate node handles routing — feed it any artifact and it picks the right lane automatically. The EvidenceSeal node packages everything into a tamper-evident bundle at the end.
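The routing idea behind GuardGate can be sketched as a lookup. n8n custom nodes are typically written in TypeScript; Python is used here only to keep the examples in one language. The extension table and the `route` function are hypothetical, and the real node may well inspect file content instead of trusting the filename.

```python
from pathlib import PurePath

# Hypothetical mapping from file extension to guard lane name.
LANE_BY_SUFFIX = {
    ".py": "CODE_GUARD",
    ".ts": "CODE_GUARD",
    ".pdf": "PDF_GUARD",
    ".xlsx": "SHEET_GUARD",
    ".csv": "SHEET_GUARD",
    ".png": "IMAGE_GUARD",
    ".jpg": "IMAGE_GUARD",
    ".tiff": "IMAGE_GUARD",
}


def route(filename: str) -> str:
    """Pick a lane from the file extension; fail loudly rather than guess."""
    suffix = PurePath(filename).suffix.lower()
    try:
        return LANE_BY_SUFFIX[suffix]
    except KeyError:
        label = suffix or "files without an extension"
        raise ValueError(f"no guard lane registered for {label}") from None


print(route("510k_submission_v3.PDF"))   # PDF_GUARD
print(route("forecast_model.xlsx"))      # SHEET_GUARD
```

Raising on an unknown type is the conservative default for a governance gate: an artifact that cannot be diffed should stop the workflow, not slip through unreviewed.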

A typical workflow: a regulatory document gets uploaded to SharePoint. n8n detects the change, routes it through PDFGuard, the AI interpretation runs, the reviewer gets a Slack notification with the diff and the semantic summary, they approve or reject, and the evidence bundle gets sealed and stored. Total time added to the process: about 90 seconds. Evidence quality compared to “someone reviewed it, trust me”: incomparable.
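For a sense of what "sealed" can mean at the end of that workflow, here is a minimal sketch: a SHA-256 digest over a canonical JSON encoding of the bundle. This is one common approach, not a description of the actual EvidenceSeal format, and the field names and the `seal` and `verify` helpers are assumptions.

```python
import hashlib
import hmac
import json


def _canonical(bundle: dict) -> bytes:
    # Sorted keys and fixed separators so the same bundle always hashes the same way.
    return json.dumps(bundle, sort_keys=True, separators=(",", ":")).encode("utf-8")


def seal(bundle: dict) -> dict:
    """Attach a SHA-256 digest; refuse bundles that lack a recorded human decision."""
    if bundle.get("decision") not in {"approved", "rejected"}:
        raise ValueError("refusing to seal a bundle without a human decision")
    return {"bundle": bundle, "sha256": hashlib.sha256(_canonical(bundle)).hexdigest()}


def verify(sealed: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    expected = hashlib.sha256(_canonical(sealed["bundle"])).hexdigest()
    return hmac.compare_digest(expected, sealed["sha256"])


sealed = seal({
    "artifact": "filing.pdf",
    "changes": ["page 12: 14 pixels changed"],
    "summary": "Section 4.2 language softened",
    "reviewer": "sarah",
    "decision": "approved",
})
tampered = {"bundle": {**sealed["bundle"], "reviewer": "mallory"}, "sha256": sealed["sha256"]}

print(verify(sealed), verify(tampered))   # True False
```

A bare hash only detects tampering if the digest is stored somewhere the editor of the bundle cannot rewrite. A production system would more likely sign the digest or use a keyed HMAC.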

When It Stopped Being a Tool

This is the moment it clicked. When I had four guard lanes working — code, PDFs, spreadsheets, images — I wasn’t building a code review tool anymore. I was building work provenance infrastructure.

Every meaningful change in an organization produces an artifact. Code, documents, data, designs, contracts, communications. If you can diff it deterministically and interpret it with AI, you can govern it. If you can govern it, you can prove it was governed. If you can prove it was governed, you can survive an audit, a lawsuit, or a regulator asking “who approved this change and why?”

I wrote the manifesto version of this idea in “Everything Is a Diff.” That post was the theory. This is what it looks like when the theory becomes running code.

By my rough estimate, the four shipped guard lanes handle the artifact types that cover about 85% of regulated work product. The five beta lanes cover the next 10%. The remaining 5% will be custom lanes, and the BaseGuardLane class makes each of those a weekend project, not a quarter-long initiative.

The Tripod Holds

Every time I’m tempted to skip the deterministic diff and just let the vision model handle it, I remember the hallucination rate. Every time I’m tempted to skip the AI interpretation and just show raw diffs, I remember the CFO who needs to know “what does this formula change mean for our forecast?” not “cell D47 changed.”

Every time I’m tempted to skip the human approval and just auto-approve low-risk changes, I remember that the whole point is evidence that a human made a decision. Automation without accountability is just faster negligence.

Deterministic diff + AI interpretation + human approval. The tripod holds for code. It holds for PDFs. It holds for spreadsheets. It holds for images. It’ll hold for whatever artifact type comes next.

That’s not a code review tool. That’s governance infrastructure.

See all four guard lanes in action. Book a walkthrough: cal.com/davidyoussef