Multi-Model Council: Why One AI Reviewer Isn't Enough

A single AI model reviewing code has the same problem as a single human reviewer: blind spots. GuardSpine's multi-model council uses Claude, GPT, and Gemini to catch what no single model can.

Claude, reviewing alone, missed a SQL injection in a PR I was testing. The query used string interpolation instead of parameterized inputs. Claude focused on the business logic, which was correct, and treated the database call as a standard pattern. GPT-4 caught it in under two seconds.
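The PR itself is not reproduced here, so the following is only a minimal sketch of the pattern, using Python's built-in sqlite3 module and a hypothetical `users` table. The function names are illustrative.

```python
import sqlite3

# In-memory database with a hypothetical users table, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])


def find_user_unsafe(conn, name):
    # Vulnerable: the input becomes part of the SQL text itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: the value is bound separately and never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "x' OR '1'='1"
unsafe_rows = find_user_unsafe(conn, payload)  # matches every row in the table
safe_rows = find_user_safe(conn, payload)      # matches nothing
```

Both functions look like a routine lookup at a glance, which is why a reviewer concentrating on business logic can read past the first one.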

That incident ended the debate about single-model review. Here is what replaced it.

The Blind Spot Problem

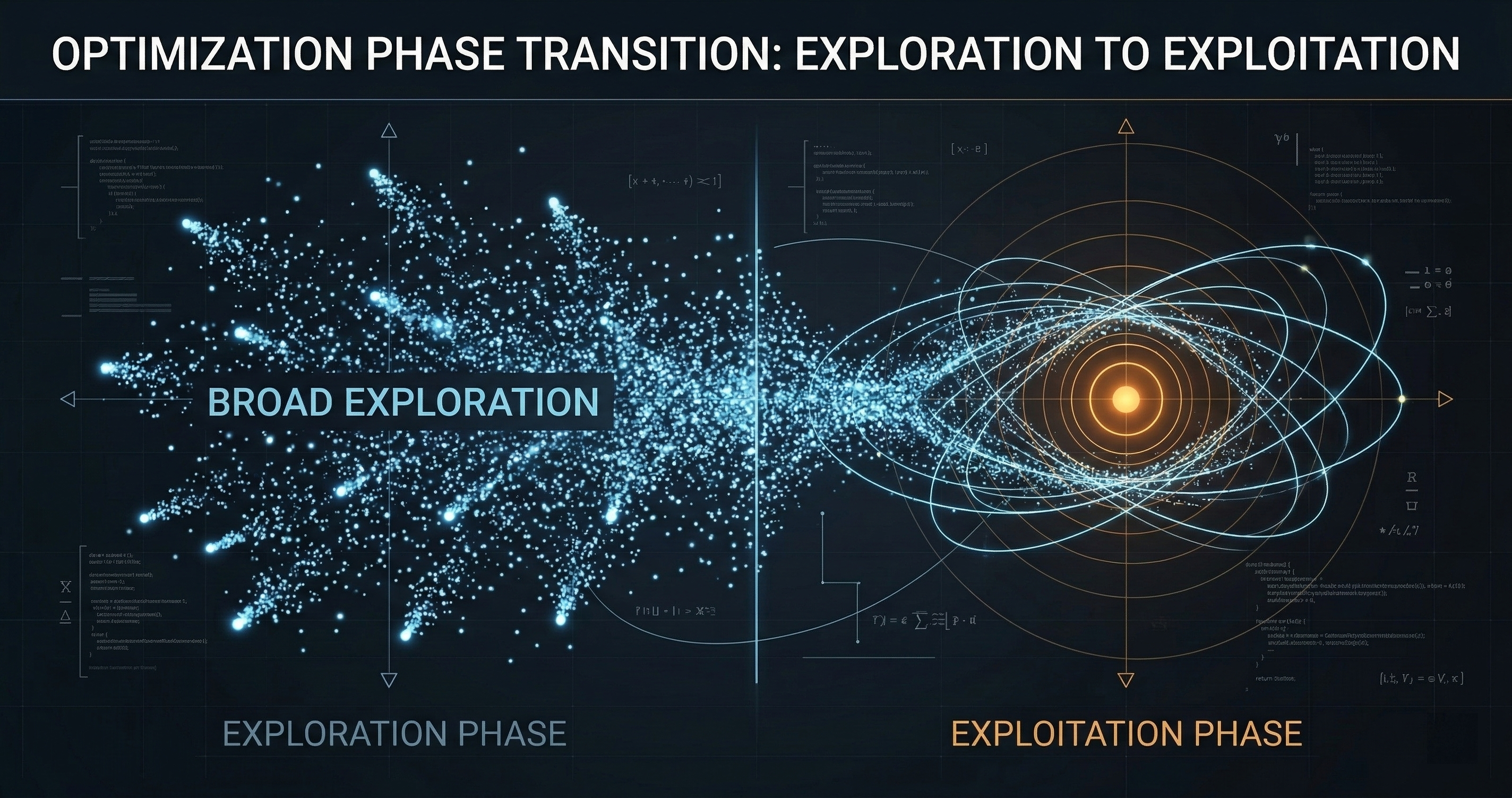

Every AI model has characteristic blind spots. Not random failures — systematic gaps in what they notice and what they skip.

Claude excels at logical reasoning, architectural coherence, and catching subtle control flow bugs. It sometimes underweights performance implications and can normalize patterns it has seen frequently in training data, even when those patterns are dangerous in your specific context.

GPT-4 is strong on security pattern recognition and API misuse detection. It occasionally over-flags stylistic issues as functional problems and can miss cross-file dependency chains that require holding a large context.

Gemini catches dependency version issues, configuration drift, and infrastructure-level concerns that the other models tend to skim past. It is less consistent on nuanced business logic review.

These are not defects. They are characteristics. The same way one human reviewer catches race conditions while another catches UX regressions, different models have different strengths. The fix is the same in both cases: use more than one reviewer.

How the Council Works

GuardSpine’s multi-model council is not a committee that votes. It is a structured review process with independent evaluation, consensus scoring, and documented dissent.

Step 1: Independent review. Each council member receives the same inputs: the diff, the risk classification, and the applicable rules. They do not see each other’s assessments. This prevents anchoring — where one model’s opinion biases the others.

Step 2: Structured assessment. Each model produces a review in a standardized format: verdict, risk score (0.0 to 1.0), specific findings with severity ratings, and a list of which rules were evaluated. The structure ensures comparability.

Step 3: Consensus scoring. The individual scores are aggregated into a consensus score. This is not a simple average. Findings flagged by multiple models receive higher weight. A security concern raised by all three models scores differently than one raised by a single model.

Step 4: Dissent documentation. When models disagree, the disagreement is recorded explicitly in the evidence bundle. If Claude approves but GPT flags a concern, both positions are preserved. Dissent is signal, not noise.

Step 5: Threshold evaluation. The consensus score is compared against the threshold for the change’s risk tier. An L0 change might need a consensus score below 0.5 to pass. An L3 change might need below 0.2, plus unanimous approval on security-related findings.
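The five steps can be sketched roughly as follows. This is not GuardSpine's implementation: the class names, the severity weights, and the 50/50 blend in `consensus_score` are assumptions chosen to illustrate "findings flagged by multiple models receive higher weight". The two thresholds are the article's own "might need" examples, and the L3 security rule is one possible reading of "unanimous approval".

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Assumed severity weights; GuardSpine's real values are not published here.
SEVERITY_WEIGHT = {"low": 0.25, "medium": 0.5, "high": 0.75, "critical": 1.0}
# Example thresholds from the text above (a change passes when score < threshold).
TIER_THRESHOLD = {"L0": 0.5, "L3": 0.2}


@dataclass
class Finding:
    rule_id: str
    severity: str
    security: bool = False


@dataclass
class Review:
    """Step 2: the standardized output each model produces independently."""
    model: str
    verdict: str        # "approve" or "flag"
    risk_score: float   # 0.0 to 1.0
    findings: List[Finding] = field(default_factory=list)
    rules_evaluated: List[str] = field(default_factory=list)


def consensus_score(reviews: List[Review]) -> float:
    """Step 3 (assumed formula): blend mean risk with multi-model agreement."""
    mean_risk = sum(r.risk_score for r in reviews) / len(reviews)
    flagged_by: Dict[str, Set[str]] = {}
    severity: Dict[str, str] = {}
    for r in reviews:
        for f in r.findings:
            flagged_by.setdefault(f.rule_id, set()).add(r.model)
            severity[f.rule_id] = f.severity
    # A finding weighs more the more models raised it.
    agreement = max(
        (SEVERITY_WEIGHT[severity[rule]] * len(models) / len(reviews)
         for rule, models in flagged_by.items()),
        default=0.0,
    )
    return 0.5 * mean_risk + 0.5 * agreement


def dissent(reviews: List[Review]) -> Dict[str, str]:
    """Step 4: keep every model's verdict whenever they disagree."""
    verdicts = {r.model: r.verdict for r in reviews}
    return verdicts if len(set(verdicts.values())) > 1 else {}


def passes(tier: str, reviews: List[Review]) -> bool:
    """Step 5: compare the consensus score with the tier threshold."""
    if consensus_score(reviews) >= TIER_THRESHOLD[tier]:
        return False
    if tier == "L3":
        # Assumed reading of "unanimous approval on security-related findings".
        return not any(f.security for r in reviews for f in r.findings)
    return True


reviews = [
    Review("claude", "approve", 0.1, [], ["sql-params"]),
    Review("gpt", "flag", 0.6, [Finding("sql-params", "high", True)], ["sql-params"]),
    Review("gemini", "approve", 0.2, [], ["sql-params"]),
]
score = consensus_score(reviews)  # about 0.275 with these assumed weights
```

With these made-up inputs the change would clear the L0 example threshold but not the L3 one, and the dissent record preserves all three verdicts.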

What the Council Actually Catches

In six months of running multi-model councils on production PRs, I have tracked detection rates by council size. The pattern is consistent.

Single-model detection rate for security issues: 73%. Any individual model, on its own, catches roughly three-quarters of the security concerns in a change.

Two-model detection rate: 89%. Adding a second independent reviewer pushes coverage to nearly nine in ten.

Three-model detection rate: 96%. The third model catches most of the edge cases that the first two miss.

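The math is straightforward. With a 27% miss rate per model, three fully independent reviewers would all miss the same issue about 2% of the time (0.27 × 0.27 × 0.27 ≈ 0.02). Real misses are partly correlated, because some issues are hard for every model, which is why the observed three-model miss rate is 4% rather than 2%. But they are far from perfectly correlated (different training data, different architectures, different characteristic blind spots), so each added model still closes most of the remaining gap.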
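The arithmetic can be checked in a few lines. The sketch below compares the detection rate that full independence would predict against the observed rates quoted above. The function name is illustrative, and the only inputs are the article's own figures.

```python
SINGLE_DETECT = 0.73  # single-model detection rate quoted above


def independent_detection(single_detect: float, n_models: int) -> float:
    """Detection rate if every model missed issues fully independently."""
    miss = 1.0 - single_detect
    return 1.0 - miss ** n_models


# Observed rates from the article, keyed by council size.
observed = {1: 0.73, 2: 0.89, 3: 0.96}

rows = []
for n, seen in observed.items():
    predicted = independent_detection(SINGLE_DETECT, n)
    rows.append((n, round(predicted, 3), seen))
    print(f"{n} model(s): independent prediction {predicted:.1%}, observed {seen:.0%}")
```

The observed two- and three-model rates sit slightly below the independent predictions (about 92.7% and 98.0%), which is what you would expect when misses overlap a little but not much.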

This is the same principle behind Linus’s Law — “given enough eyeballs, all bugs are shallow” — applied to AI reviewers. More independent perspectives means fewer blind spots.

Consensus vs. Unanimity

I deliberately chose consensus scoring over unanimity for a practical reason: unanimity creates a false-positive tax.

If you require all three models to approve before a change can merge, any single model’s over-sensitivity blocks the entire pipeline. GPT flags a stylistic concern as a security issue? Blocked. Gemini misidentifies a safe dependency update as a vulnerability? Blocked. The developer experience degrades until the team disables the system.

Consensus scoring handles this naturally. One model’s false positive is outweighed by two models that correctly identified the change as safe. The noise gets dampened. The signal gets amplified.

But consensus is not majority-rules either. The scoring is weighted by finding severity. A lone security-critical finding from one model is not overridden by two models that did not check for that specific pattern. Critical findings require explicit resolution regardless of the consensus score.
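A minimal sketch of that rule, assuming findings are plain dictionaries with a `severity` field and an optional `resolved` flag (both hypothetical names, not GuardSpine's schema):

```python
from typing import Dict, List


def authorize(consensus: float, threshold: float, findings: List[Dict]) -> str:
    """Critical findings block on their own; everything else goes by the score."""
    unresolved = [
        f for f in findings
        if f["severity"] == "critical" and not f.get("resolved", False)
    ]
    if unresolved:
        return "blocked: unresolved critical finding"
    if consensus >= threshold:
        return "blocked: consensus score at or above threshold"
    return "pass"


# One model over-flags a style issue: the low consensus score lets it through.
style_false_positive = [{"model": "gpt", "rule": "naming", "severity": "low"}]
# One model raises a critical issue: the same low score does not override it.
lone_critical = [{"model": "gpt", "rule": "sql-injection", "severity": "critical"}]

outcome_style = authorize(0.15, 0.5, style_false_positive)
outcome_critical = authorize(0.15, 0.5, lone_critical)
outcome_resolved = authorize(0.15, 0.5, [dict(lone_critical[0], resolved=True)])
```

Checking for critical findings before looking at the score is what keeps two silent models from outvoting one that found something serious.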

Configuration and Control

The council is configurable at multiple levels:

Model selection. Choose which models sit on your council. The default is Claude, GPT-4, and Gemini. Teams running air-gapped environments can substitute local models via Ollama through our local-council configuration.

Per-tier council composition. L0 changes might use a single model. L2 changes use two. L3 changes use all three plus mandatory human review. You control the review depth per risk level.

Custom rules. The rules each model evaluates are yours. GuardSpine ships with sensible defaults for common frameworks, but you define what matters for your codebase. Authentication patterns. Data handling policies. Performance budgets. The models evaluate against your rules, not generic best practices.

Threshold tuning. Consensus thresholds are adjustable per tier. Start strict, measure false positive rates, then loosen the thresholds where the data supports it. The evidence bundles capture every decision, so you can audit your own threshold choices over time.
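To make these knobs concrete, here is a hypothetical configuration expressed as Python data. It is not GuardSpine's actual configuration format: the names `TierPolicy`, `COUNCIL_MODELS`, and `council_for` are invented for illustration. The model counts and the two thresholds come from the examples in this article, and the L2 threshold is left unset because no value is given.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class TierPolicy:
    model_count: int             # how many council members review this tier
    threshold: Optional[float]   # pass when consensus score < threshold
    human_review: bool = False


# Default council named in the article; swap in local models as needed.
COUNCIL_MODELS: List[str] = ["claude", "gpt-4", "gemini"]

TIER_POLICIES: Dict[str, TierPolicy] = {
    "L0": TierPolicy(model_count=1, threshold=0.5),
    "L2": TierPolicy(model_count=2, threshold=None),  # set your own value
    "L3": TierPolicy(model_count=3, threshold=0.2, human_review=True),
}


def council_for(tier: str, models: Optional[List[str]] = None) -> List[str]:
    """Pick the council for a tier (assumption: take models in listed order)."""
    pool = COUNCIL_MODELS if models is None else models
    policy = TIER_POLICIES[tier]
    if policy.model_count > len(pool):
        raise ValueError(f"{tier} needs {policy.model_count} models, got {len(pool)}")
    return pool[:policy.model_count]


l0_council = council_for("L0")
l3_council = council_for("L3")
```

Keeping composition and thresholds in one table per tier makes it easy to review threshold changes alongside the evidence bundles they affect.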

The Evidence Trail

Every council session produces a complete evidence record. For a three-model review, the bundle contains:

- The diff at review time (content-hashed)

- The risk classification with reasoning

- Three independent review items, each with verdict, score, findings, and rules evaluated

- The consensus computation with individual weights

- Any dissent records

- The final authorization decision

This entire chain is sealed into the evidence bundle’s hash chain. Modify any review, remove a finding, change a score — the chain breaks. The math does not care about politics.
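The tamper-evidence property can be illustrated with a simple SHA-256 hash chain. This is a generic sketch, not GuardSpine's bundle format: the field names, the JSON canonicalization, and the all-zero genesis value are assumptions.

```python
import copy
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # assumed starting value for the first link


def _digest(prev_hash: str, item: Dict) -> str:
    # Canonical JSON so the same item always hashes to the same value.
    payload = json.dumps(item, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def seal(items: List[Dict]) -> List[Dict]:
    """Link each evidence item to the hash of everything before it."""
    chain, prev = [], GENESIS
    for item in items:
        current = _digest(prev, item)
        chain.append({"item": item, "prev": prev, "hash": current})
        prev = current
    return chain


def verify(chain: List[Dict]) -> bool:
    """Recompute every link; any edited, removed, or reordered item fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["item"]):
            return False
        prev = entry["hash"]
    return True


bundle = seal([
    {"type": "diff", "sha256": hashlib.sha256(b"example diff").hexdigest()},
    {"type": "review", "model": "gpt", "verdict": "flag", "score": 0.6},
    {"type": "decision", "result": "blocked"},
])

tampered = copy.deepcopy(bundle)
tampered[1]["item"]["score"] = 0.1  # quietly lower a review score

intact_ok = verify(bundle)
tampered_ok = verify(tampered)
```

Because each hash covers the previous hash, changing one review invalidates that link, and removing an entry invalidates every link after it.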

Why Not Just Use the Best Model?

Teams ask me this constantly. “Claude is the best at code review. Why not just use Claude?”

Two reasons.

First, “best” changes. Model capabilities shift with every release. The model that leads today may not lead next quarter. A multi-model architecture is resilient to that change. Swap models in and out without redesigning your governance pipeline.

Second, “best overall” does not mean “best at everything.” Claude might be the best single model for code review in aggregate, but GPT catches specific vulnerability patterns that Claude misses, and Gemini catches infrastructure issues that both miss. You do not want the best average. You want the best coverage.

The council gives you coverage. The evidence bundle proves you have it. That combination is what governance actually looks like.

If you want to see how the multi-model council performs on your codebase, book a session at cal.com/davidyoussef/guardspine. I will run your last ten PRs through the council and show you what each model catches that the others miss.